The Journal of Artificial Intelligence Research (JAIR) is dedicated to the rapid dissemination of important research results to the global artificial intelligence (AI) community. The journal’s scope encompasses all areas of AI, including agents and multi-agent systems, automated reasoning, constraint processing and search, knowledge representation, machine learning, natural language, planning and scheduling, robotics and vision, and uncertainty in AI.

Current Issue

Vol. 80 (2024)

Published: 2024-05-10

Estimating Agent Skill in Continuous Action Domains

Computing Pareto-Optimal and Almost Envy-Free Allocations of Indivisible Goods

The present and future of AI

Finale Doshi-Velez on how AI is shaping our lives and how we can shape AI

Finale Doshi-Velez, the John L. Loeb Professor of Engineering and Applied Sciences. (Photo courtesy of Eliza Grinnell/Harvard SEAS)

How has artificial intelligence changed and shaped our world over the last five years? How will AI continue to impact our lives in the coming years? Those were the questions addressed in the most recent report from the One Hundred Year Study on Artificial Intelligence (AI100), an ongoing project hosted at Stanford University, that will study the status of AI technology and its impacts on the world over the next 100 years.

The 2021 report is the second in a series that will be released every five years until 2116. Titled “Gathering Strength, Gathering Storms,” the report explores the various ways AI is increasingly touching people’s lives in settings that range from movie recommendations and voice assistants to autonomous driving and automated medical diagnoses.

Barbara Grosz, the Higgins Research Professor of Natural Sciences at the Harvard John A. Paulson School of Engineering and Applied Sciences (SEAS), is a member of the standing committee overseeing the AI100 project, and Finale Doshi-Velez, Gordon McKay Professor of Computer Science, is part of the panel of interdisciplinary researchers who wrote this year’s report.

We spoke with Doshi-Velez about the report, what it says about the role AI is currently playing in our lives, and how it will change in the future.

Q: Let's start with a snapshot: What is the current state of AI and its potential?

Doshi-Velez: Some of the biggest changes in the last five years have been how well AIs now perform in large data regimes on specific types of tasks. We've seen [DeepMind’s] AlphaZero become the best Go player entirely through self-play, and everyday uses of AI such as grammar checks and autocomplete, automatic personal photo organization and search, and speech recognition become commonplace for large numbers of people.

In terms of potential, I'm most excited about AIs that might augment and assist people. They can be used to drive insights in drug discovery, help with decision making such as identifying a menu of likely treatment options for patients, and provide basic assistance, such as lane keeping while driving or text-to-speech based on images from a phone for the visually impaired. In many situations, people and AIs have complementary strengths. I think we're getting closer to unlocking the potential of people and AI teams.

Q: Over the course of 100 years, these reports will tell the story of AI and its evolving role in society. Even though there have only been two reports, what's the story so far?

There's actually a lot of change even in five years. The first report is fairly rosy. For example, it mentions how algorithmic risk assessments may mitigate the human biases of judges. The second has a much more mixed view. I think this comes from the fact that as AI tools have come into the mainstream — both in higher stakes and everyday settings — we are appropriately much less willing to tolerate flaws, especially discriminatory ones. There's also been questions of information and disinformation control as people get their news, social media, and entertainment via searches and rankings personalized to them. So, there's a much greater recognition that we should not be waiting for AI tools to become mainstream before making sure they are ethical.

Q: What is the responsibility of institutes of higher education in preparing students and the next generation of computer scientists for the future of AI and its impact on society?

First, I'll say that the need to understand the basics of AI and data science starts much earlier than higher education! Children are being exposed to AIs as soon as they click on videos on YouTube or browse photo albums. They need to understand aspects of AI such as how their actions affect future recommendations.

But for computer science students in college, I think a key thing that future engineers need to realize is when to demand input and how to talk across disciplinary boundaries to get at often difficult-to-quantify notions of safety, equity, fairness, etc. I'm really excited that Harvard has the Embedded EthiCS program to provide some of this education. Of course, this is in addition to standard good engineering practices like building robust models, validating them, and so forth, which is all a bit harder with AI.

Q: Your work focuses on machine learning with applications to healthcare, which is also an area of focus of this report. What is the state of AI in healthcare?

A lot of AI in healthcare has been on the business end, used for optimizing billing, scheduling surgeries, that sort of thing. When it comes to AI for better patient care, which is what we usually think about, there are few legal, regulatory, and financial incentives to do so, and many disincentives. Still, there's been slow but steady integration of AI-based tools, often in the form of risk scoring and alert systems.

In the near future, two applications that I'm really excited about are triage in low-resource settings — having AIs do initial reads of pathology slides, for example, if there are not enough pathologists, or get an initial check of whether a mole looks suspicious — and ways in which AIs can help identify promising treatment options for discussion with a clinician team and patient.

Q: Any predictions for the next report?

I'll be keen to see where currently nascent AI regulation initiatives have gotten to. Accountability is such a difficult question in AI, it's tricky to nurture both innovation and basic protections. Perhaps the most important innovation will be in approaches for AI accountability.

Topics: AI / Machine Learning, Computer Science

Artificial intelligence and machine learning research: towards digital transformation at a global scale

- Published: 17 April 2021

- Volume 13, pages 3319–3321 (2022)

Akila Sarirete (1), Zain Balfagih (1), Tayeb Brahimi (1), Miltiadis D. Lytras (1, 2) & Anna Visvizi (3, 4)

Artificial intelligence (AI) is reshaping how we live, learn, and work. Until recently, AI was a fanciful concept, more closely associated with science fiction than with anything else. However, driven by unprecedented advances in sophisticated information and communication technology (ICT), AI today is synonymous with technological progress, both that already attained and that yet to come, in all spheres of our lives (Chui et al. 2018; Lytras et al. 2018, 2019).

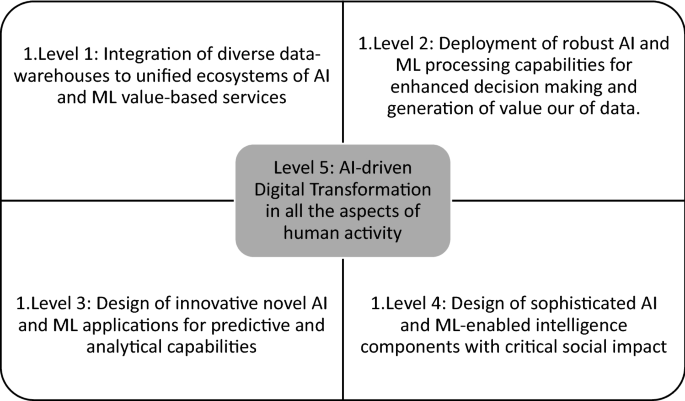

Considering that Machine Learning (ML) and AI are apt to reach unforeseen levels of accuracy and efficiency, this special issue sought to promote research on AI and ML seen as functions of data-driven innovation and digital transformation. The combination of expanding ICT-driven capabilities and capacities identifiable across our socio-economic systems, along with growing consumer expectations vis-à-vis technology and its added value for our societies, requires multidisciplinary research and a research agenda on AI and ML (Lytras et al. 2021; Visvizi et al. 2020; Chui et al. 2020). Such a research agenda should revolve around the following five defining issues (Fig. 1):

Fig. 1 An AI-driven digital transformation in all aspects of human activity. Source: The authors

Integration of diverse data-warehouses to unified ecosystems of AI and ML value-based services

Deployment of robust AI and ML processing capabilities for enhanced decision making and generation of value out of data

Design of innovative novel AI and ML applications for predictive and analytical capabilities

Design of sophisticated AI and ML-enabled intelligence components with critical social impact

Promotion of the Digital Transformation in all the aspects of human activity including business, healthcare, government, commerce, social intelligence etc.

Such development will also have a critical impact on government, policies, regulations, and initiatives aiming to interpret the value of the AI-driven digital transformation to the sustainable economic development of our planet. Additionally, the disruptive character of AI and ML technology and research will require further research on business models and management of innovation capabilities.

This special issue is based on submissions invited from the 17th Annual Learning and Technology Conference 2019, held at Effat University, together with an open call. Several very good submissions were received. All of them were subjected to the rigorous peer review process of the Journal of Ambient Intelligence and Humanized Computing.

The papers published in this special issue cover a variety of innovative topics, including:

Stock market Prediction using Machine learning

Detection of Apple Diseases and Pests based on Multi-Model LSTM-based Convolutional Neural Networks

ML for Searching

Machine Learning for Learning Automata

Entity recognition & Relation Extraction

Intelligent Surveillance Systems

Activity Recognition and K-Means Clustering

Distributed Mobility Management

Review Rating Prediction with Deep Learning

Cybersecurity: Botnet detection with Deep learning

Self-Training methods

Neuro-Fuzzy Inference systems

Fuzzy Controllers

Monarch Butterfly Optimized Control with Robustness Analysis

GMM methods for speaker age and gender classification

Regression methods for Permeability Prediction of Petroleum Reservoirs

Surface EMG Signal Classification

Pattern Mining

Human Activity Recognition in Smart Environments

Teaching–Learning based Optimization Algorithm

Big Data Analytics

Diagnosis based on Event-Driven Processing and Machine Learning for Mobile Healthcare

Over a decade ago, Effat University envisioned a timely platform that brings together educators, researchers and tech enthusiasts under one roof and functions as a fount of creativity and innovation. The dream was that such a platform would bridge the existing gap and become a leading hub for innovators across disciplines to share their knowledge and exchange novel ideas. It was in 2003 that this dream was realized and the first Learning & Technology Conference was held. Up until today, the conference has covered a variety of cutting-edge themes such as Digital Literacy, Cyber Citizenship, Edutainment, Massive Open Online Courses, and many, many others. The conference has also attracted key, prominent figures in the fields of science and technology such as Farouq El Baz from NASA, Queen Rania Al-Abdullah of Jordan, and many others who addressed large, eager-to-learn audiences and inspired many with unique stories.

While emerging innovations, such as Artificial Intelligence technologies, are seen today as promising instruments that could pave our way to the future, these were also the focal points around which fruitful discussions have always taken place here at the L&T. AI was selected as the theme for this conference due to its great impact. The Saudi government has recognized this impact and has already taken concrete steps to invest in AI. As stated in the Kingdom's Vision 2030: "In technology, we will increase our investments in, and lead, the digital economy." Dr. Ahmed Al Theneyan, Deputy Minister of Technology, Industry and Digital Capabilities, stated that: "The Government has invested around USD 3 billion in building the infrastructure so that the country is AI-ready and can become a leader in AI use." Vision 2030 programs also promote innovation in technologies. Another major step the country has taken is the establishment of NEOM, the model smart city.

Effat University recognized this ambition and began working to make it a reality by offering academic programs that support the different sectors needed in such projects. For example, the master's program in Energy Engineering was launched four years ago to support the energy sector. Also, the bachelor's program in Computer Science has tracks in Artificial Intelligence and Cyber Security, which were launched in the Fall 2020 semester. Additionally, the Energy & Technology and Smart Building Research Centers were established to support innovation in the technology and energy sectors. In general, Effat University works effectively to support the KSA in achieving its vision in this time of national transformation by graduating skilled citizens in different fields of technology.

The guest editors would like to take this opportunity to thank all the authors for the effort they put into the preparation of their manuscripts and for their valuable contributions. We wish to express our deepest gratitude to the referees, who provided instrumental and constructive feedback to the authors. We also extend our sincere thanks and appreciation to the organizing team under the leadership of the Chair of the L&T 2019 Conference Steering Committee, Dr. Haifa Jamal Al-Lail, University President, for her support and dedication.

Our sincere thanks go to the Editor-in-Chief for his kind help and support.

Chui KT, Lytras MD, Visvizi A (2018) Energy sustainability in smart cities: artificial intelligence, smart monitoring, and optimization of energy consumption. Energies 11(11):2869

Chui KT, Fung DCL, Lytras MD, Lam TM (2020) Predicting at-risk university students in a virtual learning environment via a machine learning algorithm. Comput Human Behav 107:105584

Lytras MD, Visvizi A, Daniela L, Sarirete A, De Pablos PO (2018) Social networks research for sustainable smart education. Sustainability 10(9):2974

Lytras MD, Visvizi A, Sarirete A (2019) Clustering smart city services: perceptions, expectations, responses. Sustainability 11(6):1669

Lytras MD, Visvizi A, Chopdar PK, Sarirete A, Alhalabi W (2021) Information management in smart cities: turning end users’ views into multi-item scale development, validation, and policy-making recommendations. Int J Inf Manag 56:102146

Visvizi A, Jussila J, Lytras MD, Ijäs M (2020) Tweeting and mining OECD-related microcontent in the post-truth era: A cloud-based app. Comput Human Behav 107:105958

Author information

Authors and affiliations.

Effat College of Engineering, Effat Energy and Technology Research Center, Effat University, P.O. Box 34689, Jeddah, Saudi Arabia

Akila Sarirete, Zain Balfagih, Tayeb Brahimi & Miltiadis D. Lytras

King Abdulaziz University, Jeddah, 21589, Saudi Arabia

Miltiadis D. Lytras

Effat College of Business, Effat University, P.O. Box 34689, Jeddah, Saudi Arabia

Anna Visvizi

Institute of International Studies (ISM), SGH Warsaw School of Economics, Aleja Niepodległości 162, 02-554, Warsaw, Poland

Corresponding author

Correspondence to Akila Sarirete.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Sarirete, A., Balfagih, Z., Brahimi, T. et al. Artificial intelligence and machine learning research: towards digital transformation at a global scale. J Ambient Intell Human Comput 13, 3319–3321 (2022). https://doi.org/10.1007/s12652-021-03168-y

Issue Date: July 2022

Artificial Intelligence

Authors and titles for recent submissions.

Tue, 21 May 2024 (showing first 25 of 143 entries)

Artificial Intelligence and the Skill Premium

How will the emergence of ChatGPT and other forms of artificial intelligence (AI) affect the skill premium? To address this question, we propose a nested constant elasticity of substitution production function that distinguishes among three types of capital: traditional physical capital (machines, assembly lines), industrial robots, and AI. Following the literature, we assume that industrial robots predominantly substitute for low-skill workers, whereas AI mainly helps to perform the tasks of high-skill workers. We show that AI reduces the skill premium as long as it is more substitutable for high-skill workers than low-skill workers are for high-skill workers.
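The substitutability condition in the abstract can be illustrated with a small numerical sketch. The functional form below is an assumption made for illustration and is not taken from the paper: it is a two-level CES in which AI (`G`) and high-skill labor (`L_s`) form an inner composite with parameter `theta`, and that composite is combined with low-skill labor (`L_u`) with parameter `gamma`. Physical capital and industrial robots are left out to keep the example short, and all parameter names and values are hypothetical. Under this form, a larger `theta` than `gamma` means AI is more substitutable for high-skill workers than low-skill workers are for the high-skill composite.

```python
def skill_premium(L_u, L_s, G, beta=0.5, gamma=0.5, theta=0.8):
    """Return w_s / w_u, the ratio of high-skill to low-skill marginal products.

    Assumed (hypothetical) production function, not the paper's exact one:
        H = (L_s**theta + G**theta) ** (1 / theta)
        Y = ((1 - beta) * L_u**gamma + beta * H**gamma) ** (1 / gamma)

    Differentiating Y gives
        w_s = Y**(1 - gamma) * beta * H**(gamma - theta) * L_s**(theta - 1)
        w_u = Y**(1 - gamma) * (1 - beta) * L_u**(gamma - 1)
    so the common factor Y**(1 - gamma) cancels in the ratio.
    """
    H = (L_s**theta + G**theta) ** (1.0 / theta)
    w_s_scaled = beta * H ** (gamma - theta) * L_s ** (theta - 1.0)
    w_u_scaled = (1.0 - beta) * L_u ** (gamma - 1.0)
    return w_s_scaled / w_u_scaled


if __name__ == "__main__":
    # Compare a low and a high AI stock for two illustrative values of theta.
    for theta in (0.8, 0.3):
        low_ai = skill_premium(1.0, 1.0, G=0.5, theta=theta)
        high_ai = skill_premium(1.0, 1.0, G=2.0, theta=theta)
        direction = "falls" if high_ai < low_ai else "rises"
        print(f"theta={theta}, gamma=0.5: premium {direction} as AI grows")
```

In this sketch the premium depends on `G` only through the factor `H**(gamma - theta)`, so more AI lowers the premium exactly when `theta > gamma`, which mirrors the condition stated in the abstract under the assumed functional form.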

Corresponding author: David E. Bloom. The views expressed herein are those of the authors and do not necessarily reflect the views of the National Bureau of Economic Research.

Assessing the Feasibility of Processing a Paper-based Multilingual Social Needs Screening Questionnaire Using Artificial Intelligence

Obinna I Ekekezie

The collection of Social Determinants of Health (SDoH) data is increasingly mandated by healthcare payers, yet traditional paper-based methods pose challenges in terms of cost effectiveness, accuracy, and completeness when manually entered into Electronic Health Records (EHRs). This study explores the application of artificial intelligence (AI), specifically using a document understanding model (Microsoft Azure Document Intelligence) and large language models (OpenAI's GPT-4 Turbo and GPT-3.5 Turbo), to automate the conversion of paper-based SDoH questionnaires into structured, machine-readable formats that could theoretically be incorporated into EHRs. Using a dataset of synthetic and scanned examples, the study compares the performance of the GPT-3.5 Turbo and GPT-4 Turbo base models and fine-tuned GPT-3.5 Turbo models on this task. Findings indicate that GPT-4 Turbo outperforms GPT-3.5 Turbo in accuracy and consistency, with fine-tuning enhancing GPT-3.5 Turbo's performance and consistency in several languages. These results suggest that AI could prove to be an accurate alternative to manual data entry, with important implications for improving how SDoH data is incorporated into EHRs. Future research should address data privacy, security concerns, cost considerations, and the technical aspects of incorporating AI-generated data into EHRs.

Competing Interest Statement

The authors have declared no competing interest.

Funding Statement

This study did not receive any funding.

Author Declarations

I confirm all relevant ethical guidelines have been followed, and any necessary IRB and/or ethics committee approvals have been obtained.

I confirm that all necessary patient/participant consent has been obtained and the appropriate institutional forms have been archived, and that any patient/participant/sample identifiers included were not known to anyone (e.g., hospital staff, patients or participants themselves) outside the research group so cannot be used to identify individuals.

I understand that all clinical trials and any other prospective interventional studies must be registered with an ICMJE-approved registry, such as ClinicalTrials.gov. I confirm that any such study reported in the manuscript has been registered and the trial registration ID is provided (note: if posting a prospective study registered retrospectively, please provide a statement in the trial ID field explaining why the study was not registered in advance).

I have followed all appropriate research reporting guidelines, such as any relevant EQUATOR Network research reporting checklist(s) and other pertinent material, if applicable.

- Additional mention of related prior research in introduction

- More robust dataset and methodology yielding new results

- Revised manuscript and supplemental files accordingly

Data Availability

All data produced in the present study are available upon reasonable request to the author. In addition, a GitHub repo containing the results from assessing GPT-3.5 Turbo on the training synthetic examples and from comparing models on the two testing datasets (synthetic and scanned) as well as the LLM calls and responses is available. The repo also contains the notebooks (HTML) referenced in the Supplementary Appendix.

https://github.com/oekekezie/poc-ai-sdoh-questionnaire-v2/

Senators Propose $32 Billion in Annual A.I. Spending but Defer Regulation

Their plan is the culmination of a yearlong listening tour on the dangers of the new technology.

By Cecilia Kang and David McCabe

Cecilia Kang and David McCabe cover technology policy.

A bipartisan group of senators released a long-awaited legislative plan for artificial intelligence on Wednesday, calling for billions in funding to propel American leadership in the technology while offering few details on regulations to address its risks.

In a 20-page document titled “Driving U.S. Innovation in Artificial Intelligence,” the Senate leader, Chuck Schumer, and three colleagues called for spending $32 billion annually by 2026 for government and private-sector research and development of the technology.

The lawmakers recommended creating a federal data privacy law and said they supported legislation, planned for introduction on Wednesday, that would prevent the use of realistic misleading technology known as deepfakes in election campaigns. But they said congressional committees and agencies should come up with regulations on A.I., including protections against health and financial discrimination, the elimination of jobs, and copyright violations caused by the technology.

“It’s very hard to do regulations because A.I. is changing too quickly,” Mr. Schumer, a New York Democrat, said in an interview. “We didn’t want to rush this.”

He designed the road map with two Republican senators, Mike Rounds of South Dakota and Todd Young of Indiana, and a fellow Democrat, Senator Martin Heinrich of New Mexico, after their yearlong listening tour to hear concerns about new generative A.I. technologies. Those tools, like OpenAI’s ChatGPT, can generate realistic and convincing images, videos, audio and text. Tech leaders have warned about the potential harms of A.I., including the obliteration of entire job categories, election interference, discrimination in housing and finance, and even the replacement of humankind.

The senators’ decision to delay A.I. regulation widens a gap between the United States and the European Union, which this year adopted a law that prohibits A.I.’s riskiest uses, including some facial recognition applications and tools that can manipulate behavior or discriminate. The European law requires transparency around how systems operate and what data they collect. Dozens of U.S. states have also proposed privacy and A.I. laws that would prohibit certain uses of the technology.

Outside of recent legislation mandating the sale or ban of the social media app TikTok, Congress hasn’t passed major tech legislation in years, despite multiple proposals.

“It’s disappointing because at this point we’ve missed several windows of opportunity to act while the rest of the world has,” said Amba Kak, a co-executive director of the nonprofit AI Now Institute and a former adviser on A.I. to the Federal Trade Commission.

Mr. Schumer’s efforts on A.I. legislation began in June with a series of high-profile forums that brought together tech leaders including Elon Musk of Tesla, Sundar Pichai of Google and Sam Altman of OpenAI.

(The New York Times has sued OpenAI and its partner, Microsoft, over use of the publication’s copyrighted works in A.I. development.)

Mr. Schumer said in the interview that through the forums, lawmakers had begun to understand the complexity of A.I. technologies and how expert agencies and congressional committees were best equipped to create regulations.

The legislative road map encourages greater federal investment in the growth of domestic research and development.

“This is sort of the American way — we are more entrepreneurial,” Mr. Schumer said in the interview, adding that the lawmakers hoped to make “innovation the North Star.”

In a separate briefing with reporters, he said the Senate was more likely to consider A.I. proposals piecemeal instead of in one large legislative package.

“What we’d expect is that we would have some bills that certainly pass the Senate and hopefully pass the House by the end of the year,” Mr. Schumer said. “It won’t cover the whole waterfront. There’s too much waterfront to cover, and things are changing so rapidly.”

He added that his staff had spoken with Speaker Mike Johnson’s office

Maya Wiley, president of the Leadership Conference on Civil and Human Rights, participated in the first forum. She said that the closed-door meetings were “tech industry heavy” and that the report’s focus on promoting innovation overshadowed the real-world harms that could result from A.I. systems, noting that health and financial tools had already shown signs of discrimination against certain ethnic and racial groups.

Ms. Wiley has called for greater focus on the vetting of new products to make sure they are safe and operate without biases that can target certain communities.

“We should not assume that we don’t need additional rights,” she said.

Cecilia Kang reports on technology and regulatory policy and is based in Washington D.C. She has written about technology for over two decades.

David McCabe covers tech policy. He joined The Times from Axios in 2019.

Innovation (Camb), v.2(4); 2021 Nov 28

Artificial intelligence: A powerful paradigm for scientific research

1 Institute of Computing Technology, Chinese Academy of Sciences, Beijing 100190, China

35 University of Chinese Academy of Sciences, Beijing 100049, China

5 Academy of Mathematics and Systems Science, Chinese Academy of Sciences, Beijing 100190, China

10 Zhongshan Hospital Institute of Clinical Science, Fudan University, Shanghai 200032, China

Changping Huang

18 Aerospace Information Research Institute, Chinese Academy of Sciences, Beijing 100094, China

11 Institute of Physics, Chinese Academy of Sciences, Beijing 100190, China

37 Songshan Lake Materials Laboratory, Dongguan, Guangdong 523808, China

26 Institute of High Energy Physics, Chinese Academy of Sciences, Beijing 100049, China

Xingchen Liu

28 Institute of Coal Chemistry, Chinese Academy of Sciences, Taiyuan 030001, China

2 Institute of Software, Chinese Academy of Sciences, Beijing 100190, China

Fengliang Dong

3 National Center for Nanoscience and Technology, Beijing 100190, China

Cheng-Wei Qiu

4 Department of Electrical and Computer Engineering, National University of Singapore, Singapore 117583, Singapore

6 Department of Gynaecology, Obstetrics and Gynaecology Hospital, Fudan University, Shanghai 200011, China

36 Shanghai Key Laboratory of Female Reproductive Endocrine-Related Diseases, Shanghai 200011, China

7 School of Food Science and Technology, Dalian Polytechnic University, Dalian 116034, China

41 Second Affiliated Hospital School of Medicine, and School of Public Health, Zhejiang University, Hangzhou 310058, China

8 Department of Obstetrics and Gynecology, Peking University Third Hospital, Beijing 100191, China

9 Zhejiang Provincial People’s Hospital, Hangzhou 310014, China

Chenguang Fu

12 School of Materials Science and Engineering, Zhejiang University, Hangzhou 310027, China

Zhigang Yin

13 Fujian Institute of Research on the Structure of Matter, Chinese Academy of Sciences, Fuzhou 350002, China

Ronald Roepman

14 Medical Center, Radboud University, 6500 Nijmegen, the Netherlands

Sabine Dietmann

15 Institute for Informatics, Washington University School of Medicine, St. Louis, MO 63110, USA

Marko Virta

16 Department of Microbiology, University of Helsinki, 00014 Helsinki, Finland

Fredrick Kengara

17 School of Pure and Applied Sciences, Bomet University College, Bomet 20400, Kenya

19 Agriculture College of Shihezi University, Xinjiang 832000, China

Taolan Zhao

20 Institute of Genetics and Developmental Biology, Chinese Academy of Sciences, Beijing 100101, China

21 The Brain Cognition and Brain Disease Institute, Shenzhen Institute of Advanced Technology, Chinese Academy of Sciences, Shenzhen 518055, China

38 Shenzhen-Hong Kong Institute of Brain Science-Shenzhen Fundamental Research Institutions, Shenzhen 518055, China

Jialiang Yang

22 Geneis (Beijing) Co., Ltd, Beijing 100102, China

23 Department of Communication Studies, Hong Kong Baptist University, Hong Kong, China

24 South China Botanical Garden, Chinese Academy of Sciences, Guangzhou 510650, China

39 Center of Economic Botany, Core Botanical Gardens, Chinese Academy of Sciences, Guangzhou 510650, China

Zhaofeng Liu

27 Shanghai Astronomical Observatory, Chinese Academy of Sciences, Shanghai 200030, China

29 Suzhou Institute of Nano-Tech and Nano-Bionics, Chinese Academy of Sciences, Suzhou 215123, China

Xiaohong Liu

30 Chongqing Institute of Green and Intelligent Technology, Chinese Academy of Sciences, Chongqing 400714, China

James P. Lewis

James M. Tiedje

34 Center for Microbial Ecology, Department of Plant, Soil and Microbial Sciences, Michigan State University, East Lansing, MI 48824, USA

40 Zhejiang Lab, Hangzhou 311121, China

25 Shanghai Institute of Nutrition and Health, Chinese Academy of Sciences, Shanghai 200031, China

31 Department of Computer Science, Aberystwyth University, Aberystwyth, Ceredigion SY23 3FL, UK

Zhipeng Cai

32 Department of Computer Science, Georgia State University, Atlanta, GA 30303, USA

33 Institute of Soil Science, Chinese Academy of Sciences, Nanjing 210008, China

Jiabao Zhang

Artificial intelligence (AI) coupled with promising machine learning (ML) techniques well known from computer science is broadly affecting many aspects of various fields including science and technology, industry, and even our day-to-day life. The ML techniques have been developed to analyze high-throughput data with a view to obtaining useful insights, categorizing, predicting, and making evidence-based decisions in novel ways, which will promote the growth of novel applications and fuel the sustainable booming of AI. This paper undertakes a comprehensive survey on the development and application of AI in different aspects of fundamental sciences, including information science, mathematics, medical science, materials science, geoscience, life science, physics, and chemistry. The challenges that each discipline of science meets, and the potentials of AI techniques to handle these challenges, are discussed in detail. Moreover, we shed light on new research trends entailing the integration of AI into each scientific discipline. The aim of this paper is to provide a broad research guideline on fundamental sciences with potential infusion of AI, to help motivate researchers to deeply understand the state-of-the-art applications of AI-based fundamental sciences, and thereby to help promote the continuous development of these fundamental sciences.


Public summary

- “Can machines think?” The goal of artificial intelligence (AI) is to enable machines to mimic human thoughts and behaviors, including learning, reasoning, predicting, and so on.

- “Can AI do fundamental research?” AI coupled with machine learning techniques is impacting a wide range of fundamental sciences, including mathematics, medical science, physics, etc.

- “How does AI accelerate fundamental research?” New research and applications are emerging rapidly with the support of AI infrastructure, including data storage, computing power, AI algorithms, and frameworks.

Introduction

“Can machines think?” Alan Turing posed this question in his famous paper “Computing Machinery and Intelligence.” 1 He held that answering it requires a definition of thinking. However, thinking is difficult to define clearly, because it is a subjective behavior. Turing therefore introduced an indirect method for verifying whether a machine can think, the Turing test, which examines a machine's ability to exhibit intelligence indistinguishable from that of human beings. A machine that passes the test qualifies to be labeled as artificial intelligence (AI).

AI refers to the simulation of human intelligence by a system or a machine. The goal of AI is to develop a machine that can think like humans and mimic human behaviors, including perceiving, reasoning, learning, planning, predicting, and so on. Intelligence is one of the main characteristics that distinguishes human beings from animals. With successive industrial revolutions, an increasing number of machine types have continuously replaced human labor in all walks of life, and the imminent replacement of human resources by machine intelligence is the next big challenge to be overcome. Numerous scientists are focusing on the field of AI, which makes research in the field rich and diverse. AI research fields include search algorithms, knowledge graphs, natural language processing, expert systems, evolutionary algorithms, machine learning (ML), deep learning (DL), and so on.

The general framework of AI is illustrated in Figure 1 . The development process of AI includes perceptual intelligence, cognitive intelligence, and decision-making intelligence. Perceptual intelligence means that a machine has the basic abilities of vision, hearing, touch, etc., which are familiar to humans. Cognitive intelligence is a higher-level ability of induction, reasoning and acquisition of knowledge. It is inspired by cognitive science, brain science, and brain-like intelligence to endow machines with thinking logic and cognitive ability similar to human beings. Once a machine has the abilities of perception and cognition, it is often expected to make optimal decisions as human beings, to improve the lives of people, industrial manufacturing, etc. Decision intelligence requires the use of applied data science, social science, decision theory, and managerial science to expand data science, so as to make optimal decisions. To achieve the goal of perceptual intelligence, cognitive intelligence, and decision-making intelligence, the infrastructure layer of AI, supported by data, storage and computing power, ML algorithms, and AI frameworks is required. Then by training models, it is able to learn the internal laws of data for supporting and realizing AI applications. The application layer of AI is becoming more and more extensive, and deeply integrated with fundamental sciences, industrial manufacturing, human life, social governance, and cyberspace, which has a profound impact on our work and lifestyle.

Figure 1. The general framework of AI

History of AI

The beginning of modern AI research can be traced back to John McCarthy, who coined the term “artificial intelligence (AI)” at a conference at Dartmouth College in 1956. This symbolized the birth of the AI scientific field. Progress in the following years was astonishing. Many scientists and researchers focused on automated reasoning and applied AI to proving mathematical theorems and solving algebraic problems. One famous example is Logic Theorist, a computer program written by Allen Newell, Herbert A. Simon, and Cliff Shaw, which proved 38 of the first 52 theorems in “Principia Mathematica” and provided more elegant proofs for some. 2 These successes made many AI pioneers wildly optimistic, and underpinned the belief that fully intelligent machines would be built in the near future. However, they soon realized that there was still a long way to go before the end goal of human-equivalent intelligence in machines could be reached. Many nontrivial problems could not be handled by the logic-based programs. Another challenge was the lack of computational resources for increasingly complicated problems. As a result, organizations and funders stopped supporting these under-delivering AI projects.

AI came back to popularity in the 1980s, as several research institutions and universities invented a type of AI system that summarizes a series of basic rules from expert knowledge to help non-experts make specific decisions. These systems are known as “expert systems.” Examples are XCON, designed by Carnegie Mellon University, and MYCIN, designed by Stanford University. For the first time, expert systems derived logic rules from expert knowledge to solve real-world problems. The core of AI research during this period was the knowledge that made machines “smarter.” However, expert systems gradually revealed several disadvantages, such as privacy technologies, lack of flexibility, poor versatility, expensive maintenance cost, and so on. At the same time, the Fifth Generation Computer Project, heavily funded by the Japanese government, failed to meet most of its original goals. Once again, the funding for AI research ceased, and AI reached the second low point of its history.

In 2006, Geoffrey Hinton and coworkers 3 , 4 made a breakthrough in AI by proposing an approach of building deeper neural networks, as well as a way to avoid gradient vanishing during training. This reignited AI research, and DL algorithms have become one of the most active fields of AI research. DL is a subset of ML based on multiple layers of neural networks with representation learning, 5 while ML is a part of AI that a computer or a program can use to learn and acquire intelligence without human intervention. Thus, “learn” is the keyword of this era of AI research. Big data technologies, and the improvement of computing power have made deriving features and information from massive data samples more efficient. An increasing number of new neural network structures and training methods have been proposed to improve the representative learning ability of DL, and to further expand it into general applications. Current DL algorithms match and exceed human capabilities on specific datasets in the areas of computer vision (CV) and natural language processing (NLP). AI technologies have achieved remarkable successes in all walks of life, and continued to show their value as backbones in scientific research and real-world applications.

Within AI, ML is having a substantial and broad effect across many aspects of technology and science: from computer science to geoscience to materials science, from life science to medical science to chemistry to mathematics and to physics, from management science to economics to psychology, and other data-intensive empirical sciences. ML methods have been developed to analyze high-throughput data to obtain useful insights, categorize, predict, and make evidence-based decisions in novel ways. Training a system by presenting it with examples of the desired input-output behavior can be far easier than programming it manually by anticipating the desired response for all potential inputs. The following sections survey eight fundamental sciences, namely information science (informatics), mathematics, medical science, materials science, geoscience, life science, physics, and chemistry, which develop or exploit AI techniques to promote the development of sciences and accelerate their applications to benefit human beings, society, and the world.

AI in information science

AI aims to provide machines with the abilities of perception, cognition, and decision-making. At present, new research and applications in information science are emerging at an unprecedented rate, which is inseparable from the support of the AI infrastructure. As shown in Figure 2 , the AI infrastructure layer includes data, storage and computing power, ML algorithms, and the AI framework. The perception layer enables machines to have the basic abilities of vision, hearing, etc. For instance, CV enables machines to “see” and identify objects, while speech recognition and synthesis help machines to “hear” and recognize speech elements. The cognitive layer provides higher-level abilities of induction, reasoning, and acquiring knowledge with the help of NLP, 6 knowledge graphs, 7 and continual learning. 8 In the decision-making layer, AI is capable of making optimal decisions, for example through automatic planning, expert systems, and decision-supporting systems. Numerous applications of AI have had a profound impact on fundamental sciences, industrial manufacturing, human life, social governance, and cyberspace. The following subsections provide an overview of the AI framework, automatic machine learning (AutoML) technology, and several state-of-the-art AI/ML applications in the information field.

Figure 2. The knowledge graph of the AI framework

The AI framework provides basic tools for AI algorithm implementation

In the past 10 years, applications based on AI algorithms have played a significant role in various fields and subjects, and the prosperity of DL frameworks and platforms has been founded on this basis. AI frameworks and platforms lower the barrier to accessing AI technology by integrating the overall process of algorithm development. This enables researchers from different areas to apply AI in their own fields and to focus on designing the structure of neural networks, thus providing better solutions to their problems. At the beginning of the 21st century, only a few tools, such as MATLAB, OpenNN, and Torch, were capable of describing and developing neural networks. However, these tools were not originally designed for AI models, and thus faced problems such as a complicated user API and a lack of GPU support. During this period, using these frameworks demanded professional computer science knowledge and tedious work on model construction. As a solution, early DL frameworks, such as Caffe, Chainer, and Theano, emerged, allowing users to conveniently construct complex deep neural networks (DNNs), such as convolutional neural networks (CNNs), recurrent neural networks (RNNs), and long short-term memory (LSTM) networks, and this significantly reduced the cost of applying AI models. Tech giants then joined the march in researching AI frameworks. 9 Google developed the famous open-source framework, TensorFlow, while Facebook's AI research team released another popular platform, PyTorch, which is based on Torch; Microsoft Research published CNTK, and Amazon announced MXNet. Among them, TensorFlow, the most representative framework, followed Theano's declarative programming style, which offers more room for graph-based optimization, while PyTorch inherited the imperative programming style of Torch, which is intuitive, user friendly, more flexible, and easier to trace. 
As modern AI frameworks and platforms are being widely applied, practitioners can now assemble models swiftly and conveniently by adopting various building block sets and languages specifically suitable for given fields. Polished over time, these platforms gradually developed a clearly defined user API, the ability for multi-GPU training and distributed training, as well as a variety of model zoos and tool kits for specific tasks. 10 Looking forward, there are a few trends that may become the mainstream of next-generation framework development. (1) Capability of super-scale model training. With the emergence of models derived from the Transformer, such as BERT and GPT-3, the ability to train large models has become a desirable feature of DL frameworks. It requires AI frameworks to train effectively at the scale of hundreds or even thousands of devices. (2) Unified API standard. The APIs of many frameworks are generally similar but differ slightly at certain points. This creates difficulties and unnecessary learning effort when a user attempts to shift from one framework to another. The API of some frameworks, such as JAX, has already become compatible with the NumPy standard, which is familiar to most practitioners. Therefore, a unified API standard for AI frameworks may gradually come into being in the future. (3) Universal operator optimization. At present, kernels of DL operators are implemented either manually or based on third-party libraries. Most third-party libraries are developed to suit certain hardware platforms, causing large unnecessary costs when models are trained or deployed on different hardware platforms. The development speed of new DL algorithms is usually much faster than the update rate of libraries, which often puts new algorithms beyond the range of the libraries' support. 11
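
The contrast between the declarative (graph-based) and imperative programming styles described above can be illustrated with a minimal pure-Python sketch. It deliberately does not use the TensorFlow or PyTorch APIs: the names `Node`, `placeholder`, and `run` are hypothetical and exist only for this illustration.

```python
# Toy illustration of two programming styles; this is NOT TensorFlow or PyTorch code.
# Node, placeholder, and run are hypothetical names invented for this sketch.

class Node:
    """A node in a deferred computation graph (declarative style)."""

    def __init__(self, op, inputs=(), name=None):
        self.op = op          # "input", "add", or "mul"
        self.inputs = inputs  # parent nodes
        self.name = name      # only used by input nodes

    def __add__(self, other):
        return Node("add", (self, other))

    def __mul__(self, other):
        return Node("mul", (self, other))


def placeholder(name):
    """Declare an input whose value is supplied later."""
    return Node("input", name=name)


def run(node, feed):
    """Evaluate a graph node given a dict of input values."""
    if node.op == "input":
        return feed[node.name]
    left, right = (run(parent, feed) for parent in node.inputs)
    return left + right if node.op == "add" else left * right


# Declarative: first describe the whole computation, then execute it.
x, w, b = placeholder("x"), placeholder("w"), placeholder("b")
graph = x * w + b  # nothing is computed yet; the full graph is available for optimization
declarative_result = run(graph, {"x": 3.0, "w": 2.0, "b": 1.0})


# Imperative: each statement executes immediately, so intermediate
# values can be inspected with ordinary debugging tools.
def imperative(x, w, b):
    product = x * w
    return product + b


imperative_result = imperative(3.0, 2.0, 1.0)
```

Both styles compute the same value. The difference is that the declarative version exposes the whole expression before execution, whereas the imperative version is easier to step through line by line.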

To improve the execution speed of AI algorithms, much research focuses on how to use hardware for acceleration. The DianNao family is one of the earliest research innovations on AI hardware accelerators. 12 It includes DianNao, DaDianNao, ShiDianNao, and PuDianNao, which can be used to accelerate the inference speed of neural networks and other ML algorithms. Of these, a 64-chip DaDianNao system can at best achieve a speedup of 450.65× over a GPU and reduce energy consumption by 150.31×. Prof. Chen and his team in the Institute of Computing Technology also designed an Instruction Set Architecture for a broad range of neural network accelerators, called Cambricon, which developed into a series of DL accelerators. After Cambricon, many AI-related companies, such as Apple, Google, HUAWEI, etc., developed their own DL accelerators, and AI accelerators became an important research field of AI.

AI for AI—AutoML

AutoML aims to study how to use evolutionary computing, reinforcement learning (RL), and other AI algorithms to automatically generate specified AI algorithms. Research on the automatic generation of neural networks existed before the emergence of DL, e.g., neural evolution. 13 The main purpose of neural evolution is to allow neural networks to evolve according to the principle of survival of the fittest in the biological world. Through selection, crossover, mutation, and other evolutionary operators, the individual quality in a population is continuously improved and, finally, the individual with the greatest fitness represents the best neural network. The biological inspiration in this field lies in the evolutionary process of human brain neurons. The highly developed learning and memory functions of the human brain depend on its complex neural network system, and that system is the product of a long evolutionary process rather than of gradient descent and back propagation. In the era of DL, the application of AI algorithms to automatically generate DNNs has attracted more attention and has gradually developed into an important direction of AutoML research: neural architecture search. The implementation methods of neural architecture search are usually divided into RL-based methods and evolutionary algorithm-based methods. In the RL-based method, an RNN is used as a controller to generate a neural network structure layer by layer; the generated network is then trained, and its accuracy on the validation set is used as the reward signal for the RNN to calculate the policy gradient. During the iteration, the controller assigns higher probability to neural networks with higher accuracy, so that the policy function tends to output the optimal network structure. 14
The method of neural architecture search through evolution is similar to the neural evolution method: it is based on a population and iterates continuously according to the principle of survival of the fittest, so as to obtain a high-quality neural network. 15 Through the application of neural architecture search technology, the design of neural networks has become more efficient and automated, and the accuracy of the resulting networks has gradually come to exceed that of networks designed by AI experts. For example, Google's SOTA network EfficientNet was built on a baseline network obtained through neural architecture search. 16
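
The selection, crossover, and mutation loop described above can be sketched in a few lines of Python. This is a toy illustration under strong assumptions, not a real neural architecture search: the genome is simply a list of layer widths, and `fitness` is a hypothetical stand-in for the validation accuracy that a real search would obtain by training each candidate network. `WIDTH_CHOICES` and `TARGET` are likewise invented for the example.

```python
import random

WIDTH_CHOICES = [8, 16, 32, 64, 128]  # hypothetical per-layer width options
TARGET = [32, 64, 32]                 # hypothetical "ideal" architecture


def fitness(genome):
    """Stand-in for validation accuracy: higher is better, 0 is the best score.

    A real search would train the network encoded by `genome` and
    return its accuracy on held-out data.
    """
    return -sum(abs(g - t) for g, t in zip(genome, TARGET))


def crossover(parent_a, parent_b, rng):
    """Single-point crossover of two parent genomes."""
    point = rng.randrange(1, len(parent_a))
    return parent_a[:point] + parent_b[point:]


def mutate(genome, rng, rate=0.2):
    """Resample each gene with probability `rate`."""
    return [rng.choice(WIDTH_CHOICES) if rng.random() < rate else g for g in genome]


def evolve(pop_size=20, generations=30, seed=0):
    rng = random.Random(seed)
    population = [[rng.choice(WIDTH_CHOICES) for _ in TARGET] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Selection: keep the fitter half of the population (survival of the fittest).
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        history.append(fitness(survivors[0]))
        # Reproduction: refill the population with mutated offspring of survivors.
        children = []
        while len(survivors) + len(children) < pop_size:
            parent_a, parent_b = rng.sample(survivors, 2)
            children.append(mutate(crossover(parent_a, parent_b, rng), rng))
        population = survivors + children
    best = max(population, key=fitness)
    return best, history


best_genome, best_per_generation = evolve()
```

Because the survivors are carried over unchanged, the best fitness recorded in `best_per_generation` never decreases from one generation to the next. In a real search the expensive step is the fitness evaluation, which is why practical systems invest heavily in reducing the cost of training each candidate.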

AI enabling networking design adaptive to complex network conditions

The application of DL in the networking field has received strong interest. Network design often relies on initial network conditions and/or theoretical assumptions to characterize real network environments. However, traditional network modeling and design, governed by mathematical models, are unlikely to cope with complex scenarios involving many imperfect and highly dynamic network environments. Integrating DL into network research allows for a better representation of complex network environments. Furthermore, DL can be combined with the Markov decision process to form the deep reinforcement learning (DRL) model, which finds an optimal policy based on the reward function and the states of the system. Taken together, these techniques can be used to make better decisions to guide proper network design, thereby improving the network quality of service and quality of experience. Across the different layers of the network protocol stack, DL/DRL can be adopted for network feature extraction, decision-making, etc. In the physical layer, DL can be used for interference alignment. It can also be used to classify modulation modes, design efficient network coding 17 and error correction codes, etc. In the data link layer, DL can be used for resource (such as channel) allocation, medium access control, traffic prediction, 18 link quality evaluation, and so on. In the network (routing) layer, DL-based routing establishment and routing optimization 19 can help to obtain an optimal routing path. In higher layers (such as the application layer), DL can be used for enhanced data compression and task allocation. Beyond the protocol stack, one critical application area of DL is network security. DL can be used to classify packets into benign and malicious types, and it can be integrated with other ML schemes, such as unsupervised clustering, to achieve better anomaly detection.
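
The idea of learning a routing policy from a reward signal can be illustrated with a small sketch. As a simplification, it uses tabular Q-learning rather than a deep network, so it is reinforcement learning over a Markov decision process without the "deep" part. The four-node topology in `LINKS`, its delay values, and the function names are hypothetical.

```python
import random

# Hypothetical network: LINKS[node][next_hop] = link delay (arbitrary units).
LINKS = {
    "A": {"B": 1.0, "C": 5.0},
    "B": {"C": 1.0, "D": 6.0},
    "C": {"D": 1.0},
    "D": {},
}
DESTINATION = "D"


def train(episodes=2000, alpha=0.5, gamma=1.0, seed=0):
    """Learn Q(state, next_hop) where the reward is the negative link delay."""
    rng = random.Random(seed)
    q = {node: {hop: 0.0 for hop in hops} for node, hops in LINKS.items()}
    for _ in range(episodes):
        state = "A"
        while state != DESTINATION:
            # Uniformly random exploration; Q-learning is off-policy,
            # so the learned values still describe the greedy policy.
            action = rng.choice(list(LINKS[state]))
            reward = -LINKS[state][action]
            future = max(q[action].values(), default=0.0)
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = action
    return q


def greedy_route(q, start="A"):
    """Follow the highest-valued next hop until the destination is reached."""
    route = [start]
    while route[-1] != DESTINATION:
        hops = q[route[-1]]
        route.append(max(hops, key=hops.get))
    return route


q_table = train()
route = greedy_route(q_table)
```

In this toy topology the lowest-delay path is A, B, C, D with a total delay of 3, and the learned greedy policy follows it. A DRL approach would replace the table `q_table` with a neural network so that the method scales to state spaces too large to enumerate.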

AI enabling more powerful and intelligent nanophotonics

Nanophotonic components have recently revolutionized the field of optics via metamaterials/metasurfaces by enabling the arbitrary manipulation of light-matter interactions with subwavelength meta-atoms or meta-molecules. 20 , 21 , 22 The conventional design of such components generally involves forward modeling, i.e., solving Maxwell's equations based on empirical and intuitive nanostructures to find the corresponding optical properties, as well as the inverse design of nanophotonic devices given an on-demand optical response. The trans-dimensional feature of macro-optical components consisting of complex nano-antennas makes the design process very time consuming, computationally expensive, and even numerically prohibitive as device size and complexity increase. DL is an efficient and automatic platform, enabling novel efficient approaches to designing nanophotonic devices with high-performance and versatile functions. Here, we briefly present the recent progress of DL-based nanophotonics and its wide-ranging applications. DL was first exploited for forward modeling using a DNN. 23 The transmission or reflection coefficients can be well predicted after training on huge datasets. To improve the prediction accuracy of DNNs in the case of small datasets, transfer learning was introduced to migrate knowledge between different physical scenarios, which greatly reduced the relative error. Furthermore, a CNN and an RNN were developed for the prediction of optical properties from arbitrary structures using images. 24 The CNN-RNN combination successfully predicted the absorption spectra from the given input structural images. In the inverse design of nanophotonic devices, there are three different paradigms of DL methods, i.e., supervised learning, unsupervised learning, and RL. 25 Supervised learning has been utilized to design structural parameters for pre-defined geometries, for example with tandem DNNs and bidirectional DNNs. 
Unsupervised learning methods learn by themselves without a specific target, and are thus better suited than supervised learning to discovering new and arbitrary patterns 26 in completely new data. A generative adversarial network (GAN)-based approach, combining conditional GANs and Wasserstein GANs, was proposed to design freeform all-dielectric multifunctional metasurfaces. RL, especially double-deep Q-learning, powers the inverse design of high-performance nanophotonic devices. 27 DL has endowed nanophotonic devices with better performance and more emerging applications. 28 , 29 For instance, an intelligent microwave cloak driven by DL exhibits a millisecond, self-adaptive response to an ever-changing incident wave and background. 28 Another example is a DL-augmented infrared nanoplasmonic metasurface developed for monitoring the dynamics between four major classes of bio-molecules, which could impact the fields of biology, bioanalytics, and pharmacology, from fundamental research to disease diagnostics to drug development. 29 The potential of DL in the wide arena of nanophotonics has been unfolding. Even end-users without an optics and photonics background could exploit DL as a black box toolkit to design powerful optical devices. Nevertheless, how to interpret/mediate the intermediate DL process and how to determine the most dominant factors in the search for optimal solutions are worthy of in-depth investigation. We optimistically envisage that advancements in DL algorithms and computation/optimization infrastructures will enable us to realize more efficient and reliable training approaches, more complex nanostructures with unprecedented shapes and sizes, and more intelligent and reconfigurable optic/optoelectronic systems.

AI in other fields of information science

We believe that AI has great potential in the following directions:

- AI-based risk control and management in utilities can prevent costly or hazardous equipment failures by using sensors that detect and send information regarding the machine's health to the manufacturer, predicting possible issues so as to ensure timely maintenance or automated shutdown.

- AI could be used to produce simulations of real-world objects, called digital twins. When applied to the field of engineering, digital twins allow engineers and technicians to analyze the performance of equipment virtually, thus avoiding the safety and budget issues associated with traditional testing methods.

- Combined with AI, intelligent robots are playing an important role in industry and human life. Unlike traditional robots, which work according to procedures specified by humans, intelligent robots have the ability of perception, recognition, and even automatic planning and decision-making, based on changes in environmental conditions.

- AI of things (AIoT), or AI-empowered IoT applications, 30 have become a promising development trend. AI can empower the connected IoT devices, embedded in various physical infrastructures, to perceive, recognize, learn, and act. For instance, smart cities constantly collect data regarding quality-of-life factors, such as the status of power supply, public transportation, air pollution, and water use, to manage and optimize systems in cities. Because these data, especially personal data, are collected from informed or uninformed participants, data security and privacy 31 require protection.

AI in mathematics

Mathematics always plays a crucial and indispensable role in AI. Decades ago, quite a few classical AI-related approaches, such as k-nearest neighbor, 32 support vector machine, 33 and AdaBoost, 34 were proposed and developed after their rigorous mathematical formulations had been established. In recent years, with the rapid development of DL, 35 AI has been gaining more and more attention in the mathematical community. Equipped with the Markov process, minimax optimization, and Bayesian statistics, RL, 36 GANs, 37 and Bayesian learning 38 became the most favorable tools in many AI applications. Nevertheless, there still exist plenty of open problems in mathematics for ML, including the interpretability of neural networks, the optimization problems of parameter estimation, and the generalization ability of learning models. In the rest of this section, we discuss these three questions in turn.

The interpretability of neural networks

From a mathematical perspective, ML usually constructs nonlinear models, with neural networks as a typical case, to approximate certain functions. The well-known Universal Approximation Theorem states that, under very mild conditions, any continuous function can be uniformly approximated on compact domains by neural networks, 39 which plays a vital role in the interpretability of neural networks. However, in real applications, ML models seem to admit accurate approximations of many extremely complicated functions, sometimes even black boxes, which are far beyond the scope of continuous functions. To understand the effectiveness of ML models, many researchers have investigated the function spaces that can be well approximated by them, and the corresponding quantitative measures. This issue is closely related to classical approximation theory, but the approximation scheme is distinct. For example, Bach 40 finds that the random feature model is naturally associated with the corresponding reproducing kernel Hilbert space. In the same way, the Barron space is identified as the natural function space associated with two-layer neural networks, and the approximation error is measured using the Barron norm. 41 The corresponding quantities for residual networks (ResNets) are defined on the flow-induced spaces. For multi-layer networks, the natural function spaces for the purposes of approximation theory are the tree-like function spaces introduced in Wojtowytsch. 42 Several works also reveal the relationship between neural networks and numerical algorithms for solving partial differential equations. For example, He and Xu 43 discovered that CNNs for image classification have a strong connection with multi-grid (MG) methods. In fact, the pooling operation and feature extraction in CNNs correspond directly to the restriction operation and iterative smoothers in MG, respectively. Hence, various convolution and pooling operations used in CNNs can be better understood.
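The correspondence between CNN operations and MG components can be made concrete in one dimension. The sketch below is an illustrative analogy only, not the construction of He and Xu: it assumes the model problem -u'' = f with zero boundary values, and the function names (conv1d, jacobi_smooth, restrict_full_weighting) are hypothetical. It shows that a weighted-Jacobi smoothing sweep acts on the error as a fixed convolution kernel, and that full-weighting restriction is a stride-2 convolution, that is, a weighted pooling.

```python
def conv1d(signal, kernel, stride=1, offset=0):
    """Zero-padded 1D convolution evaluated at centers offset, offset+stride, ..."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(signal) + [0.0] * pad
    out = []
    for center in range(offset, len(signal), stride):
        window = padded[center:center + len(kernel)]
        out.append(sum(k * x for k, x in zip(kernel, window)))
    return out


def jacobi_smooth(error, omega=2.0 / 3.0):
    """One weighted-Jacobi sweep acting on the error (zero boundary values).

    For the stencil (-1, 2, -1)/h^2 the sweep reduces to a convolution of the
    error with the kernel [omega/2, 1 - omega, omega/2].
    """
    kernel = [omega / 2.0, 1.0 - omega, omega / 2.0]
    return conv1d(error, kernel)


def restrict_full_weighting(fine):
    """Full-weighting restriction: a stride-2 convolution (weighted pooling)."""
    return conv1d(fine, [0.25, 0.5, 0.25], stride=2, offset=1)


# Highest-frequency error mode on a fine grid with 7 interior points.
rough = [(-1.0) ** i for i in range(7)]
smoothed = jacobi_smooth(rough)

# Restricting a constant fine-grid vector to the 3 coarse-grid points.
coarse = restrict_full_weighting([1.0] * 7)
```

In this toy setting the smoother damps the oscillatory mode to at most one third of its amplitude in a single sweep, while the restriction halves the resolution, which mirrors the roles of feature extraction and pooling described above.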

The optimization problems of parameter estimation

In general, the optimization problem of estimating the parameters of DNNs is in practice highly nonconvex and often nonsmooth. Can global minimizers be expected? What is the landscape of local minimizers? How does one handle the nonsmoothness? All these questions are nontrivial from an optimization perspective. Nevertheless, numerous works and experiments demonstrate that the optimization for parameter estimation in DL is itself a much nicer problem than once thought; see, e.g., Goodfellow et al. 44 As a consequence, the study of the solution landscape ( Figure 3 ), also known as the loss surface of neural networks, is no longer considered inaccessible and can even in turn provide guidance for global optimization. Interested readers can refer to the survey paper (Sun et al. 45 ) for recent progress in this aspect.
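The questions about local and global minimizers can be illustrated with a one-parameter toy problem. The sketch below is a minimal example under stated assumptions: the quartic loss, the step size, and the function names are all hypothetical choices made for illustration, and the example says nothing about real DNN loss surfaces. It shows plain gradient descent converging to different minimizers depending on the starting point.

```python
def loss(w):
    """A toy nonconvex loss with one global and one non-global local minimizer."""
    return w**4 - 3.0 * w**2 + w


def grad(w):
    """Exact derivative of the toy loss."""
    return 4.0 * w**3 - 6.0 * w + 1.0


def gradient_descent(w0, lr=0.01, steps=2000):
    """Plain gradient descent from the initial point w0."""
    w = w0
    for _ in range(steps):
        w -= lr * grad(w)
    return w


w_left = gradient_descent(-2.0)   # converges to the global minimizer (w < 0)
w_right = gradient_descent(2.0)   # converges to a local minimizer (w > 0)
```

Both runs end at stationary points, but only the run started on the left reaches the lower loss value, which is why the structure of the landscape matters for where a gradient method ends up.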

Recent studies indicate that nonsmooth activation functions, e.g., rectified linear units, are better than smooth ones in finding sparse solutions. However, the chain rule does not hold when the activation functions are nonsmooth, which makes the widely used stochastic gradient (SG)-based approaches infeasible in theory. Taking approximate gradients at nonsmooth iterates is a common remedy that keeps SG-type methods in extensive use, but numerical evidence has also exposed the limitations of this remedy. In addition, the penalty-based approaches proposed by Cui et al. 46 and Liu et al. 47 provide a new direction for solving nonsmooth optimization problems efficiently.
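A small example shows why the chain rule cannot be applied blindly at a nonsmooth point. The sketch below is an illustration under stated assumptions: the test function f(x) = relu(x) - relu(-x), which equals x everywhere, and the names relu_grad and formal_chain_rule_grad are hypothetical, and the value assigned to the ReLU "derivative" at zero is a convention passed in as a parameter.

```python
def relu(z):
    """Rectified linear unit."""
    return max(z, 0.0)


def relu_grad(z, at_kink=0.0):
    """Derivative of ReLU away from 0; at z == 0 return the chosen convention."""
    if z > 0:
        return 1.0
    if z < 0:
        return 0.0
    return at_kink


def f(x):
    """f(x) = relu(x) - relu(-x), which is identically x, so f'(x) = 1."""
    return relu(x) - relu(-x)


def formal_chain_rule_grad(x, at_kink=0.0):
    """Apply the chain rule term by term, as automatic differentiation would."""
    # d/dx relu(x) = relu'(x) and d/dx [-relu(-x)] = relu'(-x)
    return relu_grad(x, at_kink) + relu_grad(-x, at_kink)


away_from_kink = formal_chain_rule_grad(1.0)            # matches f'(1) = 1
at_kink_zero = formal_chain_rule_grad(0.0, at_kink=0.0)  # differs from f'(0) = 1
at_kink_one = formal_chain_rule_grad(0.0, at_kink=1.0)   # also differs from f'(0)
```

Away from zero the formal computation is exact, but at x = 0 the result depends on the convention chosen at the kink and need not equal the true derivative, which is the kind of gap that approximate gradients at nonsmooth iterates paper over.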

The generalization ability of learning models

A small training error does not always lead to a small test error. The gap between the two reflects the generalization ability of the learning model. A key finding in statistical learning theory states that the generalization error is bounded by a quantity that grows with the model capacity but shrinks as the number of training examples increases. 48 A common conjecture relating generalization to the solution landscape is that flat and wide minima generalize better than sharp ones. Thus, regularization techniques, including the dropout approach, 49 have emerged to force the algorithms to bypass sharp minima. However, the mechanism behind this has not been fully explored. Recently, some researchers have focused on the ResNet-type architecture, with dropout inserted after the last convolutional layer of each building block. They thus managed to explain the stochastic dropout training process and the ensuing dropout regularization effect from the perspective of optimal control. 50
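The capacity-versus-sample-size trade-off mentioned above can be written down explicitly. One standard form, given here as an illustration and not necessarily the exact statement of reference 48, is the Rademacher-complexity bound. The notation is assumed for this sketch: F is the hypothesis class, the loss takes values in [0, 1], R(f) is the expected (test) risk, \hat{R}_n(f) is the empirical (training) risk on n i.i.d. samples, and \mathfrak{R}_n is the Rademacher complexity of the induced loss class.

```latex
% With probability at least 1 - \delta over the draw of the n training samples,
% simultaneously for all f \in \mathcal{F}:
\[
  R(f) \;\le\; \hat{R}_n(f)
  \;+\; 2\,\mathfrak{R}_n(\ell \circ \mathcal{F})
  \;+\; \sqrt{\frac{\log(1/\delta)}{2n}} .
\]
```

The middle term grows with the richness of the model class, while the last term shrinks at rate n^{-1/2}, which matches the qualitative behavior described in the text.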

AI in medical science

AI technology is becoming increasingly significant in daily operations, including in medical fields. With patients' growing healthcare needs, hospitals are evolving from informatization and networking to the Internet Hospital and eventually to the Smart Hospital. At the same time, AI tools and hardware performance are improving rapidly. Eventually, common AI techniques, such as CV, NLP, and data mining, will be embedded in medical equipment on the market ( Figure 4 ).

AI doctor based on electronic medical records

Any discussion of AI for medical history data must mention Doctor Watson, developed on IBM's Watson platform, and Modernizing Medicine, which targets oncology and has been adopted by CVS & Walgreens in the US and by various medical organizations in China. Doctor Watson takes advantage of the NLP performance of the IBM Watson platform, which has already collected vast amounts of medical history data as well as prior knowledge from the literature for reference. Given a patient's case, Doctor Watson searches the medical history reserve and forms elementary treatment proposals, which are then ranked using the prior knowledge reserve. Using the multiple models it stores, Doctor Watson gives a final proposal together with its confidence in that proposal. However, problems remain for such AI doctors: 51 because they rely on prior experience from US hospitals, their proposals may not be suitable for other regions with different medical insurance policies. In addition, keeping the Watson platform current depends heavily on updating the knowledge reserve, which still requires manual work.

AI for public health: Outbreak detection and health QR code for COVID-19

AI can be used for public health purposes in many ways. One classical use is to detect disease outbreaks using search engine query data or social media data, as Google did for the prediction of influenza epidemics 52 and the Chinese Academy of Sciences did for modeling the COVID-19 outbreak through multi-source information fusion. 53 After the COVID-19 outbreak, a digital health Quick Response (QR) code system was developed in China, first to detect potential contact with confirmed COVID-19 cases and, second, to indicate a person's health status using mobile big data. 54 Different colors indicate different health statuses: green means healthy and cleared for daily life, orange means at risk and requiring quarantine, and red means a confirmed COVID-19 patient. The system is easy for the general public to use and has been adopted by many other countries. The health QR code has made great contributions to the worldwide prevention and control of the COVID-19 pandemic.

Biomarker discovery with AI

High-dimensional data, including multi-omics data, patient characteristics, medical laboratory test data, etc., are often used for generating various predictive or prognostic models through DL or statistical modeling methods. For instance, a COVID-19 severity evaluation model was built through ML using proteomic and metabolomic profiling data of sera 55 ; using integrated genetic, clinical, and demographic data, Taliaz et al. built an ML model to predict patient response to antidepressant medications 56 ; and prognostic models for multiple cancer types (such as liver cancer, lung cancer, breast cancer, gastric cancer, colorectal cancer, pancreatic cancer, prostate cancer, ovarian cancer, lymphoma, leukemia, sarcoma, melanoma, bladder cancer, renal cancer, thyroid cancer, head and neck cancer, etc.) were constructed from genomic data through DL or statistical methods, such as the least absolute shrinkage and selection operator (LASSO) combined with the Cox proportional hazards regression model. 57
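For readers unfamiliar with how LASSO is combined with Cox regression, the usual formulation is an L1-penalized partial likelihood. The block below is a generic textbook sketch, not the specific model of reference 57, and it assumes no tied event times. The notation is introduced here for illustration: x_i is the feature vector (for example, gene expression) of patient i, t_i the observed time, \delta_i the event indicator, R(t_i) the set of patients still at risk at time t_i, and \lambda \ge 0 the penalty strength.

```latex
\[
  \hat{\beta} \;=\; \arg\min_{\beta}
  \left\{
    -\sum_{i:\,\delta_i = 1}
      \left[
        x_i^{\top}\beta
        \;-\; \log \sum_{j \in R(t_i)} \exp\!\left(x_j^{\top}\beta\right)
      \right]
    \;+\; \lambda \,\lVert \beta \rVert_1
  \right\}
\]
```

The L1 penalty drives many coefficients exactly to zero, so the features with nonzero coefficients form a compact candidate biomarker signature, and x^{\top}\hat{\beta} serves as a risk score. Software implementations often rescale the likelihood term by the sample size, which changes only the effective value of \lambda.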

Image-based medical AI

Medical image AI is one of the most mature areas, as there are numerous models for classification, detection, and segmentation tasks in CV. In the clinical area, CV algorithms can also be used for computer-aided diagnosis and treatment with ECG, CT, eye fundus imaging, etc. As human doctors may become tired and prone to mistakes after viewing hundreds of images for diagnosis, AI doctors can outperform a human medical image reader because they handle repetitive work without fatigue. The first medical AI product approved by the FDA is IDx-DR, which uses an AI model to predict diabetic retinopathy. The smartphone app SkinVision can accurately detect melanomas. 58 It uses “fractal analysis” to identify moles and their surrounding skin, based on size, diameter, and many other parameters, and to detect abnormal growth trends. AI-ECG of LEPU Medical can automatically detect heart disease from ECG images. Lianying Medical takes advantage of its hardware equipment to produce real-time, high-definition, image-guided, all-round radiotherapy technology, which achieves precise treatment.

Wearable devices for surveillance and early warning

For wearable devices, AliveCor has developed an algorithm to automatically predict the presence of atrial fibrillation, which is an early warning sign of stroke and heart failure. The 23andMe company can also test saliva samples at a small cost and provide customers with information based on their genes, including who their ancestors were and which diseases they may be prone to later in life. It provides accurate health management solutions based on individual and family genetic data.

Over the next 20–30 years, we believe there are several directions for further research: (1) Causal inference for real-time in-hospital risk prediction. Clinical doctors usually require reasonable explanations for medical decisions, but current AI models are usually black box models. Causal inference will help doctors to explain certain AI decisions and even discover novel ground truths. (2) Devices, including wearable instruments, for multi-dimensional health monitoring. The multi-modality model is now a trend in AI research. With various devices to collect multi-modality data and a central processor to fuse all these data, the model can monitor the user's overall health condition in real time and give warnings more precisely. (3) Automatic discovery of clinical markers for diseases that are difficult to diagnose. Diseases such as ALS are still difficult for clinical doctors to diagnose because they lack any effective general marker. It may be possible for AI to discover common phenomena among these patients and find an effective marker for early diagnosis.

AI-aided drug discovery

We have now entered the era of precision medicine, and new targeted drugs are the cornerstones of precision therapy. However, over the past decades, it has taken an average of over one billion dollars and 10 years to bring a new drug to market. How to accelerate the drug discovery process and avoid late-stage failure is a key concern for all the big and fiercely competitive pharmaceutical companies. The emerging role of AI, including ML, DL, expert systems, and artificial neural networks (ANNs), has brought new insights and high efficiency to the drug discovery process. AI has been adopted in many aspects of drug discovery, including de novo molecule design, structure-based modeling for proteins and ligands, quantitative structure-activity relationship research, and druggable property judgments. DL-based AI applications demonstrate superior merits in addressing some challenging problems in drug discovery. Prediction of chemical synthesis routes and chemical process optimization are also valuable in accelerating new drug discovery, as well as in lowering production costs.

There has been notable progress in AI-aided drug discovery in recent years, for both new chemical entity discovery and the related business area. Based on DNNs, DeepMind built the AlphaFold platform to predict 3D protein structures, and it outperformed other algorithms. As an illustration of this achievement, AlphaFold accurately predicted 25 of 43 protein structures from scratch, without using previously built protein models. Accordingly, AlphaFold won the CASP13 protein-folding competition in December 2018. 59 Based on GANs and other ML methods, Insilico constructed a modular drug design platform, the GENTRL system. In September 2019, they reported the discovery of the first de novo active DDR1 kinase inhibitor developed by the GENTRL system. It took the team only 46 days to go from target selection to an active drug candidate using in vivo data. 60 Exscientia and Sumitomo Dainippon Pharma developed a new drug candidate, DSP-1181, for the treatment of obsessive-compulsive disorder on the Centaur Chemist AI platform. In January 2020, DSP-1181 started its phase I clinical trials, which means that the comprehensive exploration, from program initiation to phase I study, took less than 12 months. In contrast, comparable drug discovery usually takes 4–5 years with traditional methods.

How AI transforms medical practice: A case study of cervical cancer

As one of the most common malignant tumors in women, cervical cancer is a disease that has a clear cause and can be prevented, and even treated, if detected early. Conventionally, the screening strategy for cervical cancer mainly adopts the “three-step” model of “cervical cytology-colposcopy-histopathology.” 61 However, limited by the available testing methods, the efficiency of cervical cancer screening is not high. In addition, owing to limited expertise among doctors in some primary hospitals, patients cannot always be provided with the best diagnosis and treatment decisions. In recent years, with the advent of the era of computer science and big data, AI has gradually begun to extend and blend into various fields. In particular, AI has been widely used in a variety of cancers as a new tool for data mining. For cervical cancer, a clinical database with millions of medical records and pathological data has been built, and an AI medical tool set has been developed. 62 This tool set gives doctors the ability to train AI models rapidly and iteratively. In addition, a prognostic prediction model established by ML and a web-based prognostic result calculator have been developed, which can accurately predict the risk of postoperative recurrence and death in cervical cancer patients, and thereby better guide decision-making in postoperative adjuvant treatment. 63

AI in materials science