Open Access

Peer-reviewed

Research Article

Machine learning based approach to exam cheating detection

Roles Conceptualization, Data curation, Formal analysis, Investigation, Methodology, Software, Writing – original draft, Writing – review & editing

* E-mail: [email protected]

Affiliation Department of Electrical Engineering, Canadian University Dubai, Dubai, UAE

Roles Funding acquisition, Writing – review & editing

Affiliation Department of Mathematics and Statistics, American University of Sharjah, Sharjah, UAE

Roles Methodology, Writing – original draft

Affiliation Department of Communication and Media, Canadian University Dubai, Dubai, UAE

- Firuz Kamalov,

- Hana Sulieman,

- David Santandreu Calonge

- Published: August 4, 2021

- https://doi.org/10.1371/journal.pone.0254340


The COVID-19 pandemic has impelled the majority of schools and universities around the world to switch to remote teaching. One of the greatest challenges in online education is preserving the academic integrity of student assessments. The lack of direct supervision by instructors during final examinations poses a significant risk of academic misconduct. In this paper, we propose a new approach to detecting potential cases of cheating on the final exam using machine learning techniques. We treat the issue of identifying the potential cases of cheating as an outlier detection problem. We use students’ continuous assessment results to identify abnormal scores on the final exam. However, unlike a standard outlier detection task in machine learning, the student assessment data requires us to consider its sequential nature. We address this issue by applying recurrent neural networks together with anomaly detection algorithms. Numerical experiments on a range of datasets show that the proposed method achieves a remarkably high level of accuracy in detecting cases of cheating on the exam. We believe that the proposed method would be an effective tool for academics and administrators interested in preserving the academic integrity of course assessments.

Citation: Kamalov F, Sulieman H, Santandreu Calonge D (2021) Machine learning based approach to exam cheating detection. PLoS ONE 16(8): e0254340. https://doi.org/10.1371/journal.pone.0254340

Editor: Mohammed Saqr, KTH Royal Institute of Technology, SWEDEN

Received: June 20, 2020; Accepted: June 26, 2021; Published: August 4, 2021

Copyright: © 2021 Kamalov et al. This is an open access article distributed under the terms of the Creative Commons Attribution License , which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Data Availability: The data used in the experiments consists of simulated and real-life datasets. The description of the simulated data is provided in the text and the code to generate the simulations is uploaded on GitHub: https://github.com/group-automorphism/exam_cheating . The real-life data is described within the manuscript and also uploaded as a Supporting information file.

Funding: This work was supported in part by the American University of Sharjah. No additional external funding was received for this study.

Competing interests: The authors have declared that no competing interests exist.

1 Introduction

The COVID-19 pandemic has brought unprecedented challenges to the way schools and universities facilitate learning. Due to imposed quarantine measures, educational institutions around the world were left with no alternative other than to continue their course delivery in an online format. Although remote teaching has been adopted by many tertiary institutions, it suffers from several significant drawbacks. One of the main challenges is ensuring academic integrity. Students who take their exams at home are expected to work independently without use of any external help. However, in practice, a nontrivial portion of students attempt to circumvent the rules of academic integrity by, for instance, using digital cheating or contract cheating [ 1 , 2 ] i.e., remunerating a third-party to do the work on their behalf. Universities try to combat exam cheating with the use of remote proctoring, webcams, LockDown Browser (Respondus), plagiarism software (e.g., Turnitin, SafeAssign, iThenticate, to cite a few) and monitoring software during the exam. Nevertheless, it remains relatively effortless for a student to receive third-party aid during the exam, as indicated by a University of New South Wales report, which found that 139 science students had “hired ghost writers from the Chinese messaging site WeChat to complete their work” [ 3 ]. Our goal in this paper is to identify cases of cheating on exams based on a post-exam analysis of the scores to detect abnormal scores. The significance of our approach lies in the novel use of machine learning techniques to identify the anomalous grades on the exam.

Studies over the past two decades in various countries have provided important information on the prevalence of academic dishonesty in higher education [ 4 – 8 ]. Results of a study by Lanier [ 7 ] of 1,262 students taking courses in regular and distance learning formats indicated for instance that cheating was much more widespread in the online sessions. Chirumamilla et al. [ 5 ], in a survey of 212 students and 162 teachers in Norway, identified six most commonly used cheating practices: impersonation, forbidden aids, peeking, peer collaboration, outside assistance and student–staff collusion. In the Canadian context, Eaton [ 9 ] investigated cases of academic integrity violations in the higher education sector, covered by the media between 2010 and 2019. The report concluded that academic misconduct was a “large issue” and that deeper investigation into its extent was warranted.

There is a growing body of literature that recognizes post-exam grade analysis as one promising avenue for detecting exam violations [ 10 , 11 ]. Exam violations can be identified by comparing students’ continuous assessment grades to their grades on the final exam. Significant deviations from the expected results may be an indication of potential exam transgression. A sudden, unexpectedly high score on the final exam from an otherwise average student may raise red flags and be considered abnormal. However, the situation is not always as straightforward as it seems. If the final exam is relatively unchallenging and the majority of students receive high grades, any violation would be less observable. In addition, the course assessments are sequential, so the order of the scores matters. Accounting for this temporal order complicates the analysis considerably. One popular algorithm for analyzing ordered data is the recurrent neural network [ 12 ], which allows information to propagate through a sequence via parameter sharing.

In this study, we attempt to identify anomalous scores on the final exam using a combination of a recurrent neural network and an outlier detection method. The proposed method uses data consisting of student course assessments including quizzes, the midterm exam, and the final exam. Outlier detection methods are effective in identifying points in data that deviate from the general population and are often used in fraud detection. Recurrent neural networks are used to process sequential data and can be applied to chronologically ordered data such as course assessments. First, the data is processed by a recurrent neural network. Then, the output of the neural network is fed into an outlier detection method which determines the potential instances of cheating. The resulting algorithm provides a robust and efficient tool to identify potential cases of academic violation. We are optimistic that the proposed method would be a useful tool in the fight against exam fraud.

This study is crucial as educational institutions around the globe are looking into effective ways of preventing academic misconduct with the use of advanced analytics such as artificial intelligence. According to Senthil Nathan, managing director and co-founder of Edu Alliance Ltd, “Distance education has not been widely accredited in this region, mainly because of authentication issues” [ 13 ]. Recent developments in the field of remote learning have led to a renewed interest in detecting academic dishonesty in online environments, with the use of behavioral biometrics, learning analytics, data forensics [ 14 ] or data mining [ 15 ]. A search of the literature however revealed few studies (apart perhaps from [ 16 ]) which addressed this issue using anomaly detection methods. The experimental work presented here provides an alternative approach for identifying potential cases of exam dishonesty based on student scores using machine learning methods.

Despite the effectiveness of the proposed algorithm, the generalizability of these results is subject to certain limitations. It is certainly plausible and possible for a student to achieve an unusually high score through hard work and study. Therefore, any case identified as a potential violation requires further investigation by a human expert before a final decision is declared. Notwithstanding the limitations, this work offers valuable insights into final exam fraud detection and aims to contribute to this growing area of research.

Our paper is organized as follows. Section 2 provides an overview of existing literature on fraud detection and related topics. In Section 3, we present our methodology. We describe the details of the proposed approach to identify potential cases of exam violations. In Section 4, we carry out a range of numerical experiments to test the performance of our method. We conclude the paper with a closing summary and remarks in Section 5.

2 Literature

“Are Online Exams an Invitation to Cheat?” [ 17 ]. Academic dishonesty in Higher Education during online final exams is prevalent and far from being a new occurrence. A decade ago, Carnevale [ 18 ] had already argued that technology was “offering students new and easier ways to cheat” (para.1). The findings of a study by King, Guyette Jr., and Piotrowski [ 19 ] on the attitude and behavior of business students towards cheating in an exam administered online indicated that 73.6% of the respondents perceived that cheating online was an easy task (p.7). Since the switch to online examinations, as a consequence of the pandemic lockdown, there seems to be an unwelcome recrudescence of student academic misconduct cases [ 20 ]. In the U.S., Boston University reported for instance that students had “… used various means, including websites such as Chegg, to get help during the quizzes given remotely” [ 21 ].

Outlier detection is a well investigated aspect of data science [ 22 ]. Anomaly detection has been used successfully in many applications. For example, medical claims processing involves large volumes of data which necessitates the use of automated screening procedures. Thus, anomaly detection algorithms are well suited to identify potential fraudulent claims [ 23 , 24 ]. Similarly, financial data processing such as credit card transactions requires automatic means of anomaly detection [ 25 ]. In network security, anomaly detection is used to identify malicious signals in the network traffic [ 26 ]. Recently, investigators in education have examined outlier detection methods to efficiently identify students’ irregular learning processes [ 27 , 28 ], typing patterns [ 29 ], or cheating in Massive Open Online Courses [ 30 ].

Outlier detection methods can be divided into two groups: semi-supervised and unsupervised methods. In a semi-supervised outlier detection method, an initial dataset representing the population of negative (non-outlier) observations is available. A machine learning tool such as one-class SVM can be trained to obtain the boundary of the distribution of the initial observations. Then new observations are categorized according to their distance from the boundary. In unsupervised methods, the algorithm is trained without a clean initial dataset of negative observations. The majority of the algorithms fall under the unsupervised category. Unsupervised anomaly detection methods can be grouped into model, distance, and density-based approaches. An up-to-date review and analysis of the modern methods can be found in [ 31 ].

Model-based approaches are the simplest methods for outlier detection. They are based on the assumption that the normal data is generated according to some statistical distribution [ 32 , 33 ]. The distribution parameters such as the mean and standard deviation are calculated based on the sample data. More sophisticated approaches use kernel functions to estimate the underlying distribution of data [ 34 ]. Then the points with low probability are deemed to be outliers.

Distance-based approaches operate on the assumption that abnormal points are far from the main cluster of points. A popular distance method uses a heuristically-determined radius δ and percentage p. Then a point x is considered an outlier if at most p percent of all other points have a distance to x less than δ [ 35 ].

Density-based approaches compare the data density at the given point relative to the density at the neighboring points. Points with relatively low density are considered anomalous. One of the popular density methods is the local outlier factor (LOF) which computes the local density at a given point. The points with a lower local density compared to their neighbors are considered outliers [ 36 ]. A variant of the LOF was used in [ 37 ] to identify schools with unusual performance on standardized tests.

Because anomalous scores are relatively rare, the data suffers from an imbalanced class distribution. Traditional machine learning techniques are not well-equipped to handle imbalanced data [ 38 ]. There are a number of ways to deal with imbalanced data. A popular method called SMOTE achieves data balance by artificially adding new minority points to the original dataset prior to the training phase [ 39 ]. More recently, a new method based on the gamma distribution was proposed to generate new instances of minority points [ 40 ].

3 Methodology

In this section, we present our algorithm for detecting potential cases of cheating on the final exam. The inputs to the algorithm are sequences of grades—quizzes, midterm exam, the final exam—of an entire class, while the output is a collection of labels—one label per student—indicating whether each student cheated or not. The proposed method consists of two parts: regression and unsupervised outlier detection. First, a recurrent neural network model is trained to predict the final exam scores based on the previous assessment scores. Then an outlier detection model is applied to identify the instances where the difference between the actual and the predicted final exam scores is abnormal. Since the input data is unlabeled, the proposed method is an unsupervised algorithm.

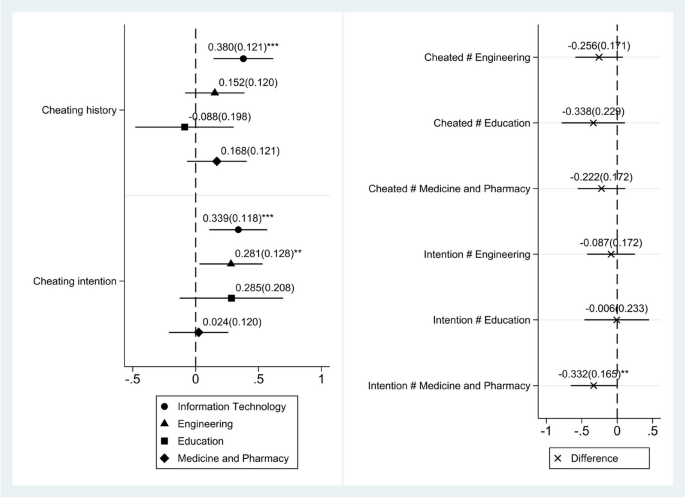

Identifying abnormal final exam scores is not a straightforward task. Several considerations need to be taken into account. First, whether a final exam score is anomalous depends on all the other scores in the class. An exam score that is anomalous for one class of students may be completely normal for another class. However, further experiments are required to better understand this issue. Second, the existing machine learning techniques must be tailored to the particular features of the problem. We wish to identify the anomalous scores by comparing the scores prior to the final exam to the score on the final exam. It stands to reason that a student with a large gap between the two scores is more likely to have cheated. Implementing this philosophy requires a custom solution. Third, the temporal nature of the assessments must be taken into account during the analysis. Quizzes, term exams, projects, and the final exam are taken in sequence. The order of the assessments contains crucial information. For example, the scores {79, 90, 70, 61, 50, 95} convey different information than the scores {50, 61, 70, 79, 90, 95}. The former sequence, in which declining scores are followed by a sudden jump, is abnormal, whereas the latter, a steady progression, is normal ( Fig 1 ).

The steady progression of grades of Student 2 makes a score of 95 on the final exam seem plausible. On the other hand, the pattern of grades for Student 1 makes a grade of 95 on the final exam highly unexpected.

https://doi.org/10.1371/journal.pone.0254340.g001
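The role of ordering in the two example sequences can be checked with a few lines of arithmetic. The sketch below is only an illustration: the straight-line extrapolation in `trend_gap` is an assumed stand-in for a sequence-aware model, not the LSTM regression used in the paper, and the function names are hypothetical.

```python
import numpy as np

# The two example sequences from the text: five prior assessments, then the final exam.
student_1 = np.array([79, 90, 70, 61, 50, 95])
student_2 = np.array([50, 61, 70, 79, 90, 95])

def naive_gap(scores):
    """Final exam score minus the mean of the prior assessments."""
    return scores[-1] - scores[:-1].mean()

def trend_gap(scores):
    """Final exam score minus a straight-line extrapolation of the prior scores.

    The linear fit is an illustrative assumption, not the paper's LSTM.
    """
    prior = scores[:-1]
    steps = np.arange(len(prior))
    slope, intercept = np.polyfit(steps, prior, deg=1)
    expected_final = slope * len(prior) + intercept
    return scores[-1] - expected_final

for name, scores in [("Student 1", student_1), ("Student 2", student_2)]:
    print(name, round(naive_gap(scores), 1), round(trend_gap(scores), 1))
```

Both students have the same prior mean (70), so the order-blind gap is 25 points for each. Once the trend is taken into account, Student 1's final exam score sits roughly 51 points above the extrapolated value, while Student 2's is about 4 points below it.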

Since the scores in different classes have their own particular characteristics the grades of each class must be analyzed individually. The need for individual analysis of each set of exam scores steers us in the direction of outlier detection methods. Traditional outlier detection algorithms are designed to function without any prior training. Each dataset is analyzed on its own and the abnormal instances are flagged by the algorithm. However, the standard outlier detection algorithms suffer from two crucial drawbacks. First, given an n -dimensional feature vector, unsupervised outlier detection methods do not always identify the important features. It is possible that one of the features is more relevant than the rest in identifying outliers. In our case, it is clear that the grade on the final exam is more relevant than, say, the grade on quiz 1. The traditional outlier detection methods do not provide an option of assigning different weights to features. This disadvantage applies to many machine learning algorithms including neural networks. Second, outlier detection methods do not take into account the temporal nature of the sequential data. The temporal information can significantly improve the learning outcomes of algorithms. Given the above deficiencies, outlier detection methods must be complemented with another method that takes into account the outlined considerations. One of the most effective modern machine learning approaches to handling sequential data is recurrent neural networks. These networks use the data from the previous steps to make predictions about the next step. Thus, our approach is based on two key ingredients: neural networks and anomaly detection.

3.1 Anomaly detection

https://doi.org/10.1371/journal.pone.0254340.g002

3.2 Long short-term memory

Recurrent neural networks (RNNs) and their subclass long short-term memory networks (LSTMs) are a natural choice to deal with sequential data. RNNs and LSTMs are designed to deal efficiently with sequence data by allowing previous outputs to be used as inputs. They have been used successfully in various applications including speech and text recognition as well as time series analysis such as daily stock price data [ 42 ]. We use LSTMs in the proposed algorithm. We refer the reader to [ 43 ] for the details of the LSTM architecture.

3.3 Algorithm

Our goal is to design an algorithm that accomplishes the following two fundamental tasks:

- Identify students whose final exam grade is unreasonably high relative to the rest of the class and their prior assessment scores.

- Take into account the sequential nature of the data under consideration.

We employ LSTMs to accomplish the second task. Concretely, we build a regression model using an LSTM network with the aim of predicting the final exam score based on the scores prior to the final exam. The input variables in our model include scores on quizzes, the midterm exam, projects, and other pre-final exam assessments. The output of the model is the score on the final exam. The model is trained to minimize the mean squared error of the predictions. The neural network architecture for predicting the final exam scores consists of an input layer, hidden layers, and an output layer. The size of the input layer depends on the dataset. In our numerical experiments, the input layer has size 5, which corresponds to four quiz scores and one midterm exam score. The output layer has size one, which corresponds to the final exam score. The student scores are processed by three hidden layers, whose weights are learned to minimize the prediction error. The first hidden layer is an LSTM layer and the other two layers are fully-connected layers. The neural network was constructed using Keras [ 44 ]. The details of the network layers are presented in Table 1 .
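A rough sketch of such a network is given below. It is not the authors' implementation: the layer widths, activations, optimizer, score rescaling, and the choice to feed the five prior scores as five time steps with one feature each are all assumptions made for illustration (the actual settings are those of Table 1). The helper names `to_sequences` and `build_model` are hypothetical.

```python
import numpy as np

def to_sequences(scores):
    """Split a (n_students, 6) score table into LSTM inputs and targets.

    Columns 0-4 are the four quizzes and the midterm in chronological order;
    column 5 is the final exam. Rescaling scores to [0, 1] is an assumption.
    """
    scores = np.asarray(scores, dtype="float32") / 100.0
    X = scores[:, :-1, np.newaxis]   # shape: (n_students, 5 time steps, 1 feature)
    y = scores[:, -1]                # shape: (n_students,)
    return X, y

def build_model(n_steps=5, units=16):
    """One LSTM layer, two fully-connected hidden layers, one output unit.

    Widths, activations, and optimizer are placeholder assumptions.
    """
    from tensorflow import keras  # imported lazily so the data prep runs without it
    model = keras.Sequential([
        keras.Input(shape=(n_steps, 1)),
        keras.layers.LSTM(units),
        keras.layers.Dense(units, activation="relu"),
        keras.layers.Dense(units, activation="relu"),
        keras.layers.Dense(1),
    ])
    model.compile(optimizer="adam", loss="mse")  # mean squared error, as in the text
    return model

# Two made-up students, for shape checking only.
scores = np.array([[70, 72, 68, 71, 69, 70],
                   [50, 61, 70, 79, 90, 95]])
X, y = to_sequences(scores)
```

With a full class of scores, one would call `build_model().fit(X, y, ...)` and then compare `model.predict(X)` with `y` to obtain the prediction errors.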

https://doi.org/10.1371/journal.pone.0254340.t001

The exam scores predicted by the LSTM regression model are compared to the actual exam scores. Next, we apply a KDE-based outlier detection method described in the preceding section to identify the potential cheating cases on the basis of the predicted and actual exam scores. We apply Scott’s rule to determine the bandwidth parameter for the KDE. To identify the outliers, we select 9% of the instances with the lowest probability based on the estimated density function. The contamination level was determined based on the fact that 10/110 of the instances in the data are outliers. In general, the contamination level is set by the expert based on the expected number of outliers within the data. The synopsis of the proposed detection procedure is given below.

- The input features ( X ) are the scores prior to the final exam, i.e. quizzes, the midterm exam, project, and others.

- The output ( y ) is the final exam score.

- Calculate the error between the scores predicted by the trained model and the actual exam scores. Apply a KDE-based outlier detection method on the set of errors to determine the abnormal scores.
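The final step of the synopsis can be sketched with a hand-written one-dimensional Gaussian KDE. This is a minimal illustration under stated assumptions, not the authors' code: the bandwidth uses the common one-dimensional form of Scott's rule, h = σ · n^(−1/5), the 9% share is rounded up to a whole number of students, and the error values are made up. The function names are hypothetical.

```python
import numpy as np

def kde_density(values):
    """Gaussian KDE evaluated at each sample, bandwidth from Scott's rule (1-D)."""
    values = np.asarray(values, dtype=float)
    n = values.size
    bandwidth = values.std(ddof=1) * n ** (-1 / 5)
    z = (values[:, None] - values[None, :]) / bandwidth
    kernel = np.exp(-0.5 * z ** 2) / np.sqrt(2 * np.pi)
    return kernel.sum(axis=1) / (n * bandwidth)

def flag_low_density(errors, contamination=0.09):
    """Flag the `contamination` share of errors with the lowest estimated density."""
    density = kde_density(errors)
    n_flag = int(np.ceil(contamination * len(errors)))
    flags = np.zeros(len(errors), dtype=bool)
    flags[np.argsort(density)[:n_flag]] = True
    return flags

# Made-up prediction errors (actual minus predicted final score) for 110 students.
errors = np.concatenate([np.linspace(-4, 4, 100),   # ordinary students
                         np.linspace(20, 38, 10)])  # unusually high finals
flags = flag_low_density(errors)
print(flags.sum(), flags[100:].all())
```

A density threshold is two-sided: it would also flag a final exam score far below the prediction. If only unexpectedly high scores are of interest, the flags can be combined with a check that the error is positive.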

4 Numerical experiments

In this section, we conduct numerical experiments to test the performance of the proposed algorithm against other benchmark methods. The experiments are carried out over a range of scenarios. The data used in the experiments consists of four synthetic and one real-life datasets. We employ a naive as well as more sophisticated anomaly detection strategies as benchmarks against the proposed method. To measure the efficacy of the algorithms, we compute the true positive and false positive rates of the classification results. The results of the experiments show that the proposed method outperforms the benchmark methods in the majority of the scenarios. The proposed algorithm achieves an average of 95% true positive rate and 2.5% false positive rate on the synthetic data. It achieves 100% true positive rate and 4% false positive rate on the real-life data. The results of the experiments are a strong indication that the proposed algorithm may be an effective tool in fraud identification.

4.1 Benchmark methods

The naive strategy employed in practice is to compare the mean of the scores prior to the final exam with the score on the final exam. If the final exam score is significantly higher than the mean score of the preceding assessments, then it would be a sign of a potential violation. It is a simple and intuitive approach commonly used by instructors to identify potential cases of fraud. Despite its simplicity, the naive strategy can be a quick and effective tool to detect abnormal scores on the final exam. As such, it is a good baseline strategy that we aim to beat. In addition to the baseline method, we benchmarked the proposed algorithm against the following standalone outlier detection techniques: robust covariance [ 33 ], isolation forest [ 45 ], and local outlier factor [ 36 ]. The benchmark methods are implemented in the popular scikit-learn machine learning library. We used the default parameter settings for the models with the exception of the contamination parameter, which was set to 0.09. Note that in the sklearn implementation, the default value of the contamination parameter is set to 0.1, i.e., 10% of the points are deemed outliers.
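The benchmarks might be set up along the following lines. This is a sketch under assumptions, not the authors' code: the text does not say how "significantly higher" is thresholded for the naive strategy, so `naive_flags` simply flags the top 9% of gaps; the function names and the toy class are hypothetical. The contamination value is passed explicitly to every scikit-learn detector because the library's defaults have differed between versions.

```python
import numpy as np

def naive_flags(scores, contamination=0.09):
    """Flag students whose final exceeds their prior mean by the largest margins."""
    scores = np.asarray(scores, dtype=float)
    gap = scores[:, -1] - scores[:, :-1].mean(axis=1)
    n_flag = int(np.ceil(contamination * len(scores)))
    flags = np.zeros(len(scores), dtype=bool)
    flags[np.argsort(gap)[-n_flag:]] = True
    return flags

def benchmark_flags(scores, contamination=0.09, seed=0):
    """Run the three scikit-learn detectors on the raw score vectors."""
    from sklearn.covariance import EllipticEnvelope
    from sklearn.ensemble import IsolationForest
    from sklearn.neighbors import LocalOutlierFactor
    detectors = {
        "RobustCov": EllipticEnvelope(contamination=contamination, random_state=seed),
        "IsoForest": IsolationForest(contamination=contamination, random_state=seed),
        "LOF": LocalOutlierFactor(contamination=contamination),
    }
    # fit_predict returns -1 for outliers and 1 for inliers.
    return {name: det.fit_predict(scores) == -1 for name, det in detectors.items()}

# Toy class: ten students with flat scores and one with a sudden jump on the final.
scores = np.full((11, 6), 70.0)
scores[10] = [60, 62, 58, 61, 59, 95]
print(naive_flags(scores))
```

On a realistically sized class, `benchmark_flags(scores)` returns one boolean mask per detector, which can be compared with the mask from `naive_flags`.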

It is important to note that in our data, a sequence of grades is labeled based on whether or not the student cheated on the final exam. This information is built into both our data generation and in our cheating detection method, but is wholly unavailable to the baseline methods. In other words, the baseline methods do not make the same assumption about the structure of the data that is available to the proposed method. Thus, the comparison is not entirely fair.

4.2 Datasets

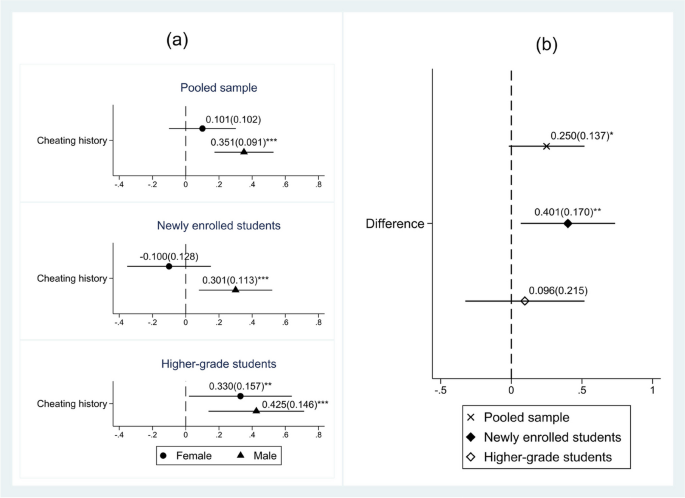

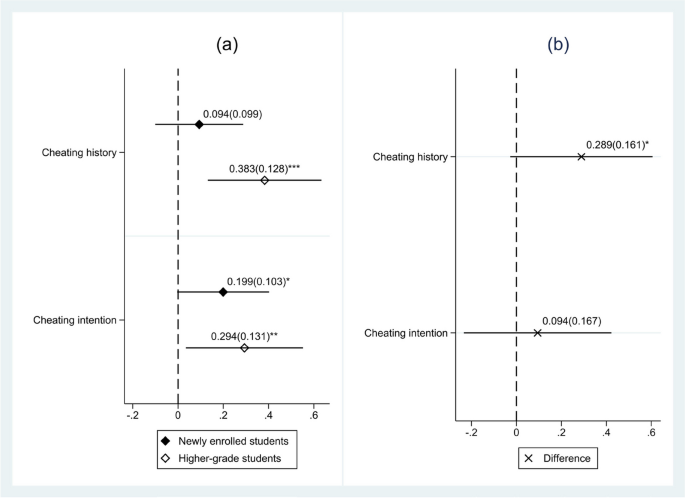

The experimental evaluation of our proposed approach includes four different synthetic datasets and one real-life dataset. The synthetic datasets are designed to simulate real-life scenarios of exam cheating. A representative sample of each synthetic dataset is shown in Fig 3 . Dataset 1 consists of 100 normal grades and 10 cheating cases ( Fig 3a ). The cheating cases are designed to be egregious with a 35-point difference between the average of the regular assessment scores and the final exam score. The normal grades are mostly homogeneous. Approximately 80 of the normal grades remain consistently within a 10-point range across all assessments including the final exam. The remaining 20 normal grades increase during the semester. The cheating cases in Dataset 1 are relatively easy to identify even with the naive strategy of comparing the average grade prior to the final exam to the score on the final exam. Dataset 2 is similar to Dataset 1. However, the cheating cases are more masked in Dataset 2 with only a 20-point difference between the average score prior to the final and the score on the final exam ( Fig 3b ). A smaller margin between the final exam and preceding scores makes it more difficult to discern the cases of cheating. As a result, the performance of the anomaly detection algorithms deteriorates. Dataset 3 is similar to Dataset 2. However, Dataset 3 contains normal grades that rise throughout the semester in such a way that the difference between the average score prior to the final exam and the final exam is the same as in the cheating cases. As shown in Fig 3c , the scores in maroon rise steadily, indicating a normal pattern. On the other hand, the scores in red increase abruptly, by a much larger amount, on the final exam. As mentioned, the difference between the average score prior to the final and the score on the final exam is similar in both instances. To distinguish between the former and the latter cases traditionally requires human judgment. Nevertheless, we show that the proposed method can automatically detect cheating cases even under such challenging conditions. The last dataset in our experiment illustrates the scenario of an easy final exam where all the grades increase relative to the regular assessments. The normal grades are simulated to increase by 10 points on the final exam over the average of the preceding scores. The cheating cases are designed to increase by 25 points on the final exam compared to the prior regular semester assessments ( Fig 3d ). Since all grades increase on the final exam, it becomes more challenging to identify cases of cheating. Further details about the synthetic datasets together with the code can be found on our public GitHub repository ( https://github.com/group-automorphism/exam_cheating ).

The datasets capture different scenarios for the distribution of the grades. (a) A representative sample of Dataset 1 grades. The dataset consists of 91% normal and 9% anomalous grades. The normal grades consist of three quarters homogeneous grades and one quarter increasing grades. The anomalous grades rise sharply (by 35 points) on the final exam. (b) A representative sample of Dataset 2 grades. The dataset is similar to Dataset 1. However, the anomalous grades rise less sharply (by 20 points) on the final exam. As a result, the outliers are harder to identify. (c) A representative sample of Dataset 3 grades. The dataset is similar to Dataset 2. However, around 10% of the normal grades increase at an incremental pace so that the difference between the average prior score and the final exam score is the same as in the anomalous instances. As a result, it is even more challenging to identify the outlier scores. (d) A representative sample of Dataset 4 grades. The dataset is designed to simulate a scenario in which the final exam is easy and everyone receives a relatively high grade. The normal final exam scores are 10 points higher than the average on prior assessments. The anomalous final exam scores are 25 points higher than the average of the preceding scores.

https://doi.org/10.1371/journal.pone.0254340.g003
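For orientation, a dataset with the composition described for Dataset 1 could be simulated roughly as follows. This is an independent sketch, not the generator in the authors' repository: the score ranges, noise levels, and rate of increase are invented for illustration, and only the group sizes (about 80 flat, 20 rising, 10 cheating) and the 35-point gap are taken from the text.

```python
import numpy as np

def simulate_dataset_1(n_flat=80, n_rising=20, n_cheat=10, gap=35, seed=0):
    """Return (scores, labels): six assessments per student, True marks cheating."""
    rng = np.random.default_rng(seed)
    # Flat students: all six scores stay within a 10-point band around a base level.
    base = rng.uniform(55, 90, size=(n_flat, 1))
    flat = base + rng.uniform(-5, 5, size=(n_flat, 6))
    # Rising students: scores climb steadily across the six assessments.
    start = rng.uniform(50, 65, size=(n_rising, 1))
    rising = start + np.arange(6) * 5 + rng.uniform(-2, 2, size=(n_rising, 6))
    # Cheating cases: flat prior scores, final set `gap` points above their mean.
    low = rng.uniform(45, 60, size=(n_cheat, 1))
    cheat = low + rng.uniform(-5, 5, size=(n_cheat, 6))
    cheat[:, -1] = cheat[:, :-1].mean(axis=1) + gap
    scores = np.vstack([flat, rising, cheat])
    labels = np.r_[np.zeros(n_flat + n_rising, dtype=bool),
                   np.ones(n_cheat, dtype=bool)]
    return scores, labels

scores, labels = simulate_dataset_1()
print(scores.shape, labels.sum())
```

Changing `gap` to 20 gives a rough analogue of the harder Dataset 2 setting under the same assumptions.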

In addition to the synthetic data, we also employ one real-life dataset in our experiments. Although we would have liked to use more real-life data it is difficult to obtain due to a number of reasons. Our dataset consists of 52 observations of which 3 are positive cases. Each observation includes scores from 4 quizzes, a midterm exam, and the final exam.

4.3 Results and discussion

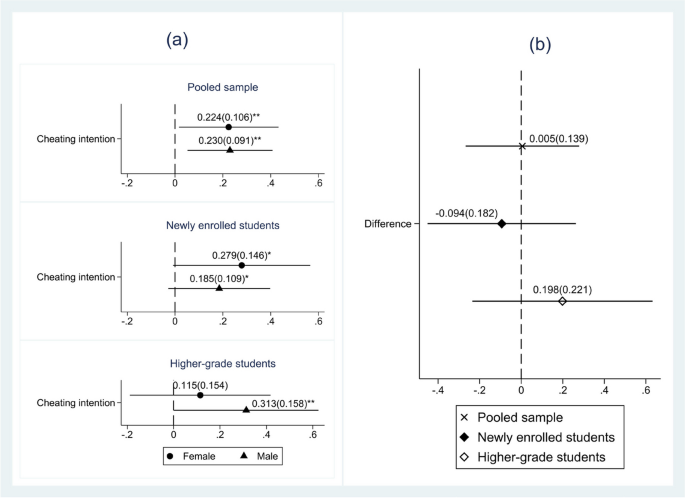

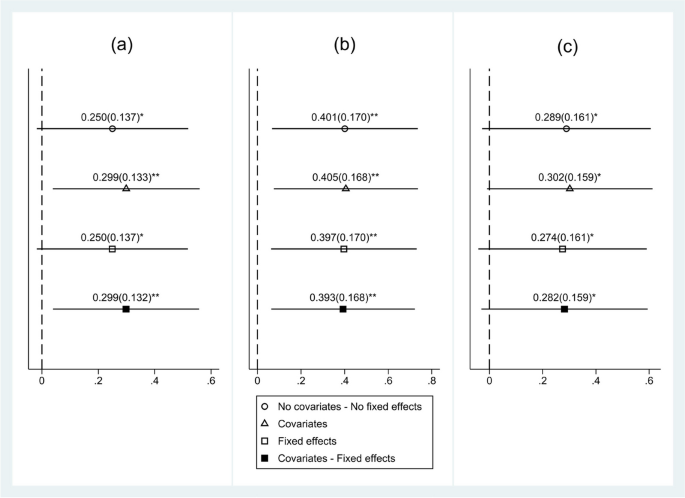

To determine the efficacy of the algorithms, we calculate the true positive rate (TPR) and the false positive rate (FPR) of the classification results. The TPR measures the proportion of actual positives that are correctly identified as such. The FPR is the proportion of all negatives that are identified as positive by the test. For each synthetic dataset, the experiment is run 20 times. We report the mean and standard deviation of the TPRs. The results of the experiments reveal that the proposed algorithm may be an effective tool in identifying cases of cheating on the final exam based on prior scores.
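The two rates can be computed directly from boolean label and prediction arrays. The sketch below uses made-up predictions for a class of 110 students, 10 of whom are labeled as cheating; the function name is hypothetical.

```python
import numpy as np

def tpr_fpr(y_true, y_pred):
    """True positive rate and false positive rate for boolean labels and flags."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = np.sum(y_true & y_pred)    # cheating cases that were flagged
    fp = np.sum(~y_true & y_pred)   # honest students that were flagged
    tpr = tp / np.sum(y_true)
    fpr = fp / np.sum(~y_true)
    return tpr, fpr

y_true = np.r_[np.zeros(100, dtype=bool), np.ones(10, dtype=bool)]
y_pred = y_true.copy()
y_pred[100] = False   # one cheating case missed
y_pred[0] = True      # one honest student flagged
tpr, fpr = tpr_fpr(y_true, y_pred)
print(tpr, fpr)
```

In this example nine of the ten cheating cases are caught (TPR 0.9) and one of the hundred honest students is flagged (FPR 0.01).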

The mean TPRs of the proposed algorithm together with the benchmark methods are presented in Table 2 . Results indicate that the proposed algorithm has the highest overall average TPR of 0.874 among all the tested algorithms. In addition, the proposed method produces the highest individual mean TPR on Datasets 2 and 3. The standard deviations of the TPRs are also presented in Table 2 ; they range between 0.05 and 0.12.

The results represent experiments on four datasets based on 20 simulated experiments. The proposed method ( NewAlgo ) produces the best overall results.

https://doi.org/10.1371/journal.pone.0254340.t002

The FPR of the proposed algorithm together with the benchmark methods is presented in Table 3 . As can be seen from the table, the proposed algorithm produces the smallest overall average FPR of 0.013 among all tested detection methods. It also produces the lowest FPR on Dataset 2 and Dataset 3.

The results represent experiments on four datasets based on 20 simulated experiments.

https://doi.org/10.1371/journal.pone.0254340.t003

To further test our proposed algorithm, we applied it to simulated data of size 220 which is double the original size used in the preceding experiments. We simulated each dataset 20 times and recorded the mean TPRs. The results of the experiments with the increased class size are largely in line with the original results. As shown in Table 4 , the mean TPR values of the proposed method range between 0.780 and 0.988. The new algorithm achieves the highest overall mean TPR of 0.872 among all the tested methods. The new overall average TPR is close to the original overall average (0.868).

The results represent experiments on four datasets based on 20 simulated experiments and the class size of 220. The proposed method ( NewAlgo ) produces the best overall results.

https://doi.org/10.1371/journal.pone.0254340.t004

The primary factor in identifying the cases of cheating is the score on the final exam relative to the prior assessments. In addition, secondary factors such as the trajectory (momentum) of the scores and the performance relative to the rest of the class must be considered. Although the naive approach does not take into account the trajectory and the relative performance, it does capture the average difference between the prior assessments and the final exam scores. As a result, it produces a robust performance. On the other hand, the standard outlier detection methods are based purely on the geometry of the sample points. The points away from the main cluster or the points in a relatively low-density region of the sample space are labeled as outliers. No special meaning is assigned to any particular feature.

To better understand the results of the numerical experiments (Tables 2–4) and to illustrate the mechanism of the outlier detection methods, consider the dataset of grades consisting of Quiz 1, Quiz 3, and the Final exam scores from the DS2 dataset (Fig 3b). The details of the cases of cheating and the outliers are provided in Table 5. As shown in Table 5, in the cases of cheating the scores on Quiz 1 and Quiz 2 are relatively stable before suddenly jumping on the Final exam. A sudden increase in the score is an indication of a potential case of cheating.

The RobustCov method is a model-based method that fits a Gaussian ellipsoid to the dataset using the central data points. As shown in Table 5, the RobustCov method selects the points with a positive trend in scores. Although it could be argued from a geometric stance that the points with a positive trend could be outliers, it would not be appropriate to make such an assumption from an academic stance. A student who consistently improves his/her marks between assessments and ultimately receives a high grade on the final exam is not surprising. The RobustCov method fails because it is unable to take into account the context of the problem.

The IsoForest method is based on the random forest classifier. It operates on the principle that anomalous points have shorter paths from the root to the terminal node. As can be seen from Table 5, the IsoForest method labels as outliers the points with either high or low-valued elements. It appears that the points with extremely low or extremely high-valued elements require the fewest splits to be isolated. As a result, the IsoForest method misses the cheating cases.

The LOF method is based on the idea of selecting the samples that have a substantially lower density than their neighbors. It selects the points that are away from clusters. The cheating cases are not identified because they form a cluster of points with low quiz scores and high final exam scores. In addition, the cheating cases are close to the cases where students gradually improve their marks, making them even harder to distinguish.

https://doi.org/10.1371/journal.pone.0254340.t005
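The behaviour of a density-based method on clustered cheating cases can be illustrated with a small, self-contained sketch of the local outlier factor. This is a plain-Python rendering of the standard LOF definition, not the implementation used in the experiments. The synthetic scores, the neighbourhood size `k = 3`, and the helper name `local_outlier_factors` are assumptions chosen for illustration.

```python
from math import dist

def local_outlier_factors(points, k):
    """LOF score per point: mean local density of its k neighbours over its own."""
    n = len(points)
    neighbours, k_distance = [], []
    for i in range(n):
        ranked = sorted((dist(points[i], points[j]), j) for j in range(n) if j != i)
        neighbours.append([j for _, j in ranked[:k]])
        k_distance.append(ranked[k - 1][0])      # distance to the k-th nearest point

    def reach_dist(i, j):
        # Reachability distance of point i with respect to its neighbour j.
        return max(k_distance[j], dist(points[i], points[j]))

    # Local reachability density: inverse of the mean reachability distance.
    lrd = [k / sum(reach_dist(i, j) for j in neighbours[i]) for i in range(n)]
    return [sum(lrd[j] for j in neighbours[i]) / (k * lrd[i]) for i in range(n)]

# Synthetic (quiz, quiz, final) scores, for illustration only.
honest = [(a, b, c) for a in (65, 70, 75) for b in (65, 70, 75) for c in (65, 70, 75)]
cheaters = [(40, 42, 90), (41, 40, 91), (42, 41, 89), (40, 41, 92)]  # low quizzes, high final
isolated = [(95, 20, 50)]                                            # unusual but unrelated profile

scores = local_outlier_factors(honest + cheaters + isolated, k=3)
cheater_scores = scores[len(honest):len(honest) + len(cheaters)]
isolated_score = scores[-1]

print([round(s, 2) for s in cheater_scores], round(isolated_score, 2))
```

Because the four synthetic cheating cases sit close to one another, their scores stay near 1, the value expected for ordinary points, while the single isolated profile receives a much larger score. This mirrors the observation above that LOF overlooks cheating cases that form their own cluster.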

The results of the experiment on the real-life data are in line with the results on simulated data. As shown in Table 6, the proposed method attains the highest TPR. Indeed, the proposed method successfully identifies all 3 cases of cheating among 52 cases. The proposed method also attains the lowest FPR. Only 4% of students who did not cheat were flagged as suspicious.

The results represent experiments on a single real-life dataset. The proposed method (NewAlgo) produces the best overall results.

https://doi.org/10.1371/journal.pone.0254340.t006
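As a rough sanity check on the real-life figures, the reported percentage can be converted back into a head count. The sketch below assumes that 52 is the total number of students and that 3 of them cheated, which is one reading of the text. The resulting count is an inference from the rounded 4% figure, not a number reported in the study.

```python
# Assumption: 52 students in total, of whom 3 cheated (one reading of the text).
total_students = 52
cheaters = 3
honest = total_students - cheaters                    # students who did not cheat

reported_fpr = 0.04                                   # "Only 4% ..." as stated in the text
implied_false_flags = round(reported_fpr * honest)    # nearest whole number of students
implied_fpr = implied_false_flags / honest            # rate implied by that whole number

print(honest, implied_false_flags, round(implied_fpr, 3))
```

Under these assumptions the 4% figure corresponds to about two honest students being flagged, which shows how coarse the FPR estimate necessarily is on a class of this size.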

The results of the numerical experiments reveal that the proposed algorithm achieves high levels of accuracy. It can identify almost all cases of cheating while avoiding falsely labeling a normal score. The proposed method significantly outperforms the benchmark methods both in TPR and FPR. The performance of our method is relatively consistent across different datasets.

In the problem of identifying the potential cases of cheating, the final exam scores play a crucial role. The proposed algorithm is tailored to compare the predicted and the actual final exam scores to identify the anomalous grades. The standard outlier detection algorithms have the added burden of learning the importance of the final exam scores from the data and therefore do not perform well.
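The comparison of predicted and actual final exam scores can be sketched as follows. The sketch deliberately replaces the recurrent network with a trivial stand-in predictor (the mean of the prior scores) and uses a hand-written Gaussian kernel density estimate over the residuals. The bandwidth, the density cutoff, and the sample records are illustrative assumptions and are not values from the study.

```python
from math import exp, pi, sqrt
from statistics import mean

def gaussian_kde(sample, bandwidth):
    """Return a function that estimates the density of `sample` with Gaussian kernels."""
    norm = len(sample) * bandwidth * sqrt(2 * pi)
    def density(x):
        return sum(exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in sample) / norm
    return density

def flag_suspicious(records, bandwidth=5.0, density_cutoff=0.01):
    """Flag students whose final score exceeds the prediction by a rare margin."""
    # Stand-in predictor (NOT an LSTM): the mean of the prior assessment scores.
    residuals = [final - mean(prior) for prior, final in records]
    density = gaussian_kde(residuals, bandwidth)      # class-wide residual distribution
    return [i for i, r in enumerate(residuals)
            if r > 0 and density(r) < density_cutoff]

# Hypothetical (prior scores, final exam score) records.
records = [
    ([60, 64], 58), ([70, 74], 70), ([80, 82], 80), ([55, 57], 56), ([66, 68], 68),
    ([72, 76], 76), ([50, 60], 58), ([61, 63], 66), ([75, 79], 82),
    ([44, 46], 85),   # final score far above what the prior scores suggest
]

print(flag_suspicious(records))
```

Only positive residuals are considered, since a final score well below the prediction is not evidence of cheating. Because the density is estimated from the residuals of the whole class, a gap is judged relative to how the rest of the class performed. A fixed cutoff is a simplification: several students with similar large gaps would raise the estimated density in that region, so in practice the threshold would need to be chosen with more care.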

5 Conclusion

The COVID-19 pandemic has forced most of the schools and universities in the world to switch to online education. In particular, exams have been administered remotely with little supervision. As a result, the likelihood of exam cheating has increased dramatically. The main objective of the present research was to examine a new approach to detect potential cases of cheating using machine learning techniques. In particular, we considered the problem of identifying cases of cheating on the final exam. We propose a novel method for identifying potential cases of cheating on the final exam using a post-exam analysis of the student grades. Our method takes into account student grades prior to the final exam, grades on the final exam, and the overall performance of the class to make a decision. We employ LSTMs in combination with a KDE-based outlier detection technique to identify the potential cases of cheating. The insights gained from this study may be of assistance to academics and administrators interested in preserving the academic integrity of course assessments.

The proposed method yields promising results on various datasets. It achieves an average TPR of 0.95 and FPR of 0.05 in our numerical experiments. Our method significantly outperforms the benchmark methods that were used in the experiments. However, it is important to note that the baseline algorithms have the added burden of learning the importance of the final exam scores from the data. So the comparison may not be entirely adequate. The performance of the proposed method is consistent across different scenarios simulated in our experiments and produces near perfect accuracy in identifying cases of cheating on the data used in the study.

The present study lays the groundwork for future educational research into outlier detection techniques, as it proposes a complement to commercial plagiarism detection software and possibly a non-intrusive deterrent alternative to highly polemical remotely invigilated exams. The reader should bear in mind, however, that the scope of this study was limited in terms of sample size and context; its replication at other universities would undoubtedly be valuable and is recommended. Further investigation and experimentation into the proposed method and academic integrity detection is therefore strongly advocated. Remote exam administration poses a great challenge to preserving the academic integrity of the exam. It is a relevant issue today and it will remain important in the future. Our method offers a great tool to help address the issue of academic integrity for remotely administered exams.

A natural progression of this work would be to observe the performance of our model by replacing the regression component with alternatives such as linear regression or Gaussian process regression. Indeed, it would be informative to test different regressor/anomaly detection combinations and perhaps even obtain an improvement over the current configuration.

Supporting information

https://doi.org/10.1371/journal.pone.0254340.s001


- Original article

- Open access

- Published: 27 November 2020

Attitudes towards cheating behavior during assessing students’ performance: student and teacher perspectives

- Dijana Vučković ORCID: orcid.org/0000-0003-2129-555X 1 ,

- Sanja Peković 2 ,

- Marijana Blečić 1 &

- Rajka Đoković 3

International Journal for Educational Integrity volume 16, Article number: 13 (2020)


Our aim in this study was to determine students’ and teachers’ attitudes towards cheating in assessing students’ performance. We used mixed methodology and the main research method was a case study. We aimed to describe how our respondents: 1. recognize ethical misconduct (EM) in several situations given through case studies, 2. understand the roles of each subject involved, 3. predict consequences of the EM and how they understand its possible causes, 4. create individual answers to EM or resolve problem situations. The research sample of students (120) includes participants from three basic study programs and two postgraduate programs in the field of education. A sample of teachers (42) was obtained from a number of faculties by random selection. Our respondents have identified most forms of EM reasonably well, although in some situations, the respondents recognized other errors (poor organization of time for learning, professors’ strict deadline for paper submission, etc.) as EM. Therefore, the issues of ethics are not completely clear to all respondents, which leads to the conclusion that universities must organize training in this field. Both groups of respondents understand EM in a similar way, and whether it is a professor or a student (or students) who commits EM has not affected their responses. Our results suggest that it is necessary to work on the prevention of fraud by discussing the consequences (especially the long-term ones, which were not considerably discussed in the comments), by learning ethical reasoning, by developing functional strategies of learning for the purpose of preventing fraud.

Introduction

While ethical misconduct (EM) has been studied at world universities since the 1990s (McCabe et al. 2001), it has become a popular topic in Montenegro only in recent years. This does not mean that various forms of EM have not been noted in higher education (ETINED Council of Europe Platform on Ethics, Transparency and Integrity in Education, 2018). Ethical misconduct in (higher) education in a country experiencing transition, where the education sector is facing a series of reforms, represents a most serious disruptive factor to progress. Since there was no research on cheating in assessing students’ performance in Montenegro, we considered it necessary to determine from the outset whether there has been a common understanding of cheating, its causes and consequences. We wanted to find out the implicit ethical norms of our respondents (Colnerud and Rosander 2009). Our aim in this study is to determine students’ and teachers’ attitudes towards academic integrity (AI) issues in assessing students’ performance.

We will first present an overview of literature relevant to our research, which also refers to the recognizing and understanding of EM, its social and individual causes and effects, as well as the role of teachers, students and institutions in the prevention and sanctioning of cheating. The results of an empirical research study with students and teachers as respondents follow further on. We have used the case study method, where the respondents evaluated ethical problems presented in case studies.

Literature review

Fraud in assessment – do we consider the same meaning?

The question posed by Ashworth et al. (1997), which refers to determining the meaning of the term academic fraud, is still relevant. The authors point out that the term does not have the same meaning for all members of the academic community, while the starting point in various studies is that there is a consensus on the meaning of the term fraud (Ashworth et al. 1997). Even though the problem is a complex one and it is quite possible that some students may have difficulties understanding its meaning (Trautner and Borland 2013), some authors think that students most certainly know what is expected of them (Burrus et al. 2013). Respondents answer the question “What do students perceive to be plagiarism/cheating?” correctly and precisely (Jones 2011), i.e. the majority of students knew what plagiarism/cheating is. In this paper, we understand the term cheating as “Actions that attempt to get any advantage by means that undermine values of integrity” (Tauginienė et al. 2018, p.12), and we focus on cheating connected with the assessment of students’ performance.

If students have been accustomed to fraud during their previous education and if they live in transitional societies where there is no coherent system of values (Hrabak et al. 2004) – which is the case in Montenegro – then it is quite natural to “mix up” the notions of what is allowed and what is not. For example, the research conducted by Hrabak et al. (2004) with medical students (who study ethics as a separate subject) showed that most of them (94%) admitted to cheating in exams, while almost half of the respondents would never report cheating. The knowledge of ethics did not appear applicable to this group of students.

Cheating in assessment represents a violation of the rules of AI, by which we understand the expectation of being “honest, trustworthy, responsible, respectful, and fair, by completing work only in ways authorized by... the institution” (Bertram Gallant 2008 , pp.10–11). Coursework and examination cheating has almost become common at universities, i.e. it has acquired the status of normative behavior among students (Chapman et al. 2004 ). Examination cheating has been treated as a more serious type of fraud compared with coursework cheating (Ashworth et al. 1997 ).

A number of types of fraud have been identified (Jones 2011; Newman 2020), and numerous attempts have been made to classify them (Ashworth et al. 1997; Lothringer 2008). The Glossary for Academic Integrity (Tauginienė et al. 2018) was compiled, and taxonomies contributing to the systematization of the increasingly abundant glossary in this field were developed (Lothringer 2008; Tauginienė et al. 2019). Despite research, taxonomies and numerous activities whose primary purpose is to preserve ethics in higher education, academic fraud is becoming more widespread and its types and forms are multiplying, so it is obvious that classifications and definitions do not considerably contribute to fraud prevention.

Spreading of cheating, its actors and frequency

We could speak of both individual and collective cheating within the students’ community (Lothringer 2008), where collective cheating may be either socially active or socially passive (Thomas 2017). Students’ frauds sometimes involve teachers, and a number of scandals at prestigious universities have been referred to in the literature (Aaron and Roche 2013; Robin 2004). Third parties, i.e. persons from outside the academic community, are also becoming more and more involved in cheating, so contract cheating is growing more and more evident (Steel 2017), meaning that ghost writing has become an “industry”, as reported by numerous studies (Glendinning 2016; Shahghasemi and Akhavan 2015). The circle of the people involved is getting bigger, while the types of fraud are multiplying, regardless of the only acceptable public (and, desirably, personal) attitude that points and the grade itself must be earned exclusively by hard work and knowledge, with due respect to all rules which (un)officially apply to knowledge assessment.

Since the 1990s this topic has been drawing particular attention (Ashworth et al. 1997 ) and there are numerous studies which have provided valuable insights, but their impact is obviously not enough because the types and forms of academic fraud have been multiplying incredibly fast mostly by means of the Internet and modern technology (Aaron and Roche 2013 ; Burrus et al. 2013 ). Besides technology, diversity in the classrooms is another important factor that strongly influences students’ cheating (Brodowsky et al. 2019 ).

Cheating poses a problem around the world (Thomas 2017 ) and it represents “a threat to the morality of the students, the integrity of their grades, and the reputation of our institutions of higher education” (Aaron and Roche 2013 , p.161). The problem of academic dishonesty represents a long-term threat to our entire society. The questionable AI of students, and often of professors (Aaron and Roche 2013 ; Thomas 2017 ) is a definite sign that morality of contemporary higher education is not at a satisfactory level. In addition, there is evidence that cheating in education begins long before university (Aaron and Roche 2013 ), as well as that it continues after university, i.e. at work (Jones 2011 ; Teixeira and Rocha 2006 ). If cheating is not prevented in a timely manner, it threatens to reoccur and persist, thus tending to become a common pattern of conduct.

Cheating is frequently researched in the disciplines of economics (Brodowsky et al. 2019), medicine (Hrabak et al. 2004), and life sciences (Dömeová and Jindrová 2013), while Joshua Newman (2020) points to a lack of research on study programs whose students will work in the public sector. A high degree of self-reporting has been recorded – students admit to having been involved in cheating – but they often do not see it as immoral behavior (Dömeová and Jindrová 2013). Education sciences students also admit to having been involved in cheating (Cummings et al. 2002). Frequent self-reporting is a certain indicator that cheating has acquired the status of habitual behavior.

Factors determining students’ cheating

Both collective and individual factors determine cheating, and cheating has also been associated with certain contexts (Thomas 2017). The cultural context represents the commonest factor which (de)motivates cheating, and the individualism vs. collectivism orientation has been recognized as the one that most influences ethical decision making (Brodowsky et al. 2019). Cultural individualism refers to an individual who does not subjugate his or her interest to the group, while in collectivism everything is subjugated to the interests of the group (Brodowsky et al. 2019; Thomas 2017). A step further in such research has been to show, through the individualism vs. collectivism orientation, that some cultures and regions of the world are more or less tolerant of fraud (Brodowsky et al. 2019). Thus, societies of the Middle East, North Africa and Eastern Europe have been recognized as utilitarian societies, and “utilitarian cultures are the most tolerant of cheating behavior wherein people view cheating as a way to accomplish the greatest good for the greatest number of people” (Brodowsky et al. 2019, p.26). The authors emphasize an important non-discriminatory idea, i.e. they point out that one should avoid overgeneralizations while determining cultural factors (Brodowsky et al. 2019). In addition, much as cultures may have powerful influences, it is clear that they are not the only ones. Certain studies indicate similarities in perception among students coming from radically different cultures (Rawwas et al. 2004). This similarity of attitudes may be explained by communication through social networks.

Directly related to individualism-collectivism is also the relation or construct agency-communion, where agency represents a tendency by which an individual boosts his or her potentials and forms his or her independence, while communion represents an opposite tendency by which an individual becomes completely devoted to the interests of the collective and directed towards belonging to the collective (Brodowsky et al. 2019 ; Thomas 2017 ). In addition to the aforementioned determinants, Thomas ( 2017 ) further analyzes several more factors, which have also been recognized as culturally modelled, such as: mind-set (fixed vs. growth mind-set), learning environment (transmission vs. active learning) and motivation. In education it is necessary to encourage growth mind-set, active learning approaches (e.g. discussion, problem solving), and students need to be constantly motivated (Thomas 2017 ).

Individuals are certainly culturally modelled, but they also have individual characteristics (e.g. demographics, capabilities, attitudes and beliefs) which make them more or less liable to cheating (Ashworth et al. 1997; Brodowsky et al. 2019; Chapman et al. 2004). When they find themselves in a peer group, individuals are especially guided by the culture of that group (McCabe et al. 2001). In student groups peer loyalty and fellow feeling are highly valued (Aaron and Roche 2013; Ashworth et al. 1997). Thus students will rarely report a fellow student who is cheating (Aaron and Roche 2013), or they will even express strong disapproval of the one who reports (Brodowsky et al. 2019).

Student group culture also implies the fact that students often model their behavior according to what they think other students do. Students cheat because they think their peers do that as well (Engler and Landau 2011 ). Moreover, they find that their fellow students do that more often than they themselves, which is seen “as a justification for their behaviors” (Engler et al. 2008 , p.99). Thus Engler and Landau ( 2011 ) prove that in fraud prevention it is of particular importance to show students what the behavior of an average student is, i.e. that their perception of fraud is unrealistic (Engler and Landau 2011 ).

The application of social norms interventions requires consistency, stability and depth, because if there is no consistency in the message on the part of the university, the student will not thoroughly adopt the value (Engler et al. 2008 ). It is especially important to position learning as a motivating factor for students, because as long as motivation is purely extrinsic (grades), the problem of values is seriously compromised (Aaron and Roche 2013 ). Students can play a major role in maintaining AI by creating the right climate, but also through peer reporting (Burrus et al. 2013 ). In addition, institutions are thought to be able to improve AI with appropriate codes of ethics and their being respected (McCabe et al. 2001 ).

Causes, effects and prevention of fraud

Various causes of fraud have been identified, such as: pressure (mostly from parents), the desire to increase GPA because of a scholarship and/or getting a job, the fact of being overburdened, a lack of self-confidence, etc. (Davis and Ludvigson 1995 ). Academic fraud often occurs because of bad time management, “everybody does that”, “nobody cares”, the material is incomprehensible (Jones 2011 ). Less personal investment in learning also causes cheating (Colnerud and Rosander 2009 ). Plagiarism seems to be unclear to students (unintentional plagiarism is also recognized), there is an alienation from the university, the influence of large groups and an emphasis on group studying – students consider these as factors which encourage cheating and plagiarism (Ashworth et al. 1997 ).

The list of causes is not exhausted, and neither is the list of consequences. It is highly important that students be aware of the consequences of their conduct and understand fully the seriousness of the mistake they make while cheating. When it comes to consequences, it is necessary to point out to students the short-term negative effects (punishments, ignorance of the subject, etc.), but even more the long-term consequences – incompetence in the profession and the resulting dangers. Students themselves are unaware of the consequences for those who commit fraud (Aaron and Roche 2013). In that respect students point out that it is of vital importance that faculties take all measures to prevent fraud (Aaron and Roche 2013). In addition to strict control of the technical conditions in which exams take place, it is particularly important that students be taught responsibility and integrity (Rosile 2007; Trautner and Borland 2013), as well as that they learn to manage their time better (Aaron and Roche 2013). Professors are expected to be more devoted, to create exam tasks in a more creative form (avoiding tasks that can be plagiarized), and to encourage higher forms of learning and thinking instead of simply memorizing data (Aaron and Roche 2013; Newman 2020; Thomas 2017). The quality of teaching and assessment must be at a high level (Ashworth et al. 1997).

Authors with a pedagogical orientation assert that the moral education of young people poses a major problem in modern society, so that theories of moral development and learning should be put to use in solving this problem (Caro et al. 2018). Therefore, one of the suggestions is rereading Lawrence Kohlberg’s theory with an emphasis on its practical side. According to Kohlberg’s theory, it is considerably more important for moral learning to work on the development of students’ moral reasoning through moral dilemmas than to teach them attitudes and values in the form of content (Caro et al. 2018). It is precisely on the principle of moral reasoning that Trautner and Borland (2013) base their suggested model of teaching by means of the so-called sociological imagination. The model consists of a series of stories encompassing moral dilemmas which are the topic for discussion and analysis (Trautner and Borland 2013). In addition, it is important to keep in mind that certain forms of academic fraud are caused by the fact that it is still necessary to teach some things directly, i.e. in the form of content, because “students may not be as competent at completing academic assignments as educators usually assume that they are” (Newman 2020, p.73). This phenomenon concerns different contents, such as general academic writing, adequate selection of literature and its proper citation, but also other important aspects. Many students have not developed learning skills (Perović and Vučković 2019).

Anonymous direct question surveys and/or Likert-type scales have been the most frequent methods in this research field (Ashworth et al. 1997; Thomas 2017).

Research context

As for our research context, it is represented by a collectivist culture which is still not sufficiently oriented towards a growth mind-set, so Thomas’s (2017) research corresponds to our context very well, even though it was undertaken in another part of the world. Namely, Thomas’s (2017) study, conducted with 207 university students in Thailand (in an area marked by a collectivist culture), corresponds to our study because Montenegrin culture is also collectivist and belongs to the region of South-Eastern Europe, which is also recognized as an area of collectivist utilitarian cultures (Brodowsky et al. 2019). In addition, teaching and learning at the university level in Montenegro, despite a number of changes that have taken place since the signing of the Bologna declaration in 2003, are still marked by traditional transmissive teaching which does not encourage a growth mind-set and agency, as confirmed by research (Perović and Vučković 2019; Vučković 2010).

The learning environment is mostly marked by traditional transmission teaching, while the motivation for learning is predominantly extrinsic – in the form of points and grades (ETINED Council of Europe Platform on Ethics, Transparency and Integrity in Education, 2018 ). Students are also often demotivated towards studying because securing employment in a particular field is very hard. It is considered that cheating starts considerably before getting to university.

The Council of Europe ETINED platform published a comparative survey on AI in six South-Eastern European countries, including Montenegro. The greatest disadvantage of that research regarding Montenegro is the fact that a small number of respondents participated, especially in the survey (ETINED 2018). However, even taking this disadvantage into consideration, we still think that the results correspond to the real situation quite well. Among other things, the report indicates:

- the lack of clear guidelines on how to preserve AI at the level of decision makers;

- HEIs do have necessary documentation (codes of ethics), but there are no clear and consistent procedures of their implementation;

- students’ cheating is not considered to be a serious problem;

- the respondents indicate that prevention is to be provided by the application of external verification measures (e.g. by software for plagiarism detection and by technical prevention of cheating during exams);

- some students do get training on academic writing, but they find it insufficient (ETINED 2018).

Among other things, the recommendations emphasize the following:

institutions must improve the learning environment;

students should be included in the prevention of academic fraud, and it is necessary to encourage them to report fraud;

academic staff must show responsibility, but also great pedagogical competence (encouraging higher forms of learning, avoiding tasks that can be plagiarized, valuing learning highly, etc.);

getting familiar with every aspect of AI must be intensified (ETINED 2018 ).

Students provided interesting information about treating fraud as a game. It was also noted that some teachers denied that students cheat, which differs drastically from what the students themselves stated (ETINED 2018).

Some of the difficulties Montenegro is facing are: low component values in the Academic Integrity Maturity Model (see Fig. 1); examples of unpunished cheating in the media; students who enrol in the university unwillingly because it is hard to get employment; and professors who do not punish students’ cheating (ETINED 2018).

Academic Integrity Maturity Model radar chart for Montenegro. Source: (ETINED Council of Europe Platform on Ethics, Transparency and Integrity in Education, 2018 , p.75)

After ETINED’s report, certain attempts were made to address the problems of AI more effectively. In March 2019, Montenegro became the first country in the Western Balkans to adopt a Law on Academic Integrity. The Law was created within the project Strengthen Academic Integrity and Combat Corruption in Higher Education, co-funded by the Council of Europe and the European Commission (2017–2019). The project as a whole was aimed at understanding questions of ethics in higher education and raising awareness about AI. Many activities have taken place within this project in the Montenegrin higher education community since 2017, which has put questions about AI at the centre of academic discussions, both among members of academia (students, teachers, researchers) and in wider society. Most of the debate in Montenegro concerns plagiarism and plagiarism-detection software, but the questions surrounding AI are much wider and deeper, since AI encompasses a whole set of academic values.

Methodology

Research design, aims and objectives.

Our aim in this study is to determine students’ and teachers’ attitudes towards cheating in assessing students’ performance. The main research method is case study (Flyvbjerg 2006 ; Yin 1994 ). We aim to describe how our respondents:

Recognize ethical misconduct (EM) in several situations given through case studies,

Understand the roles of each subject involved,

Predict consequences of the EM given in the case study and how they understand its possible causes,

Create individual recommendations for addressing the EM or resolving the problem situation.

We used a mixed methodology (qualitative and quantitative). Every question given to the respondents was open-ended, but the answers could also be coded, categorized and thematized (Vilig 2016), which allowed us to include quantitative data analysis.

The study design had a roadmap as follows:

There are many different types of academic dishonesty in the assessment of students’ performance, so we chose 8 of them from the three taxonomies arranged by Lothringer (2008). These taxonomies cover three domains of cheating (exams, writing assignments, and other assignments and actions) and comprise a total of 66 possible forms of cheating (Lothringer 2008). We chose 8 of them at random and created 8 case studies, each of which presents a moral reasoning situation.

Considering who might be the “guilty” person in each case study, we defined three “guilty” scopes of action: a. guilty student(s), b. guilty teacher(s), c. some other guilty person(s) (other students, persons outside academia, etc.). With these three dramatis personae, each of whom either does or does not engage in misconduct, there are 2³ = 8 possible combinations (3 being the number of actors), as shown in Table 1.

Each case study was created according to one row of Table 1, that is, one combination. We therefore have one situation in which all actors behave in line with ethical principles, while the last case study shows an example in which none of the actors behaves ethically. This gives our design a closer connection with reality.

We set up several questions about each case study: Is there any ethical misconduct (EM)? Who (if anyone) engaged in EM? Why do you think so? What are the possible consequences of this misconduct? What are the possible causes of this behavior? What would you recommend to be done about this case?

We gave our respondents (120 students and 42 teachers) case studies and questions in the written form. Students and teachers responded to the same questions.

An important limitation of this methodology design was the time needed for individual responses (it varied from half an hour to one hour and 50 min, with an arithmetic mean of 46.50 min), but our respondents reported that they were highly interested and motivated to understand the case studies. They rated how interesting the questionnaire was at 4.22 on a five-point scale, while the effort invested received an average score of 2.92. The effort rating is therefore not negligible, but it is important that interest received the higher score.
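The eight actor combinations described in the study design can be enumerated mechanically. The following is a minimal sketch, assuming that each of the three "guilty" scopes is treated as a yes/no flag; the actor labels and the function name are illustrative, and the order of the output is not claimed to match the order of rows in Table 1.

```python
from itertools import product

# Illustrative labels for the three "guilty" scopes named in the study design.
ACTORS = ("student", "teacher", "other person")

def misconduct_combinations(actors=ACTORS):
    """Return every assignment of ethical/unethical conduct to the actors."""
    combos = []
    for flags in product((False, True), repeat=len(actors)):
        # flags[i] is True when actors[i] engages in misconduct
        combos.append(dict(zip(actors, flags)))
    return combos

combinations = misconduct_combinations()
for number, combo in enumerate(combinations, start=1):
    guilty = [name for name, is_guilty in combo.items() if is_guilty]
    print(number, guilty or ["nobody"])
```

The enumeration yields 2³ = 8 combinations, ranging from the case in which nobody engages in misconduct to the case in which all three actors do.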

The research sample of students (120) includes participants from three basic study programs (pre-school, teacher training and pedagogy) and two postgraduate programs in the field of education. The sample is predominantly female (114 female and 6 male students), which matches the fact that these are almost exclusively “female” fields of study. The number of students in the first and the second cycle of studies is even (50.1% master’s and 49.9% bachelor). The sample of teachers was obtained from a number of faculties of the University of Montenegro by random selection; respondents who showed interest in this topic were included. The sample consisted of 28 female and 14 male teachers. Mostly younger teachers were included: 38% had up to 10 years of work in education, and 30% had from 11 to 20 years of work in education.

Since there is a large imbalance in the overall sample between the number of female and male respondents (142 female or 87.65% and 20 male or 12.35%), we did not analyze the results according to the gender variable. The case studies were balanced in terms of gender, so realistic female and male “characters” appear in them. Because the female and male “characters” in the case studies were involved in situations with different types and degrees of EM (from copying texts from paper, cheating through bugs, to registering exams without taking them previously), it was not possible to compare the respondents’ attitudes towards female and male perpetrators of EM. Therefore, the design of this study did not allow us to check whether harsher punishments were intended for male or female “characters”.
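The reported gender shares follow directly from the student and teacher counts given in the sample description. A short check, using only those counts (the function name is illustrative):

```python
# Counts reported in the sample description.
students = {"female": 114, "male": 6}
teachers = {"female": 28, "male": 14}

def gender_shares(*groups):
    """Sum counts across groups and return (count, percentage) per gender."""
    totals = {}
    for group in groups:
        for gender, count in group.items():
            totals[gender] = totals.get(gender, 0) + count
    n = sum(totals.values())
    return {g: (c, round(100 * c / n, 2)) for g, c in totals.items()}

shares = gender_shares(students, teachers)
print(shares)  # {'female': (142, 87.65), 'male': (20, 12.35)}
```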

The data we received as answers to questions about causes, effects and recommendations (which we will treat as topics) were processed in accordance with a qualitative analysis, i.e. answers were coded and categorized. We classified 13 codes for effects, 17 codes for causes and 16 codes for recommendations in total. Nine categories were formed in the topics of effects and causes, while 7 were formed in the topic of recommendations. The categories for the three topics are given in Tables 4 , 5 and 6 .

The accuracy of the assessment was defined as follows: if a respondent correctly judged the conduct of everyone who had participated in the event as ethical or unethical, the answer was recorded as correct; if he or she judged only some forms of conduct in this way, the answer was recorded as partially correct; and if he or she identified at least one form of conduct as the opposite of what it really was, i.e. if the ethical and the unethical were reversed, the answer was recorded as incorrect. The same principle was applied to the respondents’ explanations.
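The three-way scoring rule can be written as a small function. This is a sketch under stated assumptions rather than the authors' actual coding procedure: it assumes each actor's conduct is encoded as True (unethical) or False (ethical), and that conduct a respondent did not assess is simply missing from the response. The actor labels in the example are modelled on case study 2, in which the mother and the administrator act unethically and the student does not.

```python
def score_answer(truth, response):
    """Classify one respondent's assessment of a case study.

    truth:    actor -> True if the actor's conduct was unethical, else False
    response: actor -> the respondent's judgement; actors the respondent
              did not assess are absent or None (an assumed encoding)
    """
    judged = {actor: response.get(actor) for actor in truth}
    # Any reversal between ethical and unethical makes the answer incorrect.
    if any(v is not None and v != truth[a] for a, v in judged.items()):
        return "incorrect"
    # No reversals, but some conduct was left unassessed.
    if any(v is None for v in judged.values()):
        return "partially correct"
    return "correct"

# Hypothetical encoding of case study 2.
truth = {"student": False, "mother": True, "administrator": True}

full = {"student": False, "mother": True, "administrator": True}
omits_mother = {"student": False, "administrator": True}
blames_student = {"student": True, "mother": True, "administrator": True}

print(score_answer(truth, full))            # correct
print(score_answer(truth, omits_mother))    # partially correct
print(score_answer(truth, blames_student))  # incorrect
```

Checking for reversals before checking for omissions means that a single reversed judgement always yields "incorrect", regardless of how many other actors were judged accurately.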

Results and discussion

Our results show a good understanding of the EM presented in the case studies. Each EM was clearly recognized by almost 70% of the respondents (Table 2). These data confirm that students mostly know what is expected of them (Burrus et al. 2013). Several of the EM that were embedded in complex situations, in which more than one person was cheating in some way, were not recognized. The only variable that yielded a few statistically significant differences is the role of the respondent (teacher or student), so we report the results according to it. Tables 2, 3, 4, 5 and 6 show frequencies and percentages for recognizing EM, explaining EM, and suggesting consequences, causes and recommendations for each case study.

We have used Spearman’s rank correlation coefficient to evaluate the registered effects, causes and recommendations. In three cases we have obtained high coefficient values. In case study 6, in the teachers’ sample it has been noted that a better knowledge of the consequences reduces the cause (r_s = −0.849; p = 0.016). In case study 3, in the students’ sample a better knowledge of the causes leads to better recommendations (r_s = 0.841; p = 0.036). In the same sample for case study 8, a better knowledge of the causes leads to a better understanding of the consequences (r_s = 0.964; p < 0.001).
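For readers unfamiliar with the statistic, Spearman's coefficient is the Pearson correlation computed on ranks, with tied values sharing the mean of their rank positions. The sketch below is a plain-Python illustration of that definition; the two input lists are hypothetical category frequencies invented for the example, not data from this study, and the sketch does not compute p-values.

```python
from math import sqrt

def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman_rs(x, y):
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)

# Hypothetical frequencies per category (NOT data from the study).
cause_counts = [12, 9, 7, 5, 3, 2]
recommendation_counts = [10, 11, 6, 4, 1, 2]
rs = spearman_rs(cause_counts, recommendation_counts)
print(round(rs, 3))
```

A value near 1 indicates that the two sets of frequencies rise together across categories, while a value near −1 indicates that one rises as the other falls.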

We present the results story by story, although we should point out that most of the categories recognized for a single story in terms of causes, effects and recommendations are practically the same for all case studies. However, the frequency of certain categories differs from one story to another. The case studies are presented in the order given in Table 1.

Case study 1

The results of case study 1, which was ethically clear, are very interesting. In this case study, the student was able to see exam questions that happened to be near him, because the professor had left her office for some time and the students were left alone there. It is clear from the case study that the student was uncertain (“many questions went through his mind”), but he resisted the temptation. As in every case study, there was an ethical dilemma, but here it was resolved in the correct way. Almost 30% of teacher respondents described the situation as EM, as did more than 20% of students (Table 2). In their open comments, the respondents who identified EM pointed to the professor’s recklessness (14%), and they also showed distrust of the student: 19% of the respondents were convinced that the student had actually seen the questions and had kept them to himself (there were a few more students in the professor’s office). The respondents’ explanations given in the form of open answers confirmed their assessment (Table 3).

Such answers confirm the presence of distrust in academia, as well as the assumption that students will most certainly cheat if given a chance. The same respondents state that the student was selfish (“he did not tell his fellow students”) and curious (“he took a look at the professor’s desk”), and they recommend that “he should not look at other people’s stuff”. Even though the case study clearly states that the student accidentally spotted the questions on the pile of papers on the desk, these answers indicate a belief that strict prohibitions and forms of control are required, which was confirmed by the answers that followed. In our respondents’ opinion, therefore, the locus of control is predominantly external, which indicates the need for a number of changes in education. Namely, it is only when students have developed self-control that we can speak of internalized ethical norms.

Other respondents from the teacher group (71%) and the student group (80%) did not observe EM (Table 2). Their comments confirmed this: they pointed out that the student had resisted the temptation and wrote comments such as “May he keep it up!” This group states that the professor was not careless but simply trusted the students, and that relationships of trust are what should be built in higher education.

As for the three topics on which open comments were requested (effects of conduct, causes and recommendations), few categories were classified. In this case study, the categories originate from the group that wrongly identified EM. In terms of effects, these respondents think that the professor is in danger of losing her authority because she failed to exercise strict control (4 respondents) and that she allowed injustice to occur (3 respondents). The professor’s insufficient pedagogical competencies were stated as a cause of EM (5 respondents), as was the student’s fear of the exam (5 respondents). Among the recommendations we noted the need for strict control (11 teachers and 4 students), learning about AI (5 teachers) and the student’s punishment (2 students). All open comments came from respondents who had identified EM in an ethically correct situation.

Case study 2

The second case study deals with a student of medicine who cannot pass his anatomy exam, even though he has studied hard. His ambitious mother bribes a person from the faculty administration (her schoolmate), who then records a positive grade. The student does not know what his mother has done. This case study provoked intense and extensive reactions from the respondents. In all likelihood, this is because medical studies in particular are expected to be ethically pure, since they are directly associated with health care.

The way in which our respondents recognized EM in the case study is given in Table 2, whereas the accuracy of their explanations is given in Table 3. The answers marked as incorrect labelled the student as the perpetrator of EM, while the partially correct answers excluded the mother, probably on the logic that she was not a member of the academic community. However, the introductory part of the questionnaire did not state that the case studies related exclusively to members of the academic community.

The explanations of students and teachers differ to a statistically significant degree (Table 3). The students failed to recognize some of the perpetrators of EM, and a number of them identified the student in this case study as the responsible party.