A composite variable is a combination of multiple variables. It is used to measure multidimensional aspects that are difficult to observe.

- Entertainment

- Online education

- Database management, storage, and retrieval

Frequently Asked Questions

What are the 10 types of variables in research?

The 10 types of variables in research are:

- Independent

- Confounding

- Categorical

- Extraneous.

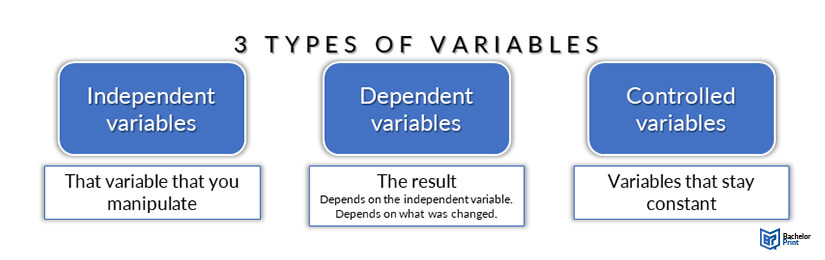

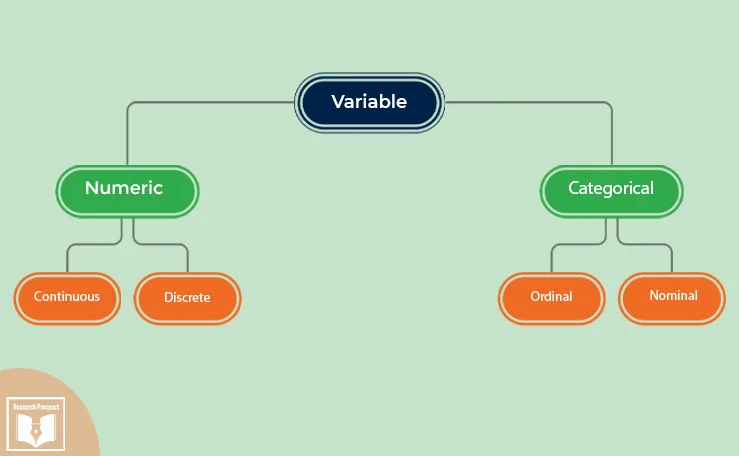

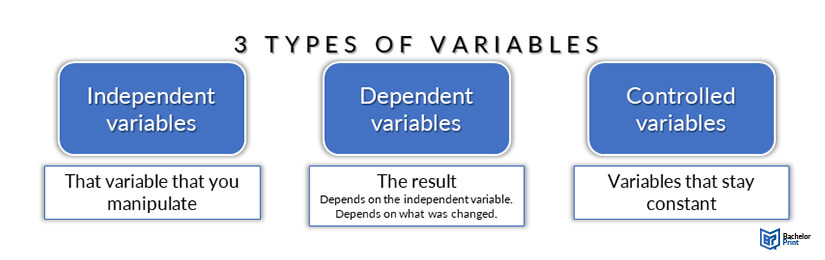

What is an independent variable?

An independent variable, often termed the predictor or explanatory variable, is the variable manipulated or categorized in an experiment to observe its effect on another variable, called the dependent variable. It’s the presumed cause in a cause-and-effect relationship, determining if changes in it produce changes in the observed outcome.

What is a variable?

In research, a variable is any attribute, quantity, or characteristic that can be measured or counted. It can take on various values, making it “variable.” Variables can be classified as independent (manipulated), dependent (observed outcome), or control (kept constant). They form the foundation for hypotheses, observations, and data analysis in studies.

What is a dependent variable?

A dependent variable is the outcome or response being studied in an experiment or investigation. It’s what researchers measure to determine the effect of changes in the independent variable. In a cause-and-effect relationship, the dependent variable is presumed to be influenced or caused by the independent variable.

What is a variable in programming?

In programming, a variable is a symbolic name for a storage location that holds data or values. It allows data storage and retrieval for computational operations. Variables have types, like integer or string, determining the nature of data they can hold. They’re fundamental in manipulating and processing information in software.

What is a control variable?

A control variable in research is a factor that’s kept constant to ensure that it doesn’t influence the outcome. By controlling these variables, researchers can isolate the effects of the independent variable on the dependent variable, ensuring that other factors don’t skew the results or introduce bias into the experiment.

What is a controlled variable in science?

In science, a controlled variable is a factor that remains constant throughout an experiment.
It ensures that any observed changes in the dependent variable are solely due to the independent variable, not other factors. By keeping controlled variables consistent, researchers can maintain experiment validity and accurately assess cause-and-effect relationships.

How many independent variables should an investigation have?

Ideally, an investigation should have one independent variable to clearly establish cause-and-effect relationships. Manipulating multiple independent variables simultaneously can complicate data interpretation. In advanced research, however, experiments with multiple independent variables (factorial designs) are used; they require careful planning to understand interactions between variables.

Variables in Research | Types, Definition & Examples

Introduction

Variables are fundamental components of research that allow for the measurement and analysis of data. They can be defined as characteristics or properties that can take on different values. In research design, understanding the types of variables and their roles is crucial for developing hypotheses, designing methods, and interpreting results. This article outlines the types of variables in research, including their definitions and examples, to provide a clear understanding of their use and significance in research studies.
By categorizing variables into distinct groups based on their roles in research, their types of data, and their relationships with other variables, researchers can more effectively structure their studies and achieve more accurate conclusions.

What is a variable?

A variable represents any characteristic, number, or quantity that can be measured or quantified. The term encompasses anything that can vary or change, ranging from simple concepts like age and height to more complex ones like satisfaction levels or economic status. Variables are essential in research as they are the foundational elements that researchers manipulate, measure, or control to gain insights into relationships, causes, and effects within their studies. They enable the framing of research questions, the formulation of hypotheses, and the interpretation of results.

Variables can be categorized based on their role in the study (such as independent and dependent variables), the type of data they represent (quantitative or categorical), and their relationship to other variables (like confounding or control variables). Understanding what constitutes a variable and the various variable types available is a critical step in designing robust and meaningful research.

What are the 5 types of variables in research?

Variables are crucial components in research, serving as the foundation for data collection, analysis, and interpretation. They are attributes or characteristics that can vary among subjects or over time, and understanding their types is essential for any study. Variables can be broadly classified into five main types, each with its distinct characteristics and roles within research. This classification helps researchers in designing their studies, choosing appropriate measurement techniques, and analyzing their results accurately.
The five types of variables include independent variables, dependent variables, categorical variables, continuous variables, and confounding variables. These categories not only facilitate a clearer understanding of the data but also guide the formulation of hypotheses and research methodologies.

Independent variables

Independent variables are foundational to the structure of research, serving as the factors or conditions that researchers manipulate or vary to observe their effects on dependent variables. These variables are considered "independent" because their variation does not depend on other variables within the study. Instead, they are the cause or stimulus that directly influences the outcomes being measured. For example, in an experiment to assess the effectiveness of a new teaching method on student performance, the teaching method applied (traditional vs. innovative) would be the independent variable.

The selection of an independent variable is a critical step in research design, as it follows directly from the study's objective of determining causality or association. Researchers must clearly define and control these variables to ensure that observed changes in the dependent variable can be attributed to variations in the independent variable, thereby affirming the reliability of the results. In experimental research, the independent variable is what differentiates the control group from the experimental group, thereby setting the stage for meaningful comparison and analysis.

Dependent variables

Dependent variables are the outcomes or effects that researchers aim to explore and understand in their studies. These variables are called "dependent" because their values depend on the changes or variations of the independent variables. Essentially, they are the responses or results that are measured to assess the impact of the independent variable's manipulation.
For instance, in a study investigating the effect of exercise on weight loss, the amount of weight lost would be considered the dependent variable, as it depends on the exercise regimen (the independent variable).

The identification and measurement of the dependent variable are crucial for testing the hypothesis and drawing conclusions from the research. It allows researchers to quantify the effect of the independent variable, providing evidence for causal relationships or associations. In experimental settings, the dependent variable is what is being tested and measured across different groups or conditions, enabling researchers to assess the efficacy or impact of the independent variable's variation.

To ensure accuracy and reliability, the dependent variable must be defined clearly and measured consistently across all participants or observations. This consistency helps in reducing measurement errors and increases the validity of the research findings. By carefully analyzing the dependent variables, researchers can derive meaningful insights from their studies, contributing to the broader knowledge in their field.

Categorical variables

Categorical variables, also known as qualitative variables, represent types or categories that are used to group observations. These variables divide data into distinct groups or categories that lack a numerical value but hold significant meaning in research. Examples of categorical variables include gender (male, female, other), type of vehicle (car, truck, motorcycle), or marital status (single, married, divorced). These categories help researchers organize data into groups for comparison and analysis.

Categorical variables can be further classified into two subtypes: nominal and ordinal. Nominal variables are categories without any inherent order or ranking among them, such as blood type or ethnicity.
Ordinal variables, on the other hand, imply a sort of ranking or order among the categories, like levels of satisfaction (high, medium, low) or education level (high school, bachelor's, master's, doctorate).

Understanding and identifying categorical variables is crucial in research as it influences the choice of statistical analysis methods. Since these variables represent categories without numerical significance, researchers employ specific statistical tests designed for nominal or ordinal variables to draw meaningful conclusions. Properly classifying and analyzing categorical variables allows for the exploration of relationships between different groups within the study, shedding light on patterns and trends that might not be evident with numerical data alone.

Continuous variables

Continuous variables are quantitative variables that can take an infinite number of values within a given range. These variables are measured along a continuum and can represent very precise measurements. Examples of continuous variables include height, weight, temperature, and time. Because they can assume any value within a range, continuous variables allow for detailed analysis and a high degree of accuracy in research findings.

The ability to measure continuous variables at very fine scales makes them invaluable for many types of research, particularly in the natural and social sciences. For instance, in a study examining the effect of temperature on plant growth, temperature would be considered a continuous variable since it can vary across a wide spectrum and be measured to several decimal places.

When dealing with continuous variables, researchers often use statistical methods that accommodate a wide range of data points and the potential for infinite divisibility. This includes various forms of regression analysis, correlation, and other techniques suited for modeling and analyzing nuanced relationships between variables.
The precision of continuous variables enhances the researcher's ability to detect patterns, trends, and causal relationships within the data, contributing to more robust and detailed conclusions.

Confounding variables

Confounding variables are those that can cause a false association between the independent and dependent variables, potentially leading to incorrect conclusions about the relationship being studied. These are extraneous variables that were not considered in the study design but can influence both the supposed cause and effect, creating a misleading correlation.

Identifying and controlling for a confounding variable is crucial in research to ensure the validity of the findings. This can be achieved through various methods, including randomization, stratification, and statistical control. Randomization helps to evenly distribute confounding variables across study groups, reducing their potential impact. Stratification involves analyzing the data within strata or layers that share common characteristics of the confounder. Statistical control allows researchers to adjust for the effects of confounders in the analysis phase.

Properly addressing confounding variables strengthens the credibility of research outcomes by clarifying the direct relationship between the dependent and independent variables, thus providing more accurate and reliable results.

Other variables in research

Beyond the primary categories of variables commonly discussed in research methodology, there exists a diverse range of other variables that play significant roles in the design and analysis of studies. Below is an overview of some of these variables, highlighting their definitions and roles within research studies:

- Discrete variables: A discrete variable is a quantitative variable that represents countable data, such as the number of children in a family or the number of cars in a parking lot. Discrete variables can only take on specific values.

- Categorical variables: A categorical variable categorizes subjects or items into groups that do not have a natural numerical order. Categorical data includes nominal variables, like country of origin, and ordinal variables, such as education level.

- Predictor variables: Often used in statistical models, a predictor variable is used to forecast or predict the outcomes of other variables, not necessarily with a causal implication.

- Outcome variables: These variables represent the results or outcomes that researchers aim to explain or predict through their studies. An outcome variable is central to understanding the effects of predictor variables.

- Latent variables: Not directly observable, latent variables are inferred from other, directly measured variables. Examples include constructs like intelligence or socioeconomic status.

- Composite variables: Created by combining multiple variables, composite variables can measure a concept more reliably or simplify the analysis. An example would be a composite happiness index derived from several survey questions.

- Preceding variables: These variables come before other variables in time or sequence, potentially influencing subsequent outcomes. A preceding variable is crucial in longitudinal studies to determine causality or sequences of events.
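To make the composite variable from the list above concrete, here is a minimal sketch of a happiness index built by averaging several survey answers. The item names, the 1 to 5 answer scale, and the choice of an unweighted mean are all assumptions made for illustration; real indices often weight or standardize items differently.

```python
def composite_score(responses: dict[str, int], items: list[str]) -> float:
    """Average the answers named in `items` into one composite value."""
    missing = [item for item in items if item not in responses]
    if missing:
        raise KeyError(f"missing survey items: {missing}")
    return sum(responses[item] for item in items) / len(items)


# Hypothetical survey items, each answered on an assumed 1-5 scale.
HAPPINESS_ITEMS = ["life_satisfaction", "positive_mood", "sense_of_purpose"]

respondent = {"life_satisfaction": 4, "positive_mood": 5, "sense_of_purpose": 3}
happiness_index = composite_score(respondent, HAPPINESS_ITEMS)
print(happiness_index)  # (4 + 5 + 3) / 3
```

The three observed answers are the measured variables; `happiness_index` is the single composite variable derived from them.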

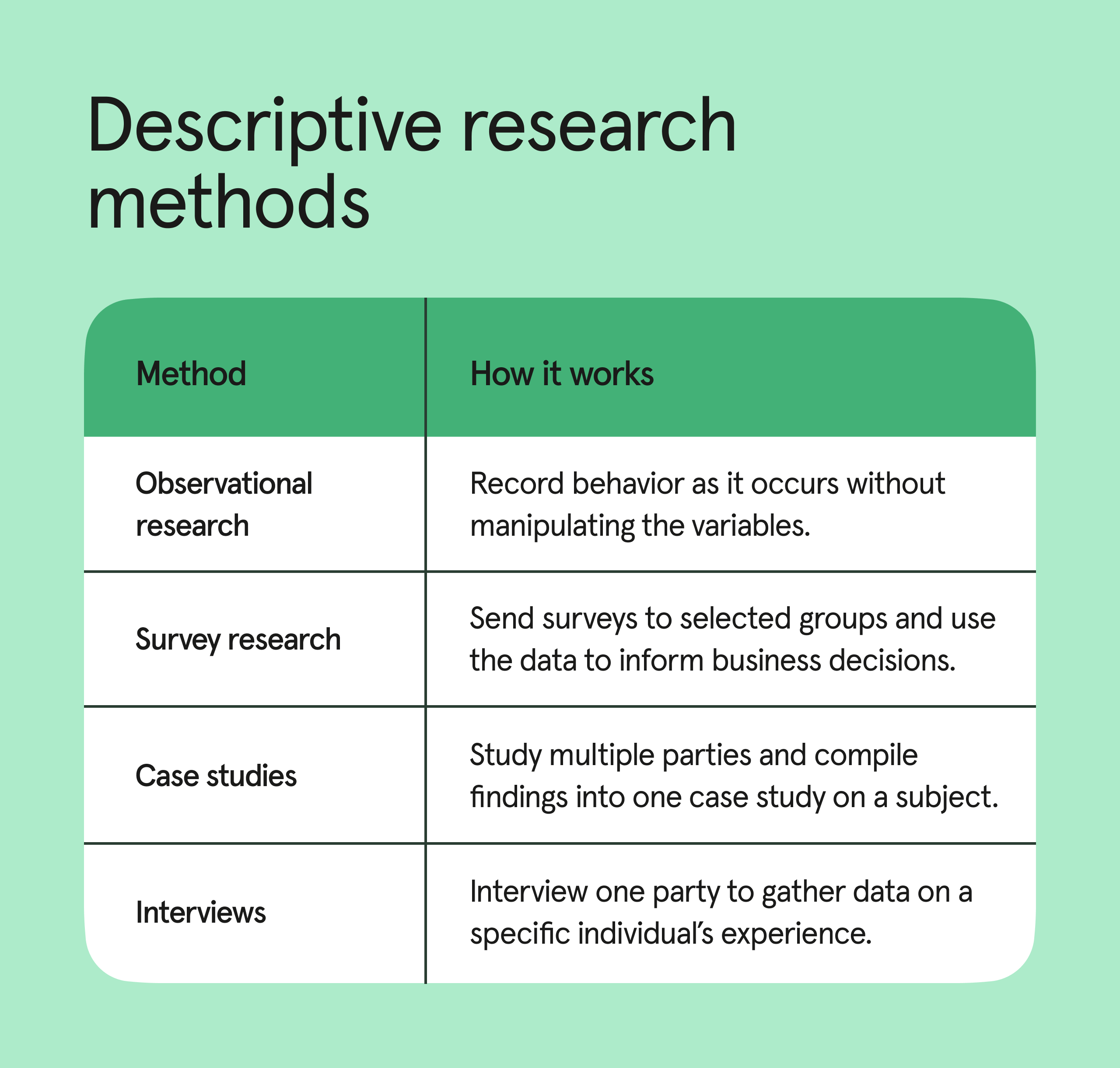

Types of Variable

All experiments examine some kind of variable(s). A variable is not only something that we measure, but also something that we can manipulate and something we can control for. To understand the characteristics of variables and how we use them in research, this guide is divided into three main sections. First, we illustrate the role of dependent and independent variables. Second, we discuss the difference between experimental and non-experimental research. Finally, we explain how variables can be characterised as either categorical or continuous.

Dependent and Independent Variables

An independent variable, sometimes called an experimental or predictor variable, is a variable that is being manipulated in an experiment in order to observe the effect on a dependent variable, sometimes called an outcome variable.

Imagine that a tutor asks 100 students to complete a maths test. The tutor wants to know why some students perform better than others. Whilst the tutor does not know the answer to this, she thinks that it might be because of two reasons: (1) some students spend more time revising for their test; and (2) some students are naturally more intelligent than others. As such, the tutor decides to investigate the effect of revision time and intelligence on the test performance of the 100 students. The dependent and independent variables for the study are:

Dependent Variable: Test Mark (measured from 0 to 100)

Independent Variables: Revision time (measured in hours); Intelligence (measured using IQ score)

The dependent variable is simply that, a variable that is dependent on an independent variable(s). For example, in our case the test mark that a student achieves is dependent on revision time and intelligence.
Whilst revision time and intelligence (the independent variables) may (or may not) cause a change in the test mark (the dependent variable), the reverse is implausible; in other words, whilst the number of hours a student spends revising and a student's IQ score may (or may not) change the test mark that a student achieves, a change in a student's test mark has no bearing on whether a student revises more or is more intelligent (this simply doesn't make sense).

Therefore, the aim of the tutor's investigation is to examine whether these independent variables - revision time and IQ - result in a change in the dependent variable, the students' test scores. However, it is also worth noting that whilst this is the main aim of the experiment, the tutor may also be interested to know if the independent variables - revision time and IQ - are also connected in some way.

In the section on experimental and non-experimental research that follows, we find out a little more about the nature of independent and dependent variables.

Experimental and Non-Experimental Research

- Experimental research: In experimental research, the aim is to manipulate an independent variable(s) and then examine the effect that this change has on a dependent variable(s). Since it is possible to manipulate the independent variable(s), experimental research has the advantage of enabling a researcher to identify a cause and effect between variables. For example, take our case of 100 students completing a maths exam where the dependent variable was the exam mark (measured from 0 to 100), and the independent variables were revision time (measured in hours) and intelligence (measured using IQ score). Here, it would be possible to use an experimental design and manipulate the revision time of the students. The tutor could divide the students into two groups, each made up of 50 students. In "group one", the tutor could ask the students not to do any revision. Alternatively, "group two" could be asked to do 20 hours of revision in the two weeks prior to the test. The tutor could then compare the marks that the students achieved.

- Non-experimental research: In non-experimental research, the researcher does not manipulate the independent variable(s). This is not to say that it is impossible to do so, but it will either be impractical or unethical to do so. For example, a researcher may be interested in the effect of illegal, recreational drug use (the independent variable(s)) on certain types of behaviour (the dependent variable(s)). However, whilst possible, it would be unethical to ask individuals to take illegal drugs in order to study what effect this had on certain behaviours. As such, a researcher could ask both drug and non-drug users to complete a questionnaire that had been constructed to indicate the extent to which they exhibited certain behaviours. Whilst it is not possible to identify the cause and effect between the variables, we can still examine the association or relationship between them.

In addition to understanding the difference between dependent and independent variables, and experimental and non-experimental research, it is also important to understand the different characteristics amongst variables. This is discussed next.

Categorical and Continuous Variables

Categorical variables are also known as discrete or qualitative variables. Categorical variables can be further categorized as either nominal, ordinal or dichotomous.

- Nominal variables are variables that have two or more categories, but which do not have an intrinsic order. For example, a real estate agent could classify their types of property into distinct categories such as houses, condos, co-ops or bungalows. So "type of property" is a nominal variable with 4 categories called houses, condos, co-ops and bungalows. Of note, the different categories of a nominal variable can also be referred to as groups or levels of the nominal variable. Another example of a nominal variable would be classifying where people live in the USA by state. In this case there will be many more levels of the nominal variable (50 in fact).

- Dichotomous variables are nominal variables which have only two categories or levels. For example, if we were looking at gender, we would most probably categorize somebody as either "male" or "female". This is an example of a dichotomous variable (and also a nominal variable). Another example might be if we asked a person if they owned a mobile phone. Here, we may categorise mobile phone ownership as either "Yes" or "No". In the real estate agent example, if type of property had been classified as either residential or commercial then "type of property" would be a dichotomous variable.

- Ordinal variables are variables that have two or more categories, just like nominal variables, except that the categories can also be ordered or ranked. So if you asked someone if they liked the policies of the Democratic Party and they could answer either "Not very much", "They are OK" or "Yes, a lot" then you have an ordinal variable. Why? Because you have 3 categories, namely "Not very much", "They are OK" and "Yes, a lot" and you can rank them from the most positive (Yes, a lot), to the middle response (They are OK), to the least positive (Not very much). However, whilst we can rank the levels, we cannot place a "value" on them; we cannot say that "They are OK" is twice as positive as "Not very much" for example.
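The three kinds of categorical variable above can be sketched in a few lines of Python. The container choices here are assumptions made for illustration: unordered sets stand in for nominal and dichotomous variables, and an ordered tuple stands in for the ordinal one. The helper names are hypothetical.

```python
# Nominal: categories with no intrinsic order, so an unordered set fits.
PROPERTY_TYPES = frozenset({"house", "condo", "co-op", "bungalow"})

# Dichotomous: a nominal variable with exactly two levels.
PHONE_OWNERSHIP = frozenset({"Yes", "No"})

# Ordinal: the position in the tuple carries the ranking,
# listed here from least to most positive.
OPINION_LEVELS = ("Not very much", "They are OK", "Yes, a lot")


def is_dichotomous(levels) -> bool:
    """A categorical variable is dichotomous when it has exactly two levels."""
    return len(levels) == 2


def opinion_rank(answer: str) -> int:
    """Return the rank of an answer; ranks order answers but are not amounts."""
    return OPINION_LEVELS.index(answer)


answers = ["They are OK", "Yes, a lot", "Not very much"]
most_positive_first = sorted(answers, key=opinion_rank, reverse=True)
print(most_positive_first)
```

Sorting by rank is meaningful for the ordinal variable, whereas dividing one rank by another would not be, which mirrors the point that "They are OK" is not twice as positive as "Not very much".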

Continuous variables are also known as quantitative variables. Continuous variables can be further categorized as either interval or ratio variables.

- Interval variables are variables for which their central characteristic is that they can be measured along a continuum and they have a numerical value (for example, temperature measured in degrees Celsius or Fahrenheit). So the difference between 20°C and 30°C is the same as 30°C to 40°C. However, temperature measured in degrees Celsius or Fahrenheit is NOT a ratio variable.

- Ratio variables are interval variables, but with the added condition that 0 (zero) of the measurement indicates that there is none of that variable. So, temperature measured in degrees Celsius or Fahrenheit is not a ratio variable because 0°C does not mean there is no temperature. However, temperature measured in Kelvin is a ratio variable as 0 Kelvin (often called absolute zero) indicates that there is no temperature whatsoever. Other examples of ratio variables include height, mass, distance and many more. The name "ratio" reflects the fact that you can use the ratio of measurements. So, for example, a distance of ten metres is twice the distance of 5 metres.
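A short calculation illustrates the interval versus ratio distinction drawn above. This is only a sketch: the two temperatures are arbitrary example values, and the helper name is hypothetical. It uses the standard conversion K = °C + 273.15.

```python
import math


def celsius_to_kelvin(celsius: float) -> float:
    """Convert degrees Celsius to kelvin (K = °C + 273.15)."""
    return celsius + 273.15


cool_c, warm_c = 20.0, 40.0  # arbitrary example temperatures

# Differences are meaningful on an interval scale: the gap is the same
# size whether it is expressed in Celsius or in kelvin.
gap_c = warm_c - cool_c
gap_k = celsius_to_kelvin(warm_c) - celsius_to_kelvin(cool_c)
print(math.isclose(gap_c, gap_k))

# Ratios are not meaningful in Celsius: 40 / 20 gives 2.0, but 40°C is
# not "twice as hot" as 20°C, because 0°C is not a true zero.
naive_ratio = warm_c / cool_c

# On the Kelvin scale, which has a true zero, the ratio is meaningful.
kelvin_ratio = celsius_to_kelvin(warm_c) / celsius_to_kelvin(cool_c)
print(naive_ratio, round(kelvin_ratio, 3))
```

The Celsius ratio of 2.0 is an artifact of where the scale places its zero, while the Kelvin ratio of roughly 1.068 reflects the actual proportion between the two temperatures.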

Ambiguities in classifying a type of variable

In some cases, the measurement scale for data is ordinal, but the variable is treated as continuous. For example, a Likert scale that contains five values - strongly agree, agree, neither agree nor disagree, disagree, and strongly disagree - is ordinal. However, where a Likert scale contains seven or more values - strongly agree, moderately agree, agree, neither agree nor disagree, disagree, moderately disagree, and strongly disagree - the underlying scale is sometimes treated as continuous (although whether you should do this is a cause of great dispute).

It is worth noting that how we categorise variables is somewhat of a choice. Whilst we categorised gender as a dichotomous variable (you are either male or female), social scientists may disagree with this, arguing that gender is a more complex variable involving more than two distinctions, with measurement levels that also include genderqueer, intersex and transgender. At the same time, some researchers would argue that a Likert scale, even with seven values, should never be treated as a continuous variable.

Research Variables 101

Independent variables, dependent variables, control variables and more

By: Derek Jansen (MBA) | Expert Reviewed By: Kerryn Warren (PhD) | January 2023

If you’re new to the world of research, especially scientific research, you’re bound to run into the concept of variables sooner or later. If you’re feeling a little confused, don’t worry – you’re not the only one! Independent variables, dependent variables, confounding variables – it’s a lot of jargon. In this post, we’ll unpack the terminology surrounding research variables using straightforward language and loads of examples.

Overview: Variables In Research

1. What (exactly) is a variable?
2. Independent variables
3. Dependent variables
4. Control variables
5. Moderating variables
6. Mediating variables
7. Confounding variables
8. Latent variables

What (exactly) is a variable?

The simplest way to understand a variable is as any characteristic or attribute that can experience change or vary over time or context – hence the name “variable”. For example, the dosage of a particular medicine could be classified as a variable, as the amount can vary (i.e., a higher dose or a lower dose). Similarly, gender, age or ethnicity could be considered demographic variables, because each person varies in these respects.

Within research, especially scientific research, variables form the foundation of studies, as researchers are often interested in how one variable impacts another, and the relationships between different variables. For example:

- How someone’s age impacts their sleep quality

- How different teaching methods impact learning outcomes

- How diet impacts weight (gain or loss)

As you can see, variables are often used to explain relationships between different elements and phenomena. In scientific studies, especially experimental studies, the objective is often to understand the causal relationships between variables. In other words, the role of cause and effect between variables. This is achieved by manipulating certain variables while controlling others – and then observing the outcome. But, we’ll get into that a little later…

The “Big 3” Variables

Variables can be a little intimidating for new researchers because there are a wide variety of variables, and oftentimes, there are multiple labels for the same thing. To lay a firm foundation, we’ll first look at the three main types of variables, namely:

- Independent variables (IV)

- Dependent variables (DV)

- Control variables

What is an independent variable?

Simply put, the independent variable is the “cause” in the relationship between two (or more) variables. In other words, when the independent variable changes, it has an impact on another variable. For example:

- Increasing the dosage of a medication (Variable A) could result in better (or worse) health outcomes for a patient (Variable B)

- Changing a teaching method (Variable A) could impact the test scores that students earn in a standardised test (Variable B)

- Varying one’s diet (Variable A) could result in weight loss or gain (Variable B).

It’s useful to know that independent variables can go by a few different names, including explanatory variables (because they explain an event or outcome) and predictor variables (because they predict the value of another variable). Terminology aside though, the most important takeaway is that independent variables are assumed to be the “cause” in any cause-effect relationship. As you can imagine, these types of variables are of major interest to researchers, as many studies seek to understand the causal factors behind a phenomenon.

What is a dependent variable?

While the independent variable is the “cause”, the dependent variable is the “effect” – or rather, the affected variable. In other words, the dependent variable is the variable that is assumed to change as a result of a change in the independent variable. Keeping with the previous example, let’s look at some dependent variables in action:

- Health outcomes (DV) could be impacted by dosage changes of a medication (IV)

- Students’ scores (DV) could be impacted by teaching methods (IV)

- Weight gain or loss (DV) could be impacted by diet (IV)

In scientific studies, researchers will typically pay very close attention to the dependent variable (or variables), carefully measuring any changes in response to hypothesised independent variables. This can be tricky in practice, as it’s not always easy to reliably measure specific phenomena or outcomes – or to be certain that the actual cause of the change is in fact the independent variable.

As the adage goes, correlation is not causation. In other words, just because two variables have a relationship doesn’t mean that it’s a causal relationship – they may just happen to vary together. For example, you could find a correlation between the number of people who own a certain brand of car and the number of people who have a certain type of job. Just because the number of people who own that brand of car and the number of people who have that type of job are correlated, it doesn’t mean that owning that brand of car causes someone to have that type of job or vice versa. The correlation could, for example, be caused by another factor such as income level or age group, which would affect both car ownership and job type.

To confidently establish a causal relationship between an independent variable and a dependent variable (i.e., X causes Y), you’ll typically need an experimental design, where you have complete control over the environment and the variables of interest. But even so, this doesn’t always translate into the “real world”. Simply put, what happens in the lab sometimes stays in the lab!

As an alternative to pure experimental research, correlational or “quasi-experimental” research (where the researcher cannot manipulate or change variables) can be done on a much larger scale more easily, allowing one to understand specific relationships in the real world. These types of studies also assume some causality between independent and dependent variables, but it’s not always clear.
So, if you go this route, you need to be cautious in terms of how you describe the impact and causality between variables and be sure to acknowledge any limitations in your own research.

What is a control variable?

In an experimental design, a control variable (or controlled variable) is a variable that is intentionally held constant to ensure it doesn’t have an influence on any other variables. As a result, this variable remains unchanged throughout the course of the study. In other words, it’s a variable that’s not allowed to vary – tough life 🙂

As we mentioned earlier, one of the major challenges in identifying and measuring causal relationships is that it’s difficult to isolate the impact of variables other than the independent variable. Simply put, there’s always a risk that there are factors beyond the ones you’re specifically looking at that might be impacting the results of your study. So, to minimise the risk of this, researchers will attempt (as best possible) to hold other variables constant. These factors are then considered control variables.

Some examples of variables that you may need to control include:

- Temperature

- Time of day

- Noise or distractions

Which specific variables need to be controlled for will vary tremendously depending on the research project at hand, so there’s no generic list of control variables to consult. As a researcher, you’ll need to think carefully about all the factors that could vary within your research context and then consider how you’ll go about controlling them. A good starting point is to look at previous studies similar to yours and pay close attention to which variables they controlled for.

Of course, you won’t always be able to control every possible variable, and so, in many cases, you’ll just have to acknowledge their potential impact and account for them in the conclusions you draw. Every study has its limitations, so don’t get fixated or discouraged by troublesome variables. Nevertheless, always think carefully about the factors beyond what you’re focusing on – don’t make assumptions!

Other types of variables

As we mentioned, independent, dependent and control variables are the most common variables you’ll come across in your research, but they’re certainly not the only ones you need to be aware of. Next, we’ll look at a few “secondary” variables that you need to keep in mind as you design your research.

- Moderating variables

- Mediating variables

- Confounding variables

- Latent variables

Let’s jump into it…

What is a moderating variable?

A moderating variable is a variable that influences the strength or direction of the relationship between an independent variable and a dependent variable. In other words, moderating variables affect how much (or how little) the IV affects the DV, or whether the IV has a positive or negative relationship with the DV (i.e., moves in the same or opposite direction).

For example, in a study about the effects of sleep deprivation on academic performance, gender could be used as a moderating variable to see if there are any differences in how men and women respond to a lack of sleep. In such a case, one may find that gender has an influence on how much students’ scores suffer when they’re deprived of sleep.

It’s important to note that while moderators can have an influence on outcomes, they don’t necessarily cause them; rather, they modify or “moderate” existing relationships between other variables. This means that it’s possible for two different groups with similar characteristics, but different levels of moderation, to experience very different results from the same experiment or study design.

What is a mediating variable?

Mediating variables are often used to explain the relationship between the independent and dependent variable(s). For example, if you were researching the effects of age on job satisfaction, then education level could be considered a mediating variable, as it may explain why older people have higher job satisfaction than younger people – they may have more experience or better qualifications, which lead to greater job satisfaction.

Mediating variables also help researchers understand how different factors interact with each other to influence outcomes. For instance, if you wanted to study the effect of stress on academic performance, then coping strategies might act as a mediating factor by influencing both stress levels and academic performance simultaneously. For example, students who use effective coping strategies might be less stressed but also perform better academically due to their improved mental state.

In addition, mediating variables can provide insight into causal relationships between two variables by helping researchers determine whether changes in one factor directly cause changes in another – or whether there is an indirect relationship between them mediated by some third factor(s). For instance, if you wanted to investigate the impact of parental involvement on student achievement, you would need to consider family dynamics as a potential mediator, since it could influence both parental involvement and student achievement simultaneously.

What is a confounding variable?

A confounding variable (also known as a third variable or lurking variable) is an extraneous factor that can influence the relationship between two variables being studied. Specifically, for a variable to be considered a confounding variable, it needs to meet two criteria:

- It must be correlated with the independent variable (this can be causal or not)

- It must have a causal impact on the dependent variable (i.e., influence the DV)
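To make the two criteria concrete, here is a minimal simulation sketch in Python (standard library only). It is an illustration under stated assumptions, not a method taken from the text: the variable names, the sample size, and the choice of normally distributed noise are all hypothetical. A confounder `z` drives both `x` (the supposed IV) and `y` (the DV), while `x` has no effect on `y` at all.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def residuals(ys, zs):
    """Residuals of ys after a simple linear regression on zs."""
    n = len(ys)
    mz, my = sum(zs) / n, sum(ys) / n
    slope = (sum((z - mz) * (y - my) for z, y in zip(zs, ys))
             / sum((z - mz) ** 2 for z in zs))
    return [y - my - slope * (z - mz) for y, z in zip(ys, zs)]


def simulate(n=5000, seed=0):
    """Hypothetical data: z influences both x and y; x never influences y."""
    rng = random.Random(seed)
    z = [rng.gauss(0, 1) for _ in range(n)]   # the confounder
    x = [zi + rng.gauss(0, 1) for zi in z]    # criterion 1: correlated with the IV
    y = [zi + rng.gauss(0, 1) for zi in z]    # criterion 2: causally affects the DV
    return z, x, y


z, x, y = simulate()
raw_r = pearson(x, y)                                   # spurious association
adjusted_r = pearson(residuals(x, z), residuals(y, z))  # after removing z
print(f"raw r = {raw_r:.2f}, r after adjusting for z = {adjusted_r:.2f}")
```

In this sketch the raw correlation between `x` and `y` is clearly positive even though neither causes the other, and it shrinks towards zero once the linear effect of `z` is removed from both. Real studies rarely have the confounder measured this cleanly, so treat this as a toy picture of the idea.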

Some common examples of confounding variables include demographic factors such as gender, ethnicity, socioeconomic status, age, education level, and health status. In addition to these, there are also environmental factors to consider. For example, air pollution could confound the impact of the variables of interest in a study investigating health outcomes.

Naturally, it’s important to identify as many confounding variables as possible when conducting your research, as they can heavily distort the results and lead you to draw incorrect conclusions. So, always think carefully about what factors may have a confounding effect on your variables of interest and try to manage these as best you can.

What is a latent variable?

Latent variables are unobservable factors that can influence the behaviour of individuals and explain certain outcomes within a study. They’re also known as hidden or underlying variables, and what makes them rather tricky is that they can’t be directly observed or measured. Instead, latent variables must be inferred from other observable data points such as responses to surveys or experiments.

For example, in a study of mental health, the variable “resilience” could be considered a latent variable. It can’t be directly measured, but it can be inferred from measures of mental health symptoms, stress, and coping mechanisms. The same applies to a lot of concepts we encounter every day – for example:

- Emotional intelligence

- Quality of life

- Business confidence

- Ease of use

One way in which we overcome the challenge of measuring the immeasurable is latent variable models (LVMs). An LVM is a type of statistical model that describes a relationship between observed variables and one or more unobserved (latent) variables. These models allow researchers to uncover patterns in their data which may not have been visible before, owing to the data’s complexity and interrelatedness with other variables. Those patterns can then inform hypotheses about cause-and-effect relationships among those same variables that were unknown prior to running the LVM. Powerful stuff, we say!

Let’s recap

In the world of scientific research, there’s no shortage of variable types, some of which have multiple names and some of which overlap with each other. In this post, we’ve covered some of the popular ones, but remember that this is not an exhaustive list.

To recap, we’ve explored:

- Independent variables (the “cause”)

- Dependent variables (the “effect”)

- Control variables (the variable that’s not allowed to vary)

Indian Dermatol Online J. v.10(1); Jan-Feb 2019
Types of Variables, Descriptive Statistics, and Sample Size

Feroze Kaliyadan, Department of Dermatology, King Faisal University, Al Hofuf, Saudi Arabia
Vinay Kulkarni, Department of Dermatology, Prayas Amrita Clinic, Pune, Maharashtra, India

This short “snippet” covers three important aspects related to statistics – the concept of variables, the importance and practical aspects of descriptive statistics, and issues related to sampling (types of sampling and sample size estimation).

What is a variable? [1, 2]

To put it in very simple terms, a variable is an entity whose value varies. A variable is an essential component of any statistical data. It is a feature of a member of a given sample or population, which is unique, and can differ in quantity or quality from another member of the same sample or population. Variables either are the primary quantities of interest or act as practical substitutes for the same. The importance of variables is that they help in operationalization of concepts for data collection. For example, if you want to do an experiment based on the severity of urticaria, one option would be to measure the severity using a scale to grade severity of itching. This becomes an operational variable. For a variable to be “good,” it needs to have some properties such as good reliability and validity, low bias, feasibility/practicality, low cost, objectivity, clarity, and acceptance. Variables can be classified in various ways, as discussed below.

Quantitative vs qualitative

A variable can collect either qualitative or quantitative data. A variable differing in quantity is called a quantitative variable (e.g., weight of a group of patients), whereas a variable differing in quality is called a qualitative variable (e.g., the Fitzpatrick skin type). A simple test which can be used to differentiate between qualitative and quantitative variables is the subtraction test. If you can subtract the value of one variable from the other to get a meaningful result, then you are dealing with a quantitative variable (this of course will not apply to rating scales/ranks).

Quantitative variables can be either discrete or continuous

Discrete variables are variables in which no values may be assumed between the two given values (e.g., number of lesions in each patient in a sample of patients with urticaria). Continuous variables, on the other hand, can take any value in between the two given values (e.g., duration for which the weals last in the same sample of patients with urticaria). One way of differentiating between continuous and discrete variables is to use the “mid-way” test. If, for every pair of values of a variable, a value exactly mid-way between them is meaningful, the variable is continuous. For example, two values for the time taken for a weal to subside can be 10 and 13 min. The mid-way value would be 11.5 min, which makes sense. However, for a number of weals, suppose you have a pair of values – 5 and 8 – the mid-way value would be 6.5 weals, which does not make sense.

Under the umbrella of qualitative variables, you can have nominal/categorical variables and ordinal variables

Nominal/categorical variables are, as the name suggests, variables which can be slotted into different categories (e.g., gender or type of psoriasis). Ordinal variables or ranked variables are similar to categorical, but can be put into an order (e.g., a scale for severity of itching).

Dependent and independent variables

In the context of an experimental study, the dependent variable (also called outcome variable) is directly linked to the primary outcome of the study. For example, in a clinical trial on psoriasis, the PASI (psoriasis area severity index) would possibly be one dependent variable.
The independent variable (sometimes also called explanatory variable) is something which is not affected by the experiment itself but which can be manipulated to affect the dependent variable. Other terms sometimes used synonymously include blocking variable, covariate, or predictor variable. Confounding variables are extra variables, which can have an effect on the experiment. They are linked with dependent and independent variables and can cause spurious association. For example, in a clinical trial for a topical treatment in psoriasis, the concomitant use of moisturizers might be a confounding variable. A control variable is a variable that must be kept constant during the course of an experiment.

Descriptive Statistics

Statistics can be broadly divided into descriptive statistics and inferential statistics.[3, 4] Descriptive statistics give a summary about the sample being studied without drawing any inferences based on probability theory. Even if the primary aim of a study involves inferential statistics, descriptive statistics are still used to give a general summary. When we describe the population using tools such as frequency distribution tables, percentages, and measures of central tendency like the mean, we are talking about descriptive statistics. When we use a specific statistical test (e.g., Mann–Whitney U-test) to compare scores between groups and express the result in terms of statistical significance, we are talking about inferential statistics.

Descriptive statistics can help in summarizing data in the form of simple quantitative measures such as percentages or means, or in the form of visual summaries such as histograms and box plots. Descriptive statistics can be used to describe a single variable (univariate analysis) or more than one variable (bivariate/multivariate analysis). In the case of more than one variable, descriptive statistics can help summarize relationships between variables using tools such as scatter plots.

Descriptive statistics can be broadly put under two categories:

- Sorting/grouping and illustration/visual displays

- Summary statistics.

Sorting and grouping

Sorting and grouping is most commonly done using frequency distribution tables. For continuous variables, it is generally better to use groups in the frequency table. Ideally, group sizes should be equal (except in extreme ends where open groups are used; e.g., age “greater than” or “less than”).

Another form of presenting frequency distributions is the “stem and leaf” diagram, which is considered to be a more accurate form of description. Suppose the weight in kilograms of a group of 10 patients is as follows: 56, 34, 48, 43, 87, 78, 54, 62, 61, 59. The “stem” records the value of the “ten's” place (or higher) and the “leaf” records the value in the “one's” place [Table 1].

Table 1: Stem and leaf plot

| Stem | Leaf |
|---|---|
| 0 | - |
| 1 | - |
| 2 | - |
| 3 | 4 |
| 4 | 3 8 |
| 5 | 4 6 9 |
| 6 | 1 2 |
| 7 | 8 |
| 8 | 7 |
| 9 | - |
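The construction of the stem and leaf plot can be sketched in a few lines of Python. This is a minimal illustration that assumes non-negative whole-number values; the function name `stem_and_leaf` is hypothetical and chosen only for this example.

```python
from collections import defaultdict


def stem_and_leaf(values):
    """Group non-negative integers by tens digit (stem), keeping sorted ones digits (leaves)."""
    groups = defaultdict(list)
    for v in sorted(values):
        groups[v // 10].append(v % 10)
    return dict(groups)


# Weights in kilograms of the 10 patients from the example.
weights = [56, 34, 48, 43, 87, 78, 54, 62, 61, 59]
plot = stem_and_leaf(weights)

# Print every stem between the smallest and largest one, including empty stems.
for stem in range(min(plot), max(plot) + 1):
    leaves = " ".join(str(leaf) for leaf in plot.get(stem, []))
    print(f"{stem} | {leaves}")
```

Run on the ten weights, this should reproduce the non-empty rows of the stem and leaf table (stems 3 to 8). Unlike a grouped frequency table, each individual value can still be read back from its stem and leaf.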

Illustration/visual display of data

The most common tools used for visual display include frequency diagrams, bar charts (for noncontinuous variables) and histograms (for continuous variables). Composite bar charts can be used to compare variables. For example, the frequency distribution in a sample population of males and females can be illustrated as given in Figure 1.

Figure 1: Composite bar chart

A pie chart helps show how a total quantity is divided among its constituent variables. Scatter diagrams can be used to illustrate the relationship between two variables. For example, global scores given for improvement in a condition like acne by the patient and the doctor [Figure 2].

Figure 2: Scatter diagram

Summary statistics

The main tools used for summary statistics are broadly grouped into measures of central tendency (such as mean, median, and mode) and measures of dispersion or variation (such as range, standard deviation, and variance).

Imagine that the data below represent the weights of a sample of 15 pediatric patients arranged in ascending order: 30, 35, 37, 38, 38, 38, 42, 42, 44, 46, 47, 48, 51, 53, 86. Just having the raw data does not mean much to us, so we try to express it in terms of some values which give a summary of the data.

The mean is the sum of all the values divided by the total number of values. In this case, we get a value of 45. The problem is that some extreme values (outliers), like “86” in this case, can skew the value of the mean. In such cases, we consider other values like the median, which is the point that divides the distribution into two equal halves. It is also referred to as the 50th percentile (50% of the values are above it and 50% are below it). In our previous example, since we have already arranged the values in ascending order, we find that the point which divides it into two equal halves is the 8th value – 42. If the total number of values is even, we take the two middle points and average them to reach the median.
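As a quick check of these figures, here is a minimal Python sketch using the standard library `statistics` module. The variable names are illustrative, and dropping the value 86 is only a way to show how strongly a single outlier pulls the mean; it is not a recommendation to discard data.

```python
from statistics import mean, median

# Weights of the 15 pediatric patients from the example, in ascending order.
weights = [30, 35, 37, 38, 38, 38, 42, 42, 44, 46, 47, 48, 51, 53, 86]

mean_all = mean(weights)      # sum of the values divided by their count
median_all = median(weights)  # middle (8th) value of the 15 sorted values

# The same summary without the outlier, to show its influence on each measure.
without_outlier = [w for w in weights if w != 86]
mean_trimmed = mean(without_outlier)
median_trimmed = median(without_outlier)  # average of the two middle values (even count)

print(f"all values:      mean = {mean_all}, median = {median_all}")
print(f"without outlier: mean = {mean_trimmed:.2f}, median = {median_trimmed}")
```

With all 15 values the mean is 45 and the median is 42. Without the outlier the mean falls to about 42.07 while the median stays at 42, which is why the median is often preferred when extreme values are present.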
The mode is the most common data point. In our example, this would be 38. The mode, as in our case, may not necessarily be in the center of the distribution.

For skewed data, or data with outliers, the median is often the most robust measure of central tendency among the mean, median, and mode. In a “symmetric” distribution, all three are the same, whereas in skewed data the median and mean differ: both are pulled toward the tail, with the mean lying further toward the tail than the median. For example, in Figure 3, a right skewed distribution is seen (direction of skew is based on the tail); the distribution of data values is longer on the right-hand (positive) side than on the left-hand side. The mean is typically greater than the median in such cases.

Figure 3: Location of mode, median, and mean

Measures of dispersion

The range gives the spread between the lowest and highest values. In our previous example, this will be 86 − 30 = 56.

A more valuable measure is the interquartile range. A quartile is one of the values which break the distribution into four equal parts. The 25th percentile is the data point which divides the group between the first one-fourth and the last three-fourths of the data. The first one-fourth will form the first quartile. The 75th percentile is the data point which divides the distribution into a first three-fourths and last one-fourth (the last one-fourth being the fourth quartile). The range between the 25th percentile and 75th percentile is called the interquartile range.

Variance is also a measure of dispersion. The larger the variance, the further the individual units are from the mean. Let us consider the same example we used for calculating the mean. The mean was 45. For the first value (30), the deviation from the mean will be −15; for the last value (86), the deviation will be +41. Similarly, we can calculate the deviations for all values in a sample. Adding these deviations and averaging might seem to give a clue to the total dispersion, but the problem is that since the deviations are a mix of negative and positive values, the final total becomes zero. To calculate the variance, this problem is overcome by adding the squares of the deviations. So variance is the sum of squares of the deviations divided by the total number in the population (for a sample we use “n − 1”). To get a more realistic value of the average dispersion, we take the square root of the variance, which is called the “standard deviation.”

The box plot

The box plot is a composite representation that portrays the median, the quartiles, the range, and the outliers; some versions also mark the mean [Figure 4].

The concept of skewness and kurtosis

Skewness is a measure of the asymmetry of a distribution. If the distribution curve is symmetric, it looks the same on either side of the central point. When this is not the case, it is said to be skewed. Kurtosis is a representation of outliers. Distributions with high kurtosis tend to have “heavy tails,” indicating a larger number of outliers, whereas distributions with low kurtosis have light tails, indicating fewer outliers. There are formulas to calculate both skewness and kurtosis [Figures 5–8].

Figure 5: Positive skew

Figure 6: High kurtosis (positive kurtosis – also called “leptokurtic”)

Figure 7: Negative skew

Figure 8: Low kurtosis (negative kurtosis – also called “platykurtic”)

Sample Size

In an ideal study, we should be able to include all units of a particular population under study, something that is referred to as a census.[5, 6] This would remove the chances of sampling error (the difference between the outcome characteristics in a random sample and the true population values – something that is virtually unavoidable when you take a random sample). However, it is obvious that this would not be feasible in most situations. Hence, we have to study a subset of the population to reach our conclusions.
This representative subset is a sample, and we need to have sufficient numbers in this sample to make meaningful and accurate conclusions and reduce the effect of sampling error.

We also need to know that sampling can be broadly divided into two types – probability sampling and nonprobability sampling. Examples of probability sampling include methods such as simple random sampling (each member in a population has an equal chance of being selected), stratified random sampling (in nonhomogeneous populations, the population is divided into subgroups, followed by random sampling in each subgroup), systematic sampling (sampling is based on a systematic technique – e.g., every third person is selected for a survey), and cluster sampling (similar to stratified sampling, except that the clusters here are preexisting clusters, unlike stratified sampling where the researcher decides on the stratification criteria). Nonprobability sampling, where units in the population do not all have an equal chance of inclusion in the sample, includes methods such as convenience sampling (e.g., sample selected based on ease of access) and purposive sampling (where only people who meet specific criteria are included in the sample).

An accurate calculation of sample size is an essential aspect of good study design. It is important to calculate the sample size well in advance, rather than have to go for post hoc analysis. A sample size that is too small may make the study underpowered, whereas a sample size which is more than necessary might lead to a wastage of resources.

We will first go through the sample size calculation for a hypothesis-based design (like a randomized control trial).

The important factors to consider for sample size calculation include study design, type of statistical test, level of significance, power and effect size, variance (standard deviation for quantitative data), and expected proportions in the case of qualitative data. This is based on previous data, either from previous studies or from the clinicians' experience. If the study is being conducted for the first time, a pilot study might be conducted, which helps generate these data for further studies based on a larger sample size. It is also important to know whether the data follow a normal distribution or not.

Two essential aspects we must understand are the concepts of Type I and Type II errors. In a study that compares two groups, a null hypothesis assumes that there is no significant difference between the two groups, with any observed difference being due to sampling or experimental error. When we reject a null hypothesis that is actually true, we label it a Type I error (its probability is denoted “alpha,” corresponding to the significance level). In a Type II error (its probability is denoted “beta”), we fail to reject a null hypothesis when the alternate hypothesis is actually true. The power of the test is expressed as “1 − β.” While there are no absolute rules, the conventionally accepted levels are 0.05 for α (corresponding to a significance level of 5%) and 0.20 for β (corresponding to a minimum recommended power of “1 − 0.20,” or 80%).

Effect size and minimal clinically relevant difference

For a clinical trial, the investigator will have to decide in advance what clinically detectable change is significant (for numerical data, this could be the anticipated outcome means in the two groups, whereas for categorical data, it could correlate with the proportions of successful outcomes in two groups). While we will not go into details of the formula for sample size calculation, some important points are as follows:

In the context where effect size is involved, the sample size is inversely proportional to the square of the effect size. What this means in effect is that reducing the effect size will lead to an increase in the required sample size.
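The inverse-square relationship can be illustrated with a short Python sketch. Note that the formula used here is an assumption brought in for illustration, not one given in the text: it is the common normal-approximation formula for comparing two group means with equal group sizes and a two-sided test, n per group = 2 × (z for 1 − α/2 + z for 1 − β)² / d², where d is the standardized effect size (difference in means divided by the standard deviation). The function name `n_per_group` is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided comparison of two means.

    Assumes equal group sizes and a normal approximation; effect_size is the
    standardized difference (difference in means / standard deviation).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power (1 - beta)
    return ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


for d in (0.8, 0.5, 0.25):
    print(f"effect size {d}: about {n_per_group(d)} participants per group")
```

Under these assumptions, halving the effect size from 0.5 to 0.25 raises the requirement from about 63 to about 252 participants per group, roughly a fourfold increase. Dedicated software (which typically uses the t distribution and the exact study design) will give slightly different numbers, so this is only a rough guide.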
Reducing the level of significance (alpha) or increasing power (1 − β) will lead to an increase in the calculated sample size.

An increase in variance of the outcome leads to an increase in the calculated sample size.

Note that for estimation-type studies/surveys, sample size calculation needs to consider some other factors too. These include an idea about total population size (this generally does not make a major difference when population size is above 20,000, so in situations where population size is not known we can assume a population of 20,000 or more). The other factor is the “margin of error” – the amount of deviation which the investigators find acceptable in terms of percentages. Regarding confidence levels, ideally, a 95% confidence level is the minimum recommended for surveys too. Finally, we need an idea of the expected/crude prevalence – either based on previous studies or based on estimates.

Sample size calculation also needs to add corrections for patient drop-outs/lost-to-follow-up patients and missing records. An important point is that in some studies dealing with rare diseases, it may be difficult to achieve the desired sample size. In these cases, the investigators might have to rework outcomes or maybe pool data from multiple centers. Although post hoc power can be analyzed, a better approach suggested is to calculate 95% confidence intervals for the outcome and interpret the study results based on this.

Financial support and sponsorship

Conflicts of interest

There are no conflicts of interest.

Types of Variables in Research – Definition & Examples

A fundamental component in statistical investigations is the methodology you employ in selecting your research variables. The careful selection of appropriate variable types can significantly enhance the robustness of your experimental design. This piece explores the diverse array of variable classifications within the field of statistical research. Additionally, understanding the different types of variables in research can greatly aid in shaping your experimental hypotheses and outcomes.

Table of contents

- 1 Types of Variables in Research – In a Nutshell

- 2 Definition: Types of variables in research

- 3 Types of variables in research – Quantitative vs. Categorical

- 4 Types of variables in research – Independent vs. Dependent

- 5 Other useful types of variables in research

Types of Variables in Research – In a Nutshell

- A variable is an attribute of an item of analysis in research.

- The types of variables in research can be categorized into: independent vs. dependent, or categorical vs. quantitative.

- The types of variables in research (correlational) can be classified into predictor or outcome variables.

- Other types of variables in research are confounding variables, latent variables, and composite variables.

Definition: Types of variables in research

A variable is a trait of an item of analysis in research. Types of variables in research are imperative, as they describe and measure places, people, ideas, or other research objects. There are many types of variables in research, so you must choose the right ones for your study. Note that the correct variable will help with your research design, test selection, and result interpretation.

In a study testing whether some genders are more stress-tolerant than others, variables you can include are the level of stressors in the study setting, male and female subjects, and productivity levels in the presence of stressors.

Also, before choosing which types of variables in research to use, you should know how the various types work and which statistical tests and result interpretations suit your study. The key is to determine the type of data the variable contains and the part of the experiment the variable represents.

Types of variables in research – Quantitative vs. Categorical

Data is the specific value of a variable that you record in a data sheet. It is generally divided into quantitative and categorical classes. Quantitative or numerical data represents amounts, while categorical data represents collections or groupings.

The type of data contained in your variable will determine the type of variable. For instance, variables consisting of quantitative data are called quantitative variables, while those containing categorical data are called categorical variables. The section below explains these two types of variables in research in more detail.

Quantitative variables

The scores you record when collecting quantitative data usually represent real values you can add, divide, subtract, or multiply. There are two types of quantitative variables: discrete variables and continuous variables.
The table below explains the elements that set apart discrete and continuous types of variables in research: | | | | | Discrete or integer variables | Individual item counts or values | • Number of employees in a company

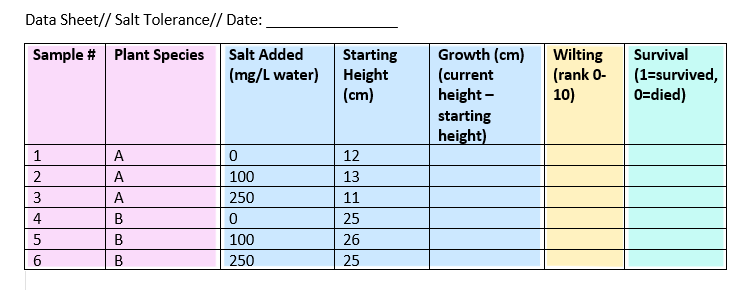

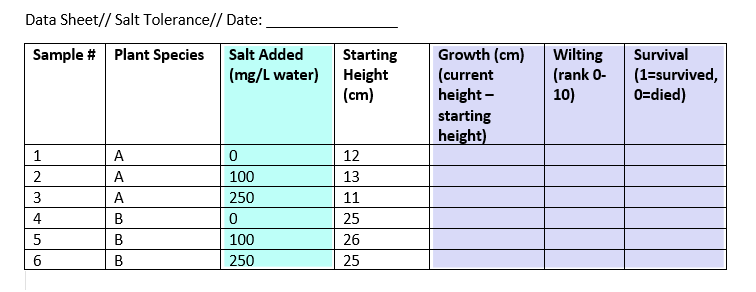

• Number of students in a school district

| | Continuous or ratio variables | Measurements of non-finite or continuous scores | • Age

• Weight

• Volume

• Distance | Categorical variablesCategorical variables contain data representing groupings. Additionally, the data in categorical variables is sometimes recorded as numbers . However, the numbers represent categories instead of real amounts. There are three categorical types of variables in research: nominal variables, ordinal variables , and binary variables . Here is a tabular summary. | | | | | Binary/dichotomous variables | YES/NO outcomes | • Win/lose in a game

• Pass/fail in an exam

| | Nominal variables | No-rank groups or orders between groups | • Colors

• Participant name

• Brand names

| | Ordinal variables | Groups ranked in a particular order | • Performance rankings in an exam

• Rating scales of survey responses | It is worth mentioning that some categorical variables can function as multiple types. For example, in some studies, you can use ordinal variables as quantitative variables if the scales are numerical and not discrete. Data sheet of quantitative and categorical variablesA data sheet is where you record the data on the variables in your experiment. In a study of the salt-tolerance levels of various plant species, you can record the data on salt addition and how the plant responds in your datasheet. The key is to gather the information and draw a conclusion over a specific period and filling out a data sheet along the process. Below is an example of a data sheet containing binary, nominal, continuous , and ordinal types of variables in research. | | | | | | | | A | 12 | 0 | - | - | - | | A | 18 | 50 | - | - | - | | B | 11 | 0 | - | - | - | | B | 15 | 50 | - | - | - | | C | 25 | 0 | - | - | - | | C | 31 | 50 | - | - | - |  Types of variables in research – Independent vs. Dependent The purpose of experiments is to determine how the variables affect each other. As stated in our experiment above, the study aims to find out how the quantity of salt introduce in the water affects the plant’s growth and survival. Therefore, the researcher manipulates the independent variables and measures the dependent variables . Additionally, you may have control variables that you hold constant. The table below summarizes independent variables, dependent variables , and control variables . 
| Type of variable | Definition | Example (salt-tolerance experiment) |
| --- | --- | --- |
| Independent/treatment variables | The variables you manipulate to affect the experiment outcome | The amount of salt added to the water |
| Dependent/response variables | The variables that represent the experiment outcomes | The plant’s growth or survival |
| Control variables | Variables held constant throughout the study | Temperature or light in the experiment room |

Data sheet of independent and dependent variables

In the salt-tolerance research, there is one independent variable (salt amount) and three dependent variables. All other variables are neither dependent nor independent. Below is a data sheet based on our experiment:

Types of variables in correlational research

The types of variables in research may differ depending on the study. In correlational research, dependent and independent variables do not apply because the study objective is not to determine a cause-and-effect link between variables. However, one variable may precede the other; for example, illness precedes death, and not vice versa. In such an instance, the preceding variable (illness) is the predictor variable, while the other is the outcome variable.

Other useful types of variables in research

The key to conducting effective research is to first define which of your variables are independent and which are dependent. Next, determine whether they are categorical or numerical so you can choose the proper statistical tests for your study. Below are other types of variables in research worth understanding.
| Type of variable | Definition | Example |
| --- | --- | --- |
| Confounding variables | Hide the actual effect of another variable in your study | Pot size and soil type |
| Latent variables | Cannot be measured directly | Salt tolerance |
| Composite variables | Formed by combining multiple variables | The health variables combined into a single health score |

What is the definition for independent and dependent variables?

An independent variable is the one you believe is the cause of the outcome, while the dependent variable is the one you believe is affected by it, that is, the outcome of your study.

What are quantitative and categorical variables?

Quantitative variables contain data that represents amounts, while categorical variables contain data that represents groupings. Knowing the types of variables in research that you can work with will help you choose the best statistical tests and result representation techniques. It will also help you with your study design.

Discrete and continuous variables: What is their difference?

Discrete variables represent counts, like the number of objects. In contrast, continuous variables represent measurable quantities like age, volume, and weight.

The Different Types Of Variables Used In Research And Statistics
