
Article Contents

- What is randomisation?

- Why do we randomise?

- Choosing a randomisation method

- Implementing the chosen randomisation method


Randomisation: What, Why and How?


Zoë Hoare, Randomisation: What, Why and How?, Significance , Volume 7, Issue 3, September 2010, Pages 136–138, https://doi.org/10.1111/j.1740-9713.2010.00443.x


Randomisation is a fundamental aspect of randomised controlled trials, but how many researchers fully understand what randomisation entails or what needs to be taken into consideration to implement it effectively and correctly? Here, for students or for those about to embark on setting up a trial, Zoë Hoare gives a basic introduction to help approach randomisation from a more informed direction.

What is randomisation?

Most trials of new medical treatments, and most other trials for that matter, now implement some form of randomisation. The idea sounds so simple that defining it becomes almost a joke: randomisation is “putting participants into the treatment groups randomly”. If only it were that simple. Randomisation can be a minefield, and not everyone understands what exactly it is or why they are doing it.

A key feature of a randomised controlled trial is that it is genuinely not known whether the new treatment is better than what is currently offered. The researchers should be in a state of equipoise; although they may hope that the new treatment is better, there is no definitive evidence to back this hypothesis up. This evidence is what the trial is trying to provide.

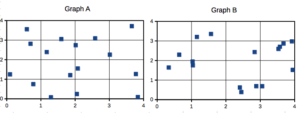

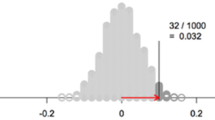

You will have, at its simplest, two groups: patients who are getting the new treatment, and those getting the control or placebo. You do not hand-select which patient goes into which group, because that would introduce selection bias. Instead you allocate your patients randomly. In its simplest form this can be done by the tossing of a fair coin: heads, the patient gets the trial treatment; tails, they get the control. Simple randomisation is a fair way of ensuring that any differences that occur between the treatment groups arise completely by chance. But – and this is the first but of many here – simple randomisation can lead to unbalanced groups, that is, groups of unequal size. This is particularly true if the trial is only small. For example, tossing a fair coin 10 times will result in exactly five heads and five tails only about 25% of the time. We would have a 66% chance of getting 6 heads and 4 tails, 5 and 5, or 4 and 6; the remaining 34% of the time we would get an even larger imbalance, with 7, 8, 9 or even all 10 patients in one group and the other group correspondingly undersized.

The impact of an imbalance like this is far greater for a small trial than for a larger trial. Tossing a fair coin 100 times will result in an imbalance larger than 60–40 only about 3.5% of the time. One important part of the trial design process is stating the intention to use randomisation; we then need to establish which method to use, when it will be used, and whether or not it is in fact random.
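The coin-toss probabilities discussed here follow from the binomial distribution. The sketch below computes them exactly; the helper name `prob_heads_between` is ours and not from the article.

```python
from math import comb


def prob_heads_between(n: int, lo: int, hi: int) -> float:
    """Probability that n tosses of a fair coin give between lo and hi heads (inclusive)."""
    return sum(comb(n, k) for k in range(lo, hi + 1)) / 2 ** n


# Ten participants allocated by a fair coin
p_five_five = prob_heads_between(10, 5, 5)          # exactly 5 v 5
p_within_six_four = prob_heads_between(10, 4, 6)    # 6 v 4, 5 v 5 or 4 v 6
p_worse_than_six_four = 1 - p_within_six_four       # 7 v 3 or more extreme

# One hundred participants: any split more extreme than 60 v 40
p_worse_than_60_40 = 1 - prob_heads_between(100, 40, 60)

print(f"10 tosses, exactly 5 v 5:         {p_five_five:.3f}")
print(f"10 tosses, 6 v 4 or better:       {p_within_six_four:.3f}")
print(f"10 tosses, worse than 6 v 4:      {p_worse_than_six_four:.3f}")
print(f"100 tosses, worse than 60 v 40:   {p_worse_than_60_40:.3f}")
```

For ten tosses the three quantities are 252/1024, 672/1024 and 352/1024. The same function can be reused to explore how quickly the risk of a severe imbalance shrinks as the trial grows.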


Why do we randomise?

It is partly true to say that we do it because we have to. The Consolidated Standards of Reporting Trials (CONSORT) [1], to which we should all adhere, tells us: “Ideally, participants should be assigned to comparison groups in the trial on the basis of a chance (random) process characterized by unpredictability.” The requirement is there for a reason. Randomisation of the participants is crucial because it allows the principles of statistical theory to stand and as such allows a thorough analysis of the trial data without bias. The exact method of randomisation can have an impact on the trial analyses, and this needs to be taken into account when writing the statistical analysis plan.

Ideally, simple randomisation would always be the preferred option. However, in practice there often needs to be some control of the allocations to avoid severe imbalances within treatments or within categories of patient. You would not want, for example, all the males under 30 to be in one group and all the females over 70 in the other. This is where restricted or stratified randomisation comes in.

Restricted randomisation means using any method that controls the split of allocations between the treatment groups according to certain criteria. This can be as simple as generating a random list, such as AAABBBABABAABB …, and allocating each participant as they arrive to the next treatment on the list. At certain points within the allocations we know that the groups will be balanced in numbers – here at the 6th, 8th, 10th and 14th participants – and we can control the maximum imbalance at any one time.
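One common way to produce such a list is with permuted blocks: each block contains equal numbers of each treatment in a shuffled order. The sketch below is illustrative only; the function names and the choice of block sizes are our assumptions, not something the article specifies.

```python
import random


def permuted_block_list(block_sizes, arms=("A", "B"), seed=None):
    """Build an allocation list from shuffled blocks, each balanced across the arms."""
    rng = random.Random(seed)
    allocations = []
    for size in block_sizes:
        if size % len(arms) != 0:
            raise ValueError("each block size must be a multiple of the number of arms")
        block = list(arms) * (size // len(arms))
        rng.shuffle(block)
        allocations.extend(block)
    return allocations


def balance_points(allocations, arms=("A", "B")):
    """Return the 1-based positions at which every arm has received the same number."""
    counts = {arm: 0 for arm in arms}
    points = []
    for position, arm in enumerate(allocations, start=1):
        counts[arm] += 1
        if len(set(counts.values())) == 1:
            points.append(position)
    return points


# The example list from the text balances after these participants:
print(balance_points("AAABBBABABAABB"))  # [6, 8, 10, 14]

# A freshly generated list with blocks of 6, 2, 2 and 4 balances at least at the block ends.
print("".join(permuted_block_list([6, 2, 2, 4], seed=1)))
```

Because every block is balanced, the imbalance can never exceed half the largest block size, which is how the maximum imbalance is controlled.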

Stratified randomisation sets out to control the balance in certain baseline characteristics of the participants – such as sex or age. This can be thought of as producing an individual randomisation list for each of the characteristics concerned.


Stratification variables are the baseline characteristics that you think might influence the outcome your trial is trying to measure. For example, if you thought gender was going to have an effect on the efficacy of the treatment then you would use it as one of your stratification variables. A stratified randomisation procedure would aim to ensure a balance of the two gender groups between the two treatment groups.

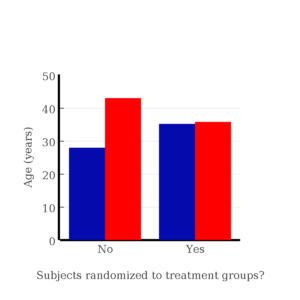

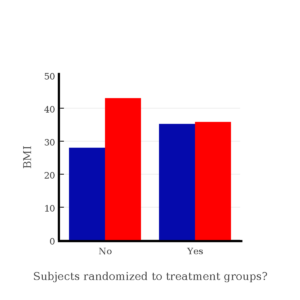

If you also thought age would be affecting the treatment then you could also stratify by age (young/old) with some sensible limits on what old and young are. Once you start stratifying by age and by gender, you have to start taking care. You will need to use a stratified randomisation process that balances at the stratum level (i.e. at the level of those characteristics) to ensure that all four strata (male/young, male/old, female/young and female/old) have equivalent numbers of each of the treatment groups represented.
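Balancing at the stratum level can be sketched as one restricted list per stratum. The class below is a minimal illustration under assumptions of ours (two arms, a fixed block size of 4, and the hypothetical name `StratifiedBlockRandomiser`); a real trial would normally use a validated randomisation service.

```python
import random
from collections import defaultdict


class StratifiedBlockRandomiser:
    """Keeps a separate permuted-block list for each stratum (illustrative only)."""

    def __init__(self, arms=("A", "B"), block_size=4, seed=None):
        if block_size % len(arms) != 0:
            raise ValueError("block_size must be a multiple of the number of arms")
        self.arms = tuple(arms)
        self.block_size = block_size
        self._rng = random.Random(seed)
        # stratum -> allocations not yet used from that stratum's current block
        self._pending = defaultdict(list)

    def allocate(self, stratum):
        """Return the next allocation for a participant in the given stratum."""
        pending = self._pending[stratum]
        if not pending:  # start a fresh shuffled block for this stratum
            block = list(self.arms) * (self.block_size // len(self.arms))
            self._rng.shuffle(block)
            pending.extend(block)
        return pending.pop()


randomiser = StratifiedBlockRandomiser(seed=42)
for stratum in [("male", "young"), ("female", "old"), ("male", "young")]:
    print(stratum, "->", randomiser.allocate(stratum))
```

Each stratum is balanced after every completed block, so the four strata end up with near-equal numbers on each treatment regardless of the order in which participants arrive.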

“Great”, you might think. “I'll just stratify by all my baseline characteristics!” Better not. Stop and consider what this would mean. As the number of stratification variables increases linearly, the number of strata increases exponentially. This reduces the number of participants that would appear in each stratum. In our example above, with our two stratification variables of age and sex we had four strata; if we added, say, “blue-eyed” and “overweight” to our criteria to give four stratification variables each with just two levels we would get 16 strata. How likely is it that each of those strata will be represented in the population targeted by the trial? In other words, will we be sure of finding a blue-eyed young male who is also overweight among our patients? And would one such overweight possible Adonis be statistically enough? It becomes evident that implementing pre-generated lists within each stratification level or stratum and maintaining an overall balance of group sizes becomes much more complicated with many stratification variables and the uncertainty of what type of participant will walk through the door next.
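The growth in the number of strata is simple arithmetic: it is the product of the number of levels of each stratification variable. A short sketch, using the four two-level variables from the example (the level labels are our own placeholders):

```python
from itertools import product
from math import prod

# Stratification variables and their levels (labels are illustrative placeholders)
levels = {
    "sex": ["male", "female"],
    "age": ["young", "old"],
    "eye colour": ["blue", "not blue"],
    "weight": ["overweight", "not overweight"],
}

strata = list(product(*levels.values()))
n_strata = prod(len(values) for values in levels.values())

print(n_strata)    # 16
print(strata[0])   # ('male', 'young', 'blue', 'overweight')
```

With only the first two variables the same calculation gives 2 × 2 = 4 strata; each additional two-level variable doubles the count.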

Choosing a randomisation method

Does it matter? There is a wide variety of methods for randomisation, and which one you choose does actually matter. The method needs to be able to do everything that is required of it. Ask yourself these questions, and others:

Can the method accommodate enough treatment groups? Some methods are limited to two treatment groups; many trials involve three or more.

What type of randomness, if any, is injected into the method? The level of randomness dictates how predictable a method is.

A deterministic method has no randomness, meaning that with all the previous information you can tell in advance which group the next patient to appear will be allocated to. Allocating alternate participants to the two treatments using ABABABABAB … would be an example.

A static random element means that each allocation is made with a pre-defined probability. The coin-toss method does this.

With a dynamic element the probability of allocation is always changing in relation to the information received, meaning that the probability of allocation can only be worked out with knowledge of the algorithm together with all its settings. A biased coin toss does this where the bias is recalculated for each participant.
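The three levels of randomness can be contrasted in a few lines. This is a sketch only: the function names are ours, and for the dynamic case we have chosen Efron's biased coin (favouring the under-represented group with probability 2/3) as one concrete example; the article does not name a specific rule.

```python
import random


def deterministic(previous):
    """Strict alternation ABAB...: the next allocation is fully predictable."""
    return "A" if len(previous) % 2 == 0 else "B"


def static_coin(previous, rng, p_a=0.5):
    """Same pre-defined probability for every participant, whatever has gone before."""
    return "A" if rng.random() < p_a else "B"


def dynamic_biased_coin(previous, rng, p_favour=2 / 3):
    """Probability is recalculated from the current imbalance before each allocation."""
    imbalance = previous.count("A") - previous.count("B")
    if imbalance == 0:
        p_a = 0.5
    elif imbalance > 0:
        p_a = 1 - p_favour   # A is ahead, so favour B
    else:
        p_a = p_favour       # B is ahead, so favour A
    return "A" if rng.random() < p_a else "B"


rng = random.Random(2010)
history = []
for _ in range(20):
    history.append(dynamic_biased_coin(history, rng))
print("".join(history), history.count("A"), history.count("B"))
```

Only the deterministic rule can be predicted from the history alone; the dynamic rule needs both the history and the algorithm's settings (here `p_favour`) to work out the next allocation probability.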

Can the method accommodate stratification variables, and if so how many? Not all of them can. And can it cope with continuous stratification variables? Most variables are divided into mutually exclusive categories (e.g. male or female), but sometimes it may be necessary (or preferable) to use a continuous scale of the variable – such as weight, or body mass index.

Can the method use an unequal allocation ratio? Not all trials require equal-sized treatment groups. There are many reasons why it might be wise to have more patients receiving treatment A than treatment B [2]. However, an allocation ratio other than 1:1 does affect the study design and the calculation of the sample size, so it is not something to change mid-trial. Not all allocation methods can cope with this inequality.
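As an illustration of a method that can cope with unequal allocation, a blocked list can be built from a block that already reflects the ratio. The sketch assumes a 2:1 ratio and a hypothetical helper `ratio_block_list`; neither comes from the article.

```python
import random


def ratio_block_list(n_blocks, ratio, seed=None):
    """Allocation list made of shuffled blocks that each respect the allocation ratio.

    ratio maps each arm to its weight, e.g. {"A": 2, "B": 1} for 2:1.
    """
    rng = random.Random(seed)
    template = [arm for arm, weight in ratio.items() for _ in range(weight)]
    allocations = []
    for _ in range(n_blocks):
        block = template[:]
        rng.shuffle(block)
        allocations.extend(block)
    return allocations


allocations = ratio_block_list(10, {"A": 2, "B": 1}, seed=7)
print("".join(allocations))
print(allocations.count("A"), allocations.count("B"))  # 20 10
```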

Is thresholding used in the method? Thresholding handles imbalances in allocation. A threshold is set and if the imbalance becomes greater than the threshold then the allocation becomes deterministic to reduce the imbalance back below the threshold.
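A threshold rule can be sketched as a fair coin that is overridden once the imbalance reaches a set limit. This is one possible reading of the description (forcing the allocation as soon as the threshold is reached, so that it is never exceeded); the function name and the default threshold of 3 are our assumptions.

```python
import random


def allocate_with_threshold(previous, rng, threshold=3):
    """Fair coin unless the imbalance has reached the threshold, then deterministic."""
    imbalance = previous.count("A") - previous.count("B")
    if imbalance >= threshold:
        return "B"           # A is too far ahead: force B
    if imbalance <= -threshold:
        return "A"           # B is too far ahead: force A
    return rng.choice("AB")  # otherwise an ordinary fair coin toss


rng = random.Random(3)
history = []
worst = 0
for _ in range(200):
    history.append(allocate_with_threshold(history, rng))
    worst = max(worst, abs(history.count("A") - history.count("B")))
print(worst)  # never larger than the threshold
```

The price of the guarantee is predictability: whenever the threshold has been reached, anyone who knows the allocation history knows the next allocation.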

Can the method be implemented sequentially? In other words, does it require that the total number of participants be known at the beginning of the allocations? Some methods generate lists requiring exactly N participants to be recruited in order to be effective – and recruiting participants is often one of the more problematic parts of a trial.

Is the method complex? If so, then its practical implementation becomes an issue for the day-to-day running of the trial.

Is the method suitable to apply to a cluster randomisation? Cluster randomisations are used when randomising groups of individuals to a treatment rather than the individuals themselves. This can be due to the nature of the treatment, such as a new teaching method for schools or a dietary intervention for families. Using clusters is a big part of the trial design and the randomisation needs to be handled slightly differently.
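In a cluster randomisation the unit that is shuffled is the cluster, and every individual inherits the allocation of their cluster. A minimal sketch, with hypothetical school and pupil names and a simple shuffle-then-alternate rule chosen by us for illustration:

```python
import random


def randomise_clusters(cluster_members, arms=("intervention", "control"), seed=None):
    """Randomly allocate whole clusters; individuals take their cluster's allocation."""
    rng = random.Random(seed)
    clusters = sorted(cluster_members)
    rng.shuffle(clusters)
    # Deal the shuffled clusters out to the arms in turn so arm sizes stay balanced
    cluster_arm = {c: arms[i % len(arms)] for i, c in enumerate(clusters)}
    individual_arm = {
        person: cluster_arm[cluster]
        for cluster, members in cluster_members.items()
        for person in members
    }
    return cluster_arm, individual_arm


schools = {
    "School 1": ["pupil 1", "pupil 2"],
    "School 2": ["pupil 3"],
    "School 3": ["pupil 4", "pupil 5"],
    "School 4": ["pupil 6"],
}
cluster_arm, individual_arm = randomise_clusters(schools, seed=3)
print(cluster_arm)
```

Note that the arms are balanced in the number of clusters, not necessarily in the number of individuals, which is one reason cluster trials need their own design and analysis considerations.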

Should a response-adaptive method be considered? If there is some evidence that one treatment is better than another, a response-adaptive method takes into account the outcomes of previous allocations and works to minimise the number of participants on the “wrong” treatment.
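One well-known response-adaptive scheme is the randomised play-the-winner urn: a success adds a ball for the same treatment, a failure adds a ball for the other. The article does not name a specific method, so the sketch below is our choice of illustration, restricted to two arms, with a hypothetical class name.

```python
import random


class PlayTheWinnerUrn:
    """Two-arm randomised play-the-winner urn (illustrative sketch)."""

    def __init__(self, arms=("A", "B"), initial_balls=1, seed=None):
        if len(arms) != 2:
            raise ValueError("this sketch supports exactly two arms")
        self._rng = random.Random(seed)
        self.balls = {arm: initial_balls for arm in arms}

    def allocate(self):
        """Draw a ball (with replacement): more balls means a higher chance."""
        arms = list(self.balls)
        weights = [self.balls[arm] for arm in arms]
        return self._rng.choices(arms, weights=weights, k=1)[0]

    def record_outcome(self, arm, success):
        """Success rewards the allocated arm; failure rewards the other arm."""
        other = next(a for a in self.balls if a != arm)
        self.balls[arm if success else other] += 1


urn = PlayTheWinnerUrn(seed=5)
urn.record_outcome("A", success=True)
urn.record_outcome("B", success=False)
print(urn.balls)  # {'A': 3, 'B': 1}
```

After these two outcomes the next participant has a 3 in 4 chance of receiving treatment A.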

For multi-centred trials, how to handle the randomisations across the centres should be considered at this point. Do all centres need to be completely balanced? Are all centres the same size? Considering the various centres as stratification variables is one way of dealing with more than one centre.

Implementing the chosen randomisation method

Once the method of randomisation has been established the next important step is to consider how to implement it. The recommended way is to enlist the services of a central randomisation office that can offer robust, validated techniques with the security and back-up needed to implement many of the methods proposed today. How the method is implemented must be as clearly reported as the method chosen. As part of the implementation it is important to keep the allocations concealed, both those already done and any future ones, from as many people as possible. This helps prevent selection bias: a clinician may withhold a participant if they believe that, based on previous allocations, the next allocation would not be the “preferred” one – see the section below on subversion.

Part of the trial design will be to note exactly who should know what about how each participant has been allocated. Researchers and participants may be equally blinded, but that is not always the case.

For example, in a blinded trial there may be researchers who do not know which group the participants have been allocated to. This enables them to conduct the assessments without any bias for the allocation. They may, however, start to guess, on the basis of the results they see. A measure of blinding may be incorporated for the researchers to indicate whether they have remained blind to the treatment allocated. This can be in the form of a simple scale tool for the researcher to indicate how confident they are in knowing which allocated group the participant is in by the end of an assessment. With psychosocial interventions it is often impossible to hide from the participants, let alone the clinicians, which treatment group they have been allocated to.

In a drug trial where a placebo can be prescribed a coded system can ensure that neither patients nor researchers know which group is which until after the analysis stage.

With any level of blinding there may be a requirement to unblind participants or clinicians at any point in the trial, and there should be a documented procedure drawn up on how to unblind a particular participant without risking the unblinding of a trial. For drug trials in particular, the methods for unblinding a participant must be stated in the trial protocol. Wherever possible the data analysts and statisticians should remain blind to the allocation until after the main analysis has taken place.

Blinding should not be confused with allocation concealment. Blinding prevents performance and ascertainment bias within a trial, while allocation concealment prevents selection bias. Bias introduced by poor allocation concealment may be thought of as a predictive bias, trying to influence the results from the outset, while the biases introduced by non-blinding can be thought of as a reactive bias, creating causal links in outcomes because of being in possession of information about the treatment group.

In the literature on randomisation there are numerous tales of how allocation schemes have been subverted by clinicians trying to do the best for the trial or for their patient or both. This includes anecdotal tales of clinicians holding sealed envelopes containing the allocations up to X-ray lights and confessing to breaking into locked filing cabinets to get at the codes [3]. This type of behaviour has many explanations and reasons, but does raise the question whether these clinicians were in a state of equipoise with regard to the trial, and whether therefore they should really have been involved with the trial. Randomisation schemes and their implications must be signed up to by the whole team and are not something that only the participants need to consent to.


References

1. The 2010 CONSORT statement can be found at http://www.consort-statement.org/consort-statement/.

2. Dumville, J. C., Hahn, S., Miles, J. N. V. and Torgerson, D. J. (2006) The use of unequal randomisation ratios in clinical trials: A review. Contemporary Clinical Trials, 27, 1–12.

3. Schulz, K. F. (1995) Subverting randomisation in controlled trials. Journal of the American Medical Association, 274, 1456–1458.


Institution for Social and Policy Studies

Why Randomize?

About Randomized Field Experiments

Randomized field experiments allow researchers to scientifically measure the impact of an intervention on a particular outcome of interest.

What is a randomized field experiment?

In a randomized experiment, a study sample is divided into one group that will receive the intervention being studied (the treatment group) and another group that will not receive the intervention (the control group). For instance, a study sample might consist of all registered voters in a particular city. This sample will then be randomly divided into treatment and control groups. Perhaps 40% of the sample will be on a campaign’s Get-Out-the-Vote (GOTV) mailing list and the other 60% of the sample will not receive the GOTV mailings. The outcome measured (voter turnout) can then be compared in the two groups. The difference in turnout will reflect the effectiveness of the intervention.
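The 40%/60% split and the turnout comparison can be sketched as follows. The voter identifiers, the function names and the seed are hypothetical; real turnout records would replace the `voted` mapping passed to `difference_in_turnout`.

```python
import random


def assign_fraction(units, treated_fraction, seed=None):
    """Randomly split units into a treatment group of the given fraction and a control group."""
    rng = random.Random(seed)
    shuffled = list(units)
    rng.shuffle(shuffled)
    n_treated = round(len(shuffled) * treated_fraction)
    return set(shuffled[:n_treated]), set(shuffled[n_treated:])


def turnout_rate(voted, group):
    """Share of a group that voted; voted maps each unit to True or False."""
    return sum(voted[unit] for unit in group) / len(group)


def difference_in_turnout(voted, treatment, control):
    """Estimated effect: treatment-group turnout minus control-group turnout."""
    return turnout_rate(voted, treatment) - turnout_rate(voted, control)


voters = range(1000)  # placeholder identifiers for registered voters
treatment, control = assign_fraction(voters, 0.4, seed=1)
print(len(treatment), len(control))  # 400 600
```

Because the split is made by shuffling, no characteristic of a voter can influence which group they land in.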

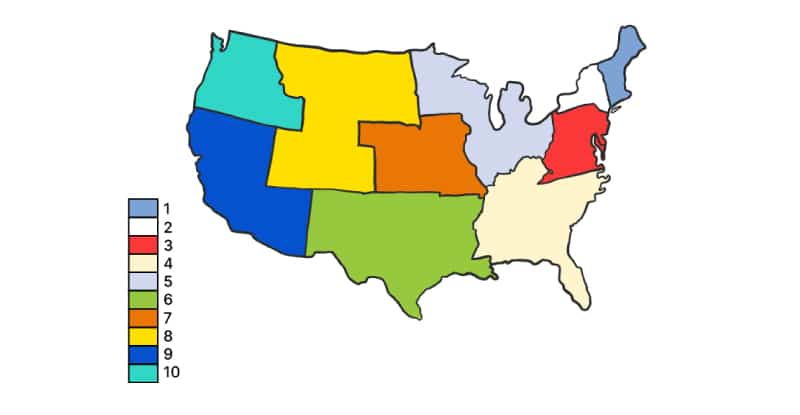

What does random assignment mean?

The key to randomized experimental research design is in the random assignment of study subjects – for example, individual voters, precincts, media markets or some other group – into treatment or control groups. Randomization has a very specific meaning in this context. It does not refer to haphazard or casual choosing of some and not others. Randomization in this context means that care is taken to ensure that no pattern exists between the assignment of subjects into groups and any characteristics of those subjects. Every subject is as likely as any other to be assigned to the treatment (or control) group. Randomization is generally achieved by employing a computer program containing a random number generator.

Randomization procedures differ based upon the research design of the experiment. Individuals or groups may be randomly assigned to treatment or control groups. Some research designs stratify subjects by geographic, demographic or other factors prior to random assignment in order to maximize the statistical power of the estimated effect of the treatment (e.g., GOTV intervention). Information about the randomization procedure is included in each experiment summary on the site.

What are the advantages of randomized experimental designs?

Randomized experimental design yields the most accurate analysis of the effect of an intervention (e.g., a voter mobilization phone drive or a visit from a GOTV canvasser) on voter behavior. By randomly assigning subjects to be in the group that receives the treatment or to be in the control group, researchers can measure the effect of the mobilization method regardless of other factors that may make some people or groups more likely to participate in the political process.

To provide a simple example, say we are testing the effectiveness of a voter education program on high school seniors. If we allow students from the class to volunteer to participate in the program, and we then compare the volunteers’ voting behavior against those who did not participate, our results will reflect something other than the effects of the voter education intervention. This is because there are, no doubt, qualities about those volunteers that make them different from students who do not volunteer. And, most important for our work, those differences may very well correlate with propensity to vote. Instead of letting students self-select, or even letting teachers select students (as teachers may have biases in who they choose), we could randomly assign all students in a given class to be in either a treatment or control group. This would ensure that those in the treatment and control groups differ solely due to chance.

The value of randomization may also be seen in the use of walk lists for door-to-door canvassers. If canvassers choose which houses they will go to and which they will skip, they may choose houses that seem more inviting or they may choose houses that are placed closely together rather than those that are more spread out. These differences could conceivably correlate with voter turnout. Or if house numbers are chosen by selecting those on the first half of a ten-page list, they may be clustered in neighborhoods that differ in important ways from neighborhoods in the second half of the list.

Random assignment controls for both known and unknown variables that can creep in with other selection processes to confound analyses. Randomized experimental design is a powerful tool for drawing valid inferences about cause and effect. The use of randomized experimental design should allow a degree of certainty that the research findings cited in studies that employ this methodology reflect the effects of the interventions being measured and not some other underlying variable or variables.


Random Assignment in Experiments | Introduction & Examples

Published on March 8, 2021 by Pritha Bhandari. Revised on June 22, 2023.

In experimental research, random assignment is a way of placing participants from your sample into different treatment groups using randomization.

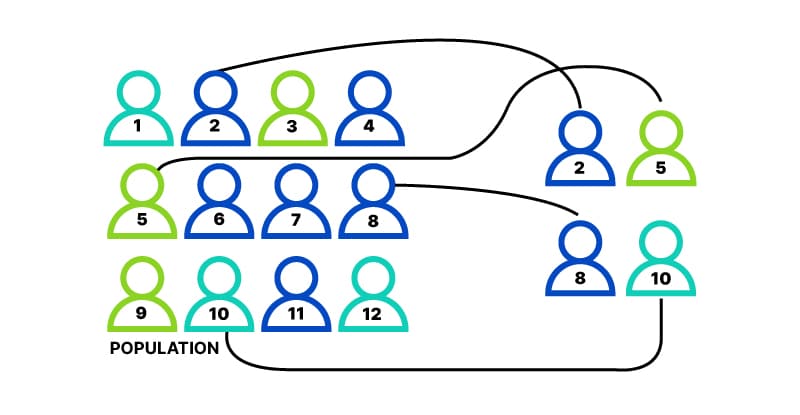

With simple random assignment, every member of the sample has a known or equal chance of being placed in a control group or an experimental group. Studies that use simple random assignment are also called completely randomized designs.

Random assignment is a key part of experimental design. It helps you ensure that all groups are comparable at the start of a study: any differences between them are due to random factors, not research biases like sampling bias or selection bias.

Table of contents

- Why does random assignment matter?

- Random sampling vs random assignment

- How do you use random assignment?

- When is random assignment not used?

Why does random assignment matter?

Random assignment is an important part of control in experimental research, because it helps strengthen the internal validity of an experiment and avoid biases.

In experiments, researchers manipulate an independent variable to assess its effect on a dependent variable, while controlling for other variables. To do so, they often use different levels of an independent variable for different groups of participants.

This is called a between-groups or independent measures design.

For example, in a study comparing dosages, you use three groups of participants that are each given a different level of the independent variable:

- a control group that’s given a placebo (no dosage, to control for a placebo effect),

- an experimental group that’s given a low dosage,

- a second experimental group that’s given a high dosage.

Random assignment helps you make sure that the treatment groups don’t differ in systematic ways at the start of the experiment, as this can seriously affect (and even invalidate) your work.

If you don’t use random assignment, you may not be able to rule out alternative explanations for your results. Suppose, for example, that groups are formed by recruitment location instead:

- participants recruited from cafes are placed in the control group,

- participants recruited from local community centers are placed in the low dosage experimental group,

- participants recruited from gyms are placed in the high dosage group.

With this type of assignment, it’s hard to tell whether the participant characteristics are the same across all groups at the start of the study. Gym-users may tend to engage in more healthy behaviors than people who frequent cafes or community centers, and this would introduce a healthy user bias in your study.

Although random assignment helps even out baseline differences between groups, it doesn’t always make them completely equivalent. There may still be extraneous variables that differ between groups, and there will always be some group differences that arise from chance.

Most of the time, the random variation between groups is low, and, therefore, it’s acceptable for further analysis. This is especially true when you have a large sample. In general, you should always use random assignment in experiments when it is ethically possible and makes sense for your study topic.


Random sampling vs random assignment

Random sampling and random assignment are both important concepts in research, but it’s important to understand the difference between them.

Random sampling (also called probability sampling or random selection) is a way of selecting members of a population to be included in your study. In contrast, random assignment is a way of sorting the sample participants into control and experimental groups.

While random sampling is used in many types of studies, random assignment is only used in between-subjects experimental designs.

Some studies use both random sampling and random assignment, while others use only one or the other.

Random sampling enhances the external validity or generalizability of your results, because it helps ensure that your sample is unbiased and representative of the whole population. This allows you to make stronger statistical inferences.

For example, suppose you are studying an organization with 8000 employees. You use a simple random sample to collect data. Because you have access to the whole population (all employees), you can assign all 8000 employees a number and use a random number generator to select 300 employees. These 300 employees are your full sample.

Random assignment enhances the internal validity of the study, because it ensures that there are no systematic differences between the participants in each group. This helps you conclude that the outcomes can be attributed to the independent variable.

Continuing the example, you divide the sample into two groups:

- a control group that receives no intervention,

- an experimental group that has a remote team-building intervention every week for a month.

You use random assignment to place participants into the control or experimental group. To do so, you take your list of participants and assign each participant a number. Again, you use a random number generator to place each participant in one of the two groups.
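The two steps in this example, random sampling followed by random assignment, can be sketched with Python's standard `random` module. The seed and variable names are arbitrary, and splitting the shuffled sample into two equal halves is our assumption; the text does not say the groups must be the same size.

```python
import random

rng = random.Random(2024)  # fixed seed only so the sketch is reproducible

# Step 1: random sampling. Number the 8000 employees and draw 300 without replacement.
population = list(range(1, 8001))
sample = rng.sample(population, 300)

# Step 2: random assignment. Shuffle the sample and split it into two groups.
shuffled = sample[:]
rng.shuffle(shuffled)
control = shuffled[:150]
experimental = shuffled[150:]

print(len(sample), len(control), len(experimental))  # 300 150 150
```

Sampling decides who is in the study; assignment decides which condition each sampled person receives.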

How do you use random assignment?

To use simple random assignment, you start by giving every member of the sample a unique number. Then, you can use computer programs or manual methods to randomly assign each participant to a group.

- Random number generator: Use a computer program to generate random numbers from the list for each group.

- Lottery method: Place all numbers individually in a hat or a bucket, and draw numbers at random for each group.

- Flip a coin: When you only have two groups, for each number on the list, flip a coin to decide if they’ll be in the control or the experimental group.

- Roll a die: When you have three groups, for each number on the list, roll a die to decide which of the groups they will be in. For example, assume that rolling 1 or 2 lands them in a control group; 3 or 4 in an experimental group; and 5 or 6 in a second control or experimental group.

This type of random assignment is the most powerful method of placing participants in conditions, because each individual has an equal chance of being placed in any one of your treatment groups.
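The coin and die methods listed above translate directly into code. In this sketch the group labels and function names are our own, and a pseudo-random number generator stands in for the physical coin and die.

```python
import random


def coin_assignment(participants, rng):
    """Two groups: one simulated fair coin flip per participant."""
    return {
        p: ("control" if rng.random() < 0.5 else "experimental")
        for p in participants
    }


def die_assignment(participants, rng):
    """Three groups: 1-2, 3-4 and 5-6 on a simulated six-sided die."""
    group_for_roll = {
        1: "control", 2: "control",
        3: "experimental 1", 4: "experimental 1",
        5: "experimental 2", 6: "experimental 2",
    }
    return {p: group_for_roll[rng.randint(1, 6)] for p in participants}


rng = random.Random(8)
participants = list(range(1, 13))  # participant numbers 1 to 12
print(coin_assignment(participants, rng))
print(die_assignment(participants, rng))
```

Both functions give every participant the same chance of each group, but neither guarantees equal group sizes.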

Random assignment in block designs

In more complicated experimental designs, random assignment is only used after participants are grouped into blocks based on some characteristic (e.g., test score or demographic variable). These groupings mean that you need a larger sample to achieve high statistical power.

For example, a randomized block design involves placing participants into blocks based on a shared characteristic (e.g., college students versus graduates), and then using random assignment within each block to assign participants to every treatment condition. This helps you assess whether the characteristic affects the outcomes of your treatment.
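A randomized block design can be sketched as shuffling within each block and then dealing participants out to the conditions in turn. The function name, participant identifiers and condition labels below are hypothetical.

```python
import random
from collections import defaultdict


def blocked_assignment(block_of, conditions=("control", "treatment"), seed=None):
    """Randomly assign participants to conditions separately within each block.

    block_of maps each participant to a block label, e.g. "student" or "graduate".
    """
    rng = random.Random(seed)
    blocks = defaultdict(list)
    for participant, block in block_of.items():
        blocks[block].append(participant)

    assignment = {}
    for members in blocks.values():
        rng.shuffle(members)                      # random order within the block
        for i, participant in enumerate(members):
            assignment[participant] = conditions[i % len(conditions)]
    return assignment


block_of = {f"s{i}": "student" for i in range(6)}
block_of.update({f"g{i}": "graduate" for i in range(6)})
assignment = blocked_assignment(block_of, seed=11)
print(assignment)
```

Every condition then contains the same number of students and of graduates, so the blocking characteristic cannot be confounded with the treatment.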

In an experimental matched design, you use blocking and then match up individual participants from each block based on specific characteristics. Within each matched pair or group, you randomly assign each participant to one of the conditions in the experiment and compare their outcomes.

When is random assignment not used?

Sometimes, it’s not relevant or ethical to use simple random assignment, so groups are assigned in a different way.

When comparing different groups

Sometimes, differences between participants are the main focus of a study, for example, when comparing men and women or people with and without health conditions. Participants are not randomly assigned to different groups, but instead assigned based on their characteristics.

In this type of study, the characteristic of interest (e.g., gender) is an independent variable, and the groups differ based on the different levels (e.g., men, women, etc.). All participants are tested the same way, and then their group-level outcomes are compared.

When it’s not ethically permissible

When studying unhealthy or dangerous behaviors, it’s not possible to use random assignment. For example, if you’re studying heavy drinkers and social drinkers, it’s unethical to randomly assign participants to one of the two groups and ask them to drink large amounts of alcohol for your experiment.

When you can’t assign participants to groups, you can also conduct a quasi-experimental study. In a quasi-experiment, you study the outcomes of pre-existing groups who receive treatments that you may not have any control over (e.g., heavy drinkers and social drinkers). These groups aren’t randomly assigned, but may be considered comparable when some other variables (e.g., age or socioeconomic status) are controlled for.


In experimental research, random assignment is a way of placing participants from your sample into different groups using randomization. With this method, every member of the sample has a known or equal chance of being placed in a control group or an experimental group.

Random selection, or random sampling , is a way of selecting members of a population for your study’s sample.

In contrast, random assignment is a way of sorting the sample into control and experimental groups.

Random sampling enhances the external validity or generalizability of your results, while random assignment improves the internal validity of your study.

Random assignment is used in experiments with a between-groups or independent measures design. In this research design, there’s usually a control group and one or more experimental groups. Random assignment helps ensure that the groups are comparable.

In general, you should always use random assignment in this type of experimental design when it is ethically possible and makes sense for your study topic.

To implement random assignment, assign a unique number to every member of your study’s sample.

Then, you can use a random number generator or a lottery method to randomly assign each number to a control or experimental group. You can also do so manually, by flipping a coin or rolling a die to randomly assign participants to groups.
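The numbering-and-drawing procedure described above can be sketched in a few lines of Python. This is only an illustration: the function name `assign_groups`, the group labels, and the choice to deal participants round-robin after shuffling (which keeps group sizes as equal as possible) are assumptions made for the example, not part of the original text.

```python
import random

def assign_groups(participant_ids, groups=("control", "experimental"), seed=None):
    """Randomly assign each participant ID to one of the groups.

    Hypothetical helper: shuffles the IDs, then deals them round-robin so
    that group sizes differ by at most one.
    """
    rng = random.Random(seed)          # seeded generator for a reproducible list
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)              # random order plays the role of the lottery
    return {pid: groups[i % len(groups)] for i, pid in enumerate(shuffled)}

# Example: 20 participants numbered 1..20
assignment = assign_groups(range(1, 21), seed=42)
for pid in sorted(assignment):
    print(pid, assignment[pid])
```

Fixing the seed makes the allocation list reproducible for auditing; in a real study the list would normally be generated and held by someone who is not recruiting participants.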


Bhandari, P. (2023, June 22). Random Assignment in Experiments | Introduction & Examples. Scribbr. Retrieved June 18, 2024, from https://www.scribbr.com/methodology/random-assignment/


- Open access

- Published: 16 August 2021

A roadmap to using randomization in clinical trials

- Vance W. Berger 1

- Louis Joseph Bour 2

- Kerstine Carter 3

- Jonathan J. Chipman (ORCID: orcid.org/0000-0002-3021-2376) 4, 5

- Colin C. Everett (ORCID: orcid.org/0000-0002-9788-840X) 6

- Nicole Heussen (ORCID: orcid.org/0000-0002-6134-7206) 7, 8

- Catherine Hewitt (ORCID: orcid.org/0000-0002-0415-3536) 9

- Ralf-Dieter Hilgers (ORCID: orcid.org/0000-0002-5945-1119) 7

- Yuqun Abigail Luo 10

- Jone Renteria 11, 12

- Yevgen Ryeznik (ORCID: orcid.org/0000-0003-2997-8566) 13

- Oleksandr Sverdlov (ORCID: orcid.org/0000-0002-1626-2588) 14

- Diane Uschner (ORCID: orcid.org/0000-0002-7858-796X) 15

for the Randomization Innovative Design Scientific Working Group

BMC Medical Research Methodology volume 21, Article number: 168 (2021)


Background

Randomization is the foundation of any clinical trial involving treatment comparison. It helps mitigate selection bias, promotes similarity of treatment groups with respect to important known and unknown confounders, and contributes to the validity of statistical tests. Various restricted randomization procedures with different probabilistic structures and different statistical properties are available. The goal of this paper is to present a systematic roadmap for the choice and application of a restricted randomization procedure in a clinical trial.

Methods

We survey available restricted randomization procedures for sequential allocation of subjects in a randomized, comparative, parallel group clinical trial with equal (1:1) allocation. We explore statistical properties of these procedures, including balance/randomness tradeoff, type I error rate and power. We perform head-to-head comparisons of different procedures through simulation under various experimental scenarios, including cases when common model assumptions are violated. We also provide some real-life clinical trial examples to illustrate the thinking process for selecting a randomization procedure for implementation in practice.

Results

Restricted randomization procedures targeting 1:1 allocation vary in the degree of balance/randomness they induce, and more importantly, they vary in terms of validity and efficiency of statistical inference when common model assumptions are violated (e.g. when outcomes are affected by a linear time trend; measurement error distribution is misspecified; or selection bias is introduced in the experiment). Some procedures are more robust than others. Covariate-adjusted analysis may be essential to ensure validity of the results. Special considerations are required when selecting a randomization procedure for a clinical trial with very small sample size.

Conclusions

The choice of randomization design, data analytic technique (parametric or nonparametric), and analysis strategy (randomization-based or population model-based) are all very important considerations. Randomization-based tests are robust and valid alternatives to likelihood-based tests and should be considered more frequently by clinical investigators.


Various research designs can be used to acquire scientific medical evidence. The randomized controlled trial (RCT) has been recognized as the most credible research design for investigations of the clinical effectiveness of new medical interventions [ 1 , 2 ]. Evidence from RCTs is widely used as a basis for submissions of regulatory dossiers in request of marketing authorization for new drugs, biologics, and medical devices. Three important methodological pillars of the modern RCT are blinding (masking), randomization, and the use of a control group [ 3 ].

While RCTs provide the highest standard of clinical evidence, they are laborious and costly, in terms of both time and material resources. There are alternative designs, such as observational studies with either a cohort or case–control design, and studies using real world evidence (RWE). When properly designed and implemented, observational studies can sometimes produce similar estimates of treatment effects to those found in RCTs, and furthermore, such studies may be viable alternatives to RCTs in many settings where RCTs are not feasible and/or not ethical. In the era of big data, the sources of clinically relevant data are increasingly rich and include electronic health records, data collected from wearable devices, health claims data, etc. Big data creates vast opportunities for development and implementation of novel frameworks for comparative effectiveness research [ 4 ], and RWE studies nowadays can be implemented rapidly and relatively easily. But how credible are the results from such studies?

In 1980, D. P. Byar issued warnings and highlighted potential methodological problems with comparison of treatment effects using observational databases [ 5 ]. Many of these issues persist and have become paramount during the ongoing COVID-19 pandemic, when global scientific efforts are being made to find safe and efficacious vaccines and treatments as soon as possible. While some challenges pertinent to RWE studies are related to the choice of proper research methodology, additional challenges arise from increasing requirements of health authorities and editorial boards of medical journals for investigators to present evidence of transparency and reproducibility of their clinical research. Recently, two top medical journals, the New England Journal of Medicine and the Lancet, retracted two COVID-19 studies that relied on observational registry data [ 6 , 7 ]. The retractions were made at the request of the authors, who were unable to ensure reproducibility of the results [ 8 ]. Undoubtedly, such cases are harmful in many ways. Already approved drugs may be wrongly labeled as “toxic” or “inefficacious”, and the reputation of the drug developers could be blemished or destroyed. Therefore, the highest standards for design, conduct, analysis, and reporting of clinical research studies are now needed more than ever. When treatment effects are modest, yet still clinically meaningful, a double-blind, randomized, controlled clinical trial design helps detect these differences while adjusting for possible confounders and adequately controlling the chances of both false positive and false negative findings.

Randomization in clinical trials has been an important area of methodological research in biostatistics since the pioneering work of A. Bradford Hill in the 1940s and the first published randomized trial comparing streptomycin with a non-treatment control [ 9 ]. Statisticians around the world have worked intensively to elaborate the value, properties, and refinement of randomization procedures with an incredible record of publication [ 10 ]. In particular, a recent EU-funded project (www.IDeAl.rwth-aachen.de) on innovative design and analysis of small population trials has “randomization” as one work package. In 2020, a group of trial statisticians around the world from different sectors formed a subgroup of the Drug Information Association (DIA) Innovative Designs Scientific Working Group (IDSWG) to raise awareness of the full potential of randomization to improve trial quality, validity and rigor (https://randomization-working-group.rwth-aachen.de/).

The aims of the current paper are three-fold. First, we describe major recent methodological advances in randomization, including different restricted randomization designs that have superior statistical properties compared to some widely used procedures such as permuted block designs. Second, we discuss different types of experimental biases in clinical trials and explain how a carefully chosen randomization design can mitigate risks of these biases. Third, we provide a systematic roadmap for evaluating different restricted randomization procedures and selecting an “optimal” one for a particular trial. We also showcase application of these ideas through several real life RCT examples.

The target audience for this paper would be clinical investigators and biostatisticians who are tasked with the design, conduct, analysis, and interpretation of clinical trial results, as well as regulatory and scientific/medical journal reviewers. Recognizing the breadth of the concept of randomization, in this paper we focus on a randomized, comparative, parallel group clinical trial design with equal (1:1) allocation, which is typically implemented using some restricted randomization procedure, possibly stratified by some important baseline prognostic factor(s) and/or study center. Some of our findings and recommendations are generalizable to more complex clinical trial settings. We shall highlight these generalizations and outline additional important considerations that fall outside the scope of the current paper.

The paper is organized as follows. The “Methods” section provides some general background on the methodology of randomization in clinical trials, describes existing restricted randomization procedures, and discusses some important criteria for comparison of these procedures in practice. In the “Results” section, we present our findings from four simulation studies that illustrate the thinking process when evaluating different randomization design options at the study planning stage. The “Conclusions” section summarizes the key findings and important considerations on restricted randomization procedures, and it also highlights some extensions and further topics on randomization in clinical trials.

What is randomization and what are its virtues in clinical trials?

Randomization is an essential component of an experimental design in general and clinical trials in particular. Its history goes back to R. A. Fisher and his classic book “The Design of Experiments” [ 11 ]. Implementation of randomization in clinical trials is due to A. Bradford Hill who designed the first randomized clinical trial evaluating the use of streptomycin in treating tuberculosis in 1946 [ 9 , 12 , 13 ].

Reference [ 14 ] provides a good summary of the rationale and justification for the use of randomization in clinical trials. The randomized controlled trial (RCT) has been referred to as “the worst possible design (except for all the rest)” [ 15 ], indicating that the benefits of randomization should be evaluated in comparison to what we are left with if we do not randomize. Observational studies suffer from a wide variety of biases that may not be adequately addressed even using state-of-the-art statistical modeling techniques.

The RCT in the medical field has several features that distinguish it from experimental designs in other fields, such as agricultural experiments. In the RCT, the experimental units are humans, often patients diagnosed with a potentially fatal disease. These subjects are sequentially enrolled for participation in the study at selected study centers, which have relevant expertise for conducting clinical research. Many contemporary clinical trials are run globally, at multiple research institutions. The recruitment period may span several months or even years, depending on the therapeutic indication and the target patient population. Patients who meet study eligibility criteria must sign the informed consent, after which they are enrolled into the study and, for example, randomized to either experimental treatment E or the control treatment C according to the randomization sequence. In this setup, the choice of the randomization design must be made judiciously, to protect the study from experimental biases and ensure validity of clinical trial results.

The first virtue of randomization is that, in combination with allocation concealment and masking, it helps mitigate selection bias due to an investigator’s potential to selectively enroll patients into the study [ 16 ]. A non-randomized, systematic design such as a sequence of alternating treatment assignments has a major flaw: an investigator, knowing an upcoming treatment assignment in a sequence, may enroll a patient who, in their opinion, would be best suited for this treatment. Consequently, one of the groups may contain a greater number of “sicker” patients and the estimated treatment effect may be biased. Systematic covariate imbalances may increase the probability of false positive findings and undermine the integrity of the trial. While randomization alleviates this flaw of a systematic design, it does not fully eliminate the possibility of selection bias (unless we consider complete randomization, for which each treatment assignment is determined by a flip of a coin, and which is rarely, if ever, used in practice [ 17 ]). Commonly, RCTs employ restricted randomization procedures which sequentially balance treatment assignments while maintaining allocation randomness. A popular choice is the permuted block design, which controls imbalance by making treatment assignments at random in blocks. To minimize the potential for selection bias, one should avoid overly restrictive randomization schemes such as the permuted block design with small block sizes, which closely resembles an alternating treatment sequence.

The second virtue of randomization is its tendency to promote similarity of treatment groups with respect to important known and, even more importantly, unknown confounders. If treatment assignments are made at random, then by the law of large numbers, the average values of patient characteristics should be approximately equal in the experimental and the control groups, and any observed treatment difference should be attributed to the treatment effects, not the effects of the study participants [ 18 ]. However, one can never rule out the possibility that the observed treatment difference is due to chance, e.g. as a result of random imbalance in some patient characteristics [ 19 ]. Although random covariate imbalances can occur in clinical trials of any size, such imbalances do not compromise the validity of statistical inference, provided that proper statistical techniques are applied in the data analysis.

Several misconceptions on the role of randomization and balance in clinical trials were documented and discussed by Senn [ 20 ]. One common misunderstanding is that balance of prognostic covariates is necessary for valid inference. In fact, different randomization designs induce different degrees of balance in the distributions of covariates, and for a given trial there is always a possibility of observing baseline group differences. A legitimate approach is to pre-specify in the protocol the clinically important covariates to be adjusted for in the primary analysis, apply a randomization design (possibly accounting for selected covariates using pre-stratification or some other approach), and perform a pre-planned covariate-adjusted analysis (such as analysis of covariance for a continuous primary outcome), verifying the model assumptions and conducting additional supportive/sensitivity analyses, as appropriate. Importantly, the pre-specified prognostic covariates should always be accounted for in the analysis, regardless of whether their baseline differences are present or not [ 20 ].

It should be noted that some randomization designs (such as covariate-adaptive randomization procedures) can achieve very tight balance of covariate distributions between treatment groups [ 21 ]. While we address randomization within pre-specified stratifications, we do not address more complex covariate- and response-adaptive randomization in this paper.

Finally, randomization plays an important role in statistical analysis of the clinical trial. The most common approach to inference following the RCT is the invoked population model [ 10 ]. With this approach, one posits that there is an infinite target population of patients with the disease, from which \(n\) eligible subjects are sampled in an unbiased manner for the study and are randomized to the treatment groups. Within each group, the responses are assumed to be independent and identically distributed (i.i.d.), and inference on the treatment effect is performed using some standard statistical methodology, e.g. a two sample t-test for normal outcome data. The added value of randomization is that it makes the assumption of i.i.d. errors more feasible compared to a non-randomized study because it introduces a real element of chance in the allocation of patients.

An alternative approach is the randomization model, in which the implemented randomization itself forms the basis for statistical inference [ 10 ]. Under the null hypothesis of the equality of treatment effects, individual outcomes (which are regarded as not influenced by random variation, i.e. are considered as fixed) are not affected by treatment. Treatment assignments are permuted in all possible ways consistent with the randomization procedure actually used in the trial. The randomization-based p-value is the sum of null probabilities of the treatment assignment permutations in the reference set that yield test statistic values greater than or equal to the experimental value. A randomization-based test can be a useful supportive analysis, free of assumptions of parametric tests and protective against spurious significant results that may be caused by temporal trends [ 14 , 22 ].
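To make the definition of the randomization-based p-value concrete, the following minimal sketch enumerates the reference set for a small trial. It assumes, purely for illustration, that the trial used the random allocation rule (so every sequence with the observed number of E assignments is equally likely) and that the test statistic is the absolute difference in group means; the function name and the toy data are hypothetical. For other procedures the sequences in the reference set are generally not equiprobable and would need to be weighted by their null probabilities.

```python
from itertools import combinations
from statistics import mean

def randomization_p_value(outcomes, assigned_e):
    """Exact two-sided randomization p-value, assuming the random allocation rule.

    outcomes   : list of observed outcomes, treated as fixed under the null
    assigned_e : set of indices of subjects actually assigned to treatment E
    """
    n = len(outcomes)
    k = len(assigned_e)

    def stat(e_idx):
        # Absolute difference in means between the E and C groups
        e = [outcomes[i] for i in e_idx]
        c = [outcomes[i] for i in range(n) if i not in e_idx]
        return abs(mean(e) - mean(c))

    observed = stat(set(assigned_e))
    # Reference set: all allocations with k subjects on E, each equally likely
    reference = [stat(set(c)) for c in combinations(range(n), k)]
    extreme = sum(1 for s in reference if s >= observed - 1e-12)
    return extreme / len(reference)

# Toy data (hypothetical): the last four subjects received E
p = randomization_p_value([1, 2, 3, 4, 10, 11, 12, 13], {4, 5, 6, 7})
print(p)
```

Full enumeration is only feasible for small samples; for realistic sample sizes the reference set is usually approximated by Monte Carlo re-randomization with the procedure actually used.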

It is important to note that Bayesian inference has also become a common statistical analysis in RCTs [ 23 ]. Although the inferential framework relies upon subjective probabilities, a study analyzed through a Bayesian framework still relies upon randomization for the other aforementioned virtues [ 24 ]. Hence, the randomization considerations discussed herein have broad application.

What types of randomization methodologies are available?

Randomization is not a single methodology, but a very broad class of design techniques for the RCT [ 10 ]. In this paper, we consider only randomization designs for sequential enrollment clinical trials with equal (1:1) allocation in which randomization is not adapted for covariates and/or responses. The simplest procedure for an RCT is complete randomization design (CRD) for which each subject’s treatment is determined by a flip of a fair coin [ 25 ]. CRD provides no potential for selection bias (e.g. based on prediction of future assignments) but it can result, with non-negligible probability, in deviations from the 1:1 allocation ratio and covariate imbalances, especially in small samples. This may lead to loss of statistical efficiency (decrease in power) compared to the balanced design. In practice, some restrictions on randomization are made to achieve balanced allocation. Such randomization designs are referred to as restricted randomization procedures [ 26 , 27 ].
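The claim that CRD deviates from 1:1 allocation with non-negligible probability in small samples can be checked with an exact binomial calculation. The sketch below is illustrative only; the function name is an assumption, and the imbalance is measured as the difference between the two group sizes.

```python
from math import comb

def crd_prob_abs_imbalance_at_least(n, d):
    """P(|N_E - N_C| >= d) after n fair coin flips (complete randomization)."""
    favourable = 0
    for n_e in range(n + 1):
        if abs(2 * n_e - n) >= d:      # N_E - N_C = 2*N_E - n
            favourable += comb(n, n_e)
    return favourable / 2 ** n

for n in (10, 50, 200):
    p_balanced = 1 - crd_prob_abs_imbalance_at_least(n, 1)
    p_large = crd_prob_abs_imbalance_at_least(n, n // 5)  # imbalance of at least 20% of n
    print(n, round(p_balanced, 4), round(p_large, 4))
```

For `n = 10`, exact balance has probability 252/1024 (about 0.25), and a split of 7:3 or worse has probability 352/1024 (about 0.34), which illustrates why some restriction is usually imposed in small trials.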

Suppose we plan to randomize an even number of subjects \(n\) sequentially between treatments E and C. Two basic designs that equalize the final treatment numbers are the random allocation rule (Rand) and the truncated binomial design (TBD), which were discussed in the 1957 paper by Blackwell and Hodges [ 28 ]. For Rand, any sequence of exactly \(n/2\) E’s and \(n/2\) C’s is equally likely. For TBD, treatment assignments are made with probability 0.5 until one of the treatments receives its quota of \(n/2\) subjects; thereafter all remaining assignments are made deterministically to the opposite treatment.
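The two designs just described can be sketched as follows, coding E as 1 and C as 0. The function names and the use of Python's `random.Random` as the source of randomness are assumptions for illustration.

```python
import random

def random_allocation_rule(n, rng):
    """Rand: every sequence with exactly n/2 E's (1) and n/2 C's (0) is equally likely."""
    seq = [1] * (n // 2) + [0] * (n // 2)
    rng.shuffle(seq)                   # uniform random permutation of the fixed multiset
    return seq

def truncated_binomial_design(n, rng):
    """TBD: fair coin until one arm reaches its quota n/2, then fill the other arm."""
    quota = n // 2
    seq, n_e, n_c = [], 0, 0
    for _ in range(n):
        if n_e == quota:
            arm = 0                    # E is full: remaining assignments go to C
        elif n_c == quota:
            arm = 1                    # C is full: remaining assignments go to E
        else:
            arm = 1 if rng.random() < 0.5 else 0
        seq.append(arm)
        n_e += arm
        n_c += 1 - arm
    return seq

rng = random.Random(2021)
print(random_allocation_rule(10, rng))
print(truncated_binomial_design(10, rng))
```

Both functions always end with `n/2` subjects per arm; they differ in how the probability is spread over sequences, with TBD tending to produce a deterministic tail.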

A common feature of both Rand and TBD is that they aim at the final balance, whereas at intermediate steps it is still possible to have substantial imbalances, especially if \(n\) is large. A long run of a single treatment in a sequence may be problematic if there is a time drift in some important covariate, which can lead to chronological bias [ 29 ]. To mitigate this risk, one can further restrict randomization so that treatment assignments are balanced over time. One common approach is the permuted block design (PBD) [ 30 ], for which random treatment assignments are made in blocks of size \(2b\) ( \(b\) is some small positive integer), with exactly \(b\) allocations to each of the treatments E and C. The PBD is perhaps the oldest (it can be traced back to A. Bradford Hill’s 1951 paper [ 12 ]) and the most widely used randomization method in clinical trials. Often its choice in practice is justified by simplicity of implementation and the fact that it is referenced in the authoritative ICH E9 guideline on statistical principles for clinical trials [ 31 ]. One major challenge with PBD is the choice of the block size. If \(b=1\) , then every pair of allocations is balanced, but every even allocation is deterministic. Larger block sizes increase allocation randomness. The use of variable block sizes has been suggested [ 31 ]; however, PBDs with variable block sizes are also quite predictable [ 32 ]. Another problematic feature of the PBD is that it forces periodic return to perfect balance, which may be unnecessary from the statistical efficiency perspective and may increase the risk of prediction of upcoming allocations.
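A minimal sketch of the PBD with a fixed block size \(2b\) is given below (E coded as 1, C as 0). The function name is an assumption, and truncating the final block when \(2b\) does not divide \(n\) is a choice made for this example.

```python
import random

def permuted_block_design(n, b, rng):
    """PBD: blocks of size 2b, each with exactly b E's (1) and b C's (0) in random order."""
    seq = []
    while len(seq) < n:
        block = [1] * b + [0] * b
        rng.shuffle(block)             # random permutation within the block
        seq.extend(block)
    return seq[:n]                     # final block is truncated if 2b does not divide n

rng = random.Random(7)
print(permuted_block_design(12, 2, rng))   # blocks of size 4
print(permuted_block_design(12, 1, rng))   # blocks of size 2: every even allocation is forced
```

With `b = 1` the second allocation in each pair is always the opposite of the first, which is the predictability problem noted in the text.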

More recent and better alternatives to the PBD are the maximum tolerated imbalance (MTI) procedures [ 33 , 34 , 35 , 36 , 37 , 38 , 39 , 40 , 41 ]. These procedures provide stronger encryption of the randomization sequence (i.e. make it more difficult to predict future treatment allocations in the sequence even knowing the current sizes of the treatment groups) while controlling treatment imbalance at a pre-defined threshold throughout the experiment. A general MTI procedure specifies a certain boundary for treatment imbalance, say \(b>0\) , that cannot be exceeded. If, at a given allocation step the absolute value of imbalance is equal to \(b\) , then one next allocation is deterministically forced toward balance. This is in contrast to PBD which, after reaching the target quota of allocations for either treatment within a block, forces all subsequent allocations to achieve perfect balance at the end of the block. Some notable MTI procedures are the big stick design (BSD) proposed by Soares and Wu in 1983 [ 37 ], the maximal procedure proposed by Berger, Ivanova and Knoll in 2003 [ 35 ], the block urn design (BUD) proposed by Zhao and Weng in 2011 [ 40 ], just to name a few. These designs control treatment imbalance within pre-specified limits and are more immune to selection bias than the PBD [ 42 , 43 ].
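As one example of an MTI procedure, the big stick design can be sketched as below. The sketch assumes the commonly described form of the design: a fair coin is used whenever the absolute imbalance is below the boundary \(b\), and the next assignment is forced toward balance when the boundary is reached. The function name is hypothetical.

```python
import random

def big_stick_design(n, b, rng):
    """Big stick design sketch with MTI boundary b (E coded as 1, C as 0)."""
    seq, d = [], 0                     # d tracks the imbalance N_E - N_C
    for _ in range(n):
        if d == b:
            arm = 0                    # too many E's: force C
        elif d == -b:
            arm = 1                    # too many C's: force E
        else:
            arm = 1 if rng.random() < 0.5 else 0
        seq.append(arm)
        d += 1 if arm == 1 else -1
    return seq

rng = random.Random(1983)
print(big_stick_design(20, 3, rng))
```

Unlike the PBD, only a single assignment is forced when the boundary is hit, and the design never requires a return to exact balance.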

Another important class of restricted randomization procedures is biased coin designs (BCDs). Starting with the seminal 1971 paper of Efron [ 44 ], BCDs have been a hot research topic in biostatistics for 50 years. Efron’s BCD is very simple: at any allocation step, if treatment numbers are balanced, the next assignment is made with probability 0.5; otherwise, the underrepresented treatment is assigned with probability \(p\) , where \(0.5<p\le 1\) is a fixed and pre-specified parameter that determines the tradeoff between balance and randomness. Note that \(p=1\) corresponds to PBD with block size 2. If we set \(p<1\) (e.g. \(p=2/3\) ), then the procedure has no deterministic assignments and treatment allocation will be concentrated around 1:1 with high probability [ 44 ]. Several extensions of Efron’s BCD providing better tradeoff between treatment balance and allocation randomness have been proposed [ 45 , 46 , 47 , 48 , 49 ]; for example, a class of adjustable biased coin designs introduced by Baldi Antognini and Giovagnoli in 2004 [ 49 ] unifies many BCDs in a single framework. A comprehensive simulation study comparing different BCDs has been published by Atkinson in 2014 [ 50 ].
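Efron's rule translates almost directly into code. The following sketch (function name assumed, E coded as 1 and C as 0) follows the description above: probability 0.5 when the arms are balanced, otherwise probability \(p\) for the underrepresented arm.

```python
import random

def efron_bcd(n, p, rng):
    """Efron's biased coin design with bias parameter p, 0.5 < p <= 1."""
    seq, d = [], 0                     # d tracks the imbalance N_E - N_C
    for _ in range(n):
        if d == 0:
            prob_e = 0.5               # balanced: fair coin
        elif d < 0:
            prob_e = p                 # E is underrepresented: favour E
        else:
            prob_e = 1 - p             # C is underrepresented: favour C
        arm = 1 if rng.random() < prob_e else 0
        seq.append(arm)
        d += 1 if arm == 1 else -1
    return seq

rng = random.Random(1971)
print(efron_bcd(20, 2 / 3, rng))
print(efron_bcd(20, 1.0, rng))         # p = 1 reproduces a PBD with block size 2
```

Note that for \(p<1\) the imbalance is not bounded, only pulled toward zero, which is one way in which BCDs differ from MTI procedures.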

Finally, urn models provide a useful mechanism for RCT designs [ 51 ]. Urn models apply some probabilistic rules to sequentially add/remove balls (representing different treatments) in the urn, to balance treatment assignments while maintaining the randomized nature of the experiment [ 39 , 40 , 52 , 53 , 54 , 55 ]. A randomized urn design for balancing treatment assignments was proposed by Wei in 1977 [ 52 ]. Newer urn designs, such as the drop-the-loser urn design developed by Ivanova in 2003 [ 55 ], have reduced variability and can attain the target treatment allocation more efficiently. Many urn designs involve parameters that can be fine-tuned to obtain randomization procedures with a desirable balance/randomness tradeoff [ 56 ].
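The urn mechanism can be illustrated with a sketch of an urn design in the spirit of Wei's proposal. The parametrization is an assumption based on the commonly described form, not taken from the text above: the urn starts with `alpha` balls of each type, a ball is drawn and replaced to determine the assignment, and `beta` balls of the opposite type are then added. Ball counts are tracked implicitly through the running group sizes.

```python
import random

def wei_urn_design(n, alpha, beta, rng):
    """Urn design sketch (assumed form): start with alpha balls per arm,
    add beta balls of the opposite arm after each assignment.
    E is coded as 1, C as 0."""
    seq, n_e, n_c = [], 0, 0
    for _ in range(n):
        balls_e = alpha + beta * n_c   # E balls grow with past C assignments
        balls_c = alpha + beta * n_e   # C balls grow with past E assignments
        total = balls_e + balls_c
        prob_e = 0.5 if total == 0 else balls_e / total
        arm = 1 if rng.random() < prob_e else 0
        seq.append(arm)
        n_e += arm
        n_c += 1 - arm
    return seq

rng = random.Random(1977)
print(wei_urn_design(20, 1, 1, rng))
```

Under this form, the pull toward balance is strong early on and weakens as the urn fills, so the procedure approaches complete randomization for large samples.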

What are the attributes of a good randomization procedure?

A “good” randomization procedure is one that helps successfully achieve the study objective(s). Kalish and Begg [ 57 ] state that the major objective of a comparative clinical trial is to provide a precise and valid comparison. To achieve this, the trial design should be such that it: 1) prevents bias; 2) ensures an efficient treatment comparison; and 3) is simple to implement to minimize operational errors. Table 1 elaborates on these considerations, focusing on restricted randomization procedures for 1:1 randomized trials.

Before delving into a detailed discussion, let us introduce some important definitions. Following [ 10 ], a randomization sequence is a random vector \(\boldsymbol{\delta}_{n}=(\delta_{1},\dots,\delta_{n})\), where \(\delta_{i}=1\) if the \(i\)th subject is assigned to treatment E, or \(\delta_{i}=0\) if the \(i\)th subject is assigned to treatment C. A restricted randomization procedure can be defined by specifying a probabilistic rule for the treatment assignment of the \((i+1)\)st subject, \(\delta_{i+1}\), given the past allocations \(\boldsymbol{\delta}_{i}\) for \(i\ge 1\). Let \(N_{E}(i)=\sum_{j=1}^{i}\delta_{j}\) and \(N_{C}(i)=i-N_{E}(i)\) denote the numbers of subjects assigned to treatments E and C, respectively, after \(i\) allocation steps. Then \(D(i)=N_{E}(i)-N_{C}(i)\) is the treatment imbalance after \(i\) allocations. For any \(i\ge 1\), \(D(i)\) is a random variable whose probability distribution is determined by the chosen randomization procedure.
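These definitions map directly onto running sums over a realized sequence. The short sketch below (function name assumed) computes \(N_E(i)\), \(N_C(i)\) and \(D(i)\) for \(i=1,\dots,n\) from a 0/1 sequence.

```python
from itertools import accumulate

def imbalance_path(delta):
    """Return lists N_E(i), N_C(i), D(i) for i = 1..n from a 0/1 sequence delta."""
    n_e = list(accumulate(delta))                         # running count of E assignments
    n_c = [i - e for i, e in enumerate(n_e, start=1)]     # N_C(i) = i - N_E(i)
    d = [e - c for e, c in zip(n_e, n_c)]                 # D(i) = N_E(i) - N_C(i)
    return n_e, n_c, d

n_e, n_c, d = imbalance_path([1, 1, 0, 1, 0, 0])
print(n_e)  # [1, 2, 2, 3, 3, 3]
print(n_c)  # [0, 0, 1, 1, 2, 3]
print(d)    # [1, 2, 1, 2, 1, 0]
```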

Balance and randomness

Treatment balance and allocation randomness are two competing requirements in the design of an RCT. Restricted randomization procedures that provide a good tradeoff between these two criteria are desirable in practice.

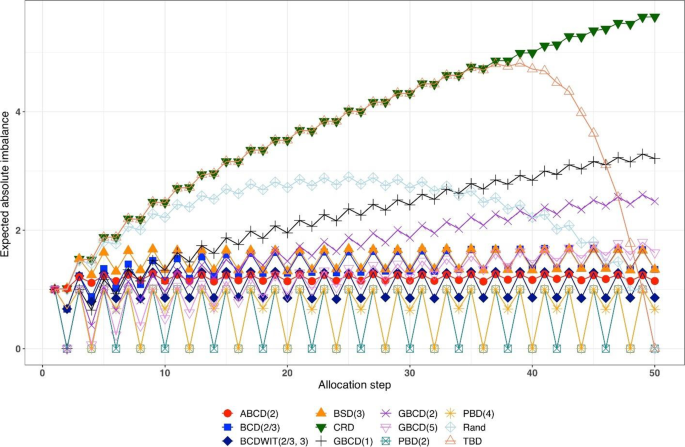

Consider a trial with sample size \(n\) . The absolute value of imbalance, \(\left|D(i)\right|\) \((i=1,\dots,n)\) , provides a measure of deviation from equal allocation after \(i\) allocation steps. \(\left|D(i)\right|=0\) indicates that the trial is perfectly balanced. One can also consider \(\Pr(\vert D\left(i\right)\vert=0)\) , the probability of achieving exact balance after \(i\) allocation steps. In particular \(\Pr(\vert D\left(n\right)\vert=0)\) is the probability that the final treatment numbers are balanced. Two other useful summary measures are the expected imbalance at the \(i\mathrm{th}\) step, \(E\left|D(i)\right|\) and the expected value of the maximum imbalance of the entire randomization sequence, \(E\left(\underset{1\le i\le n}{\mathrm{max}}\left|D\left(i\right)\right|\right)\) .
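For most procedures these summary measures are easiest to obtain by simulation. The sketch below estimates \(\Pr(\vert D(n)\vert=0)\), \(E\left|D(n)\right|\) and the expected maximum imbalance by Monte Carlo for any sequence generator passed in as a function. The interface (`generate(n, rng)` returning a 0/1 list), the function names and the simulation size are assumptions for illustration; complete randomization is included only as an example generator.

```python
import random

def balance_summary(generate, n, n_sim=10_000, seed=0):
    """Monte Carlo estimates of balance metrics for a randomization procedure."""
    rng = random.Random(seed)
    exact_final = 0
    sum_abs_final = 0
    sum_max = 0
    for _ in range(n_sim):
        d, max_abs = 0, 0
        for arm in generate(n, rng):
            d += 1 if arm == 1 else -1       # update D(i)
            max_abs = max(max_abs, abs(d))   # track max |D(i)| along the sequence
        exact_final += (d == 0)
        sum_abs_final += abs(d)
        sum_max += max_abs
    return {
        "pr_final_balance": exact_final / n_sim,
        "expected_abs_final": sum_abs_final / n_sim,
        "expected_max_abs": sum_max / n_sim,
    }

def complete_randomization(n, rng):
    """Example generator: independent fair coin flips."""
    return [1 if rng.random() < 0.5 else 0 for _ in range(n)]

print(balance_summary(complete_randomization, n=50))
```

Any other generator with the same interface, for instance a permuted block or big stick generator, can be passed in to compare procedures on the same metrics.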

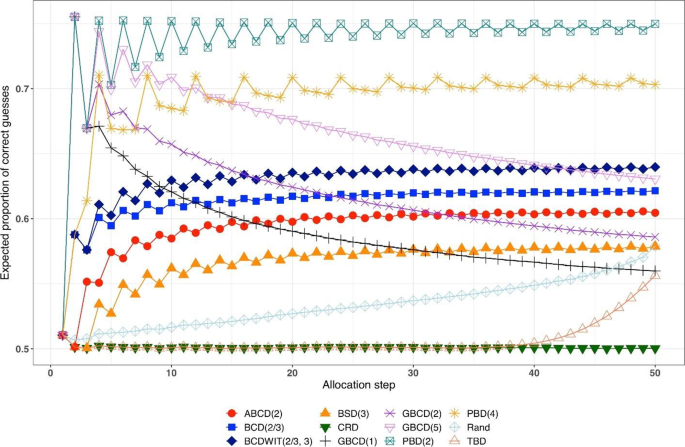

Greater forcing of balance implies lack of randomness. A procedure that lacks randomness may be susceptible to selection bias [ 16 ], which is a prominent issue in open-label trials with a single center or with randomization stratified by center, where the investigator knows the sequence of all previous treatment assignments. A classic approach to quantify the degree of susceptibility of a procedure to selection bias is the Blackwell-Hodges model [ 28 ]. Let \(G_{i}=1\) (or 0) if at the \(i\)th allocation step an investigator makes a correct (or incorrect) guess on treatment assignment \(\delta_{i}\), given past allocations \(\boldsymbol{\delta}_{i-1}\). Then the predictability of the design at the \(i\)th step is the expected value of \(G_{i}\), i.e. \(E\left(G_i\right)=\Pr(G_i=1)\). Blackwell and Hodges [ 28 ] considered the expected bias factor, the difference between the expected total number of correct guesses for a given sequence of random assignments and the corresponding quantity for CRD, for which treatment assignments are made independently with equal probability: \(E(F)=E\left(\sum_{i=1}^{n}G_{i}\right)-n/2\). This quantity is zero for CRD, and it is positive for restricted randomization procedures (greater values indicate higher expected bias). Matts and Lachin [ 30 ] suggested taking the expected proportion of deterministic assignments in a sequence as another measure of lack of randomness.
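The expected bias factor can be estimated by simulating an investigator who always guesses the treatment with fewer assignments so far. The sketch below makes two assumptions that are not in the text: ties are scored as half a correct guess (the expected value of a random guess, used here to reduce simulation noise), and generators follow a hypothetical `generate(n, rng)` interface returning a 0/1 list. Complete randomization and a PBD with block size 2 are included as example generators.

```python
import random

def expected_bias_factor(generate, n, n_sim=5000, seed=0):
    """Monte Carlo estimate of E(F) = E(sum of G_i) - n/2 when the investigator
    guesses the currently underrepresented arm."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_sim):
        d, correct = 0, 0.0
        for arm in generate(n, rng):
            if d == 0:
                correct += 0.5               # tie: a random guess is right half the time
            else:
                guess = 0 if d > 0 else 1    # guess the arm that is behind
                correct += (guess == arm)
            d += 1 if arm == 1 else -1
        total += correct
    return total / n_sim - n / 2

def complete_randomization(n, rng):
    return [1 if rng.random() < 0.5 else 0 for _ in range(n)]

def pbd_block_size_2(n, rng):
    seq = []
    while len(seq) < n:
        first = 1 if rng.random() < 0.5 else 0
        seq.extend([first, 1 - first])       # second assignment in each pair is forced
    return seq[:n]

print(expected_bias_factor(complete_randomization, 10))  # close to 0
print(expected_bias_factor(pbd_block_size_2, 10))        # 2.5, i.e. n/4
```

For the PBD with block size 2, every second assignment is guessed correctly with certainty, so the bias factor is \(n/4\); for complete randomization the estimate fluctuates around zero.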

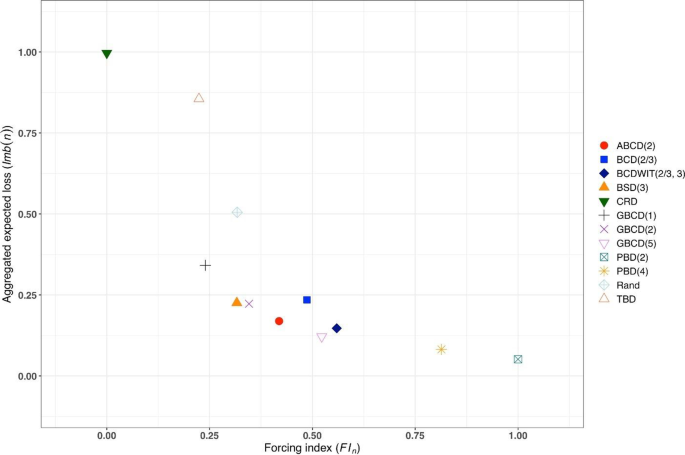

In the literature, various restricted randomization procedures have been compared in terms of balance and randomness [ 50 , 58 , 59 ]. For instance, Zhao et al. [ 58 ] performed a comprehensive simulation study of 14 restricted randomization procedures with different choices of design parameters, for sample sizes in the range of 10 to 300. The key criteria were the maximum absolute imbalance and the correct guess probability. The authors found that the performance of the designs was within a closed region with the boundaries shaped by Efron’s BCD [ 44 ] and the big stick design [ 37 ], signifying that the latter procedure with a suitably chosen MTI boundary can be superior to other restricted randomization procedures in terms of balance/randomness tradeoff. Similar findings confirming the utility of the big stick design were recently reported by Hilgers et al. [ 60 ].

Validity and efficiency

Validity of a statistical procedure essentially means that the procedure provides correct statistical inference following an RCT. In particular, a chosen statistical test is valid, if it controls the chance of a false positive finding, that is, the pre-specified probability of a type I error of the test is achieved but not exceeded. The strong control of type I error rate is a major prerequisite for any confirmatory RCT. Efficiency means high statistical power for detecting meaningful treatment differences (when they exist), and high accuracy of estimation of treatment effects.

Both validity and efficiency are major requirements of any RCT, and both of these aspects are intertwined with treatment balance and allocation randomness. Restricted randomization designs, when properly implemented, provide solid ground for valid and efficient statistical inference. However, a careful consideration of different options can help an investigator to optimize the choice of a randomization procedure for their clinical trial.

Let us start with statistical efficiency. Equal (1:1) allocation frequently maximizes power and estimation precision. To illustrate this, suppose the primary outcomes in the two groups are normally distributed with respective means \({\mu }_{E}\) and \({\mu }_{C}\) and common standard deviation \(\sigma >0\). Then the variance of an efficient estimator of the treatment difference \({\mu }_{E}-{\mu }_{C}\) is equal to \(V=\frac{4{\sigma }^{2}}{n-{L}_{n}}\), where \({L}_{n}=\frac{{\left|D(n)\right|}^{2}}{n}\) is referred to as loss [ 61 ]. Clearly, \(V\) is minimized when \({L}_{n}=0\), or equivalently, \(D\left(n\right)=0\), i.e. the balanced trial.
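
The loss formula can be checked numerically. The sketch below assumes that the efficient estimator is the difference in sample means, whose variance is \({\sigma }^{2}\left(1/{N}_{E}+1/{N}_{C}\right)\) with \({N}_{E}=(n+D(n))/2\) and \({N}_{C}=(n-D(n))/2\). The function names and the values of \(n\) and \(D(n)\) are illustrative.

```python
def loss(n, d):
    """Loss L_n = |D(n)|^2 / n for final imbalance d = N_E - N_C."""
    return d * d / n


def variance_from_loss(n, d, sigma=1.0):
    """V = 4 * sigma^2 / (n - L_n), as in the text."""
    return 4 * sigma ** 2 / (n - loss(n, d))


def variance_direct(n_e, n_c, sigma=1.0):
    """Variance of the difference in sample means: sigma^2 * (1/N_E + 1/N_C)."""
    return sigma ** 2 * (1 / n_e + 1 / n_c)


n = 100
for d in (0, 10, 20, 40):
    n_e, n_c = (n + d) // 2, (n - d) // 2
    # The two columns agree, confirming the algebra behind the loss formula.
    print(d, round(variance_from_loss(n, d), 5), round(variance_direct(n_e, n_c), 5))
```

For example, with \(n=100\) a 60:40 split (\(D(n)=20\)) gives \({L}_{n}=4\), so \(V\) rises from \(0.04{\sigma }^{2}\) to \(4{\sigma }^{2}/96\), an increase of about 4%.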

When the primary outcome follows a more complex statistical model, optimal allocation may be unequal across the treatment groups; however, 1:1 allocation is still nearly optimal for binary outcomes [ 62 , 63 ], survival outcomes [ 64 ], and possibly more complex data types [ 65 , 66 ]. Therefore, a randomization design that balances treatment numbers frequently promotes efficiency of the treatment comparison.

As regards inferential validity, it is important to distinguish two approaches to statistical inference after the RCT – an invoked population model and a randomization model [ 10 ]. For a given randomization procedure, these two approaches generally produce similar results when the assumptions of normal random sampling (and some others) are satisfied, but the randomization model may be more robust when model assumptions are violated; e.g. when outcomes are affected by a linear time trend [ 67 , 68 ]. Another important issue that may interfere with validity is selection bias. Some authors showed theoretically that PBDs with small block sizes may result in serious inflation of the type I error rate under a selection bias model [ 69 , 70 , 71 ]. To mitigate the risk of selection bias, one should ideally take preventative measures, such as blinding/masking, allocation concealment, and avoidance of highly restrictive randomization designs. However, for already completed studies with evidence of selection bias [ 72 ], special statistical adjustments are warranted to ensure validity of the results [ 73 , 74 , 75 ].

Implementation aspects

With the current state of information technology, implementation of randomization in RCTs should be straightforward. Validated randomization systems are emerging, and they can handle randomization designs of increasing complexity for clinical trials that are run globally. However, some important points merit consideration.

The first point has to do with how a randomization sequence is generated and implemented. One should distinguish between advance and adaptive randomization [ 16 ]. Here, by “adaptive” randomization we mean “in-real-time” randomization, i.e. when a randomization sequence is generated not upfront, but rather sequentially, as eligible subjects enroll into the study. Restricted randomization procedures are “allocation-adaptive”, in the sense that the treatment assignment of an individual subject is adapted to the history of previous treatment assignments. While in practice the majority of trials with restricted and stratified randomization use randomization schedules pre-generated in advance, there are some circumstances under which “in-real-time” randomization schemes may be preferred; for instance, clinical trials with high cost of goods and/or shortage of drug supply [ 76 ].

The advance randomization approach includes the following steps: 1) for the chosen randomization design and sample size \(n\) , specify the probability distribution on the reference set by enumerating all feasible randomization sequences of length \(n\) and their corresponding probabilities; 2) select a sequence at random from the reference set according to the probability distribution; and 3) implement this sequence in the trial. While enumeration of all possible sequences and their probabilities is feasible and may be useful for trials with small sample sizes, the task becomes computationally prohibitive (and unnecessary) for moderate or large samples. In practice, Monte Carlo simulation can be used to approximate the probability distribution of the reference set of all randomization sequences for a chosen randomization procedure.
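
For a very small trial, the three steps above can be sketched directly. The example below is an illustration under stated assumptions: it uses Efron's BCD with a coin bias of 2/3 and \(n=6\), both chosen only for the demonstration, and the helper names `bcd_rule`, `reference_set`, and `draw_sequence` are hypothetical.

```python
import itertools
import random


def bcd_rule(d, p=2 / 3):
    """Efron's BCD: probability of assigning E given current imbalance d = N_E - N_C."""
    if d == 0:
        return 0.5
    return p if d < 0 else 1 - p


def reference_set(n, rule):
    """Step 1: enumerate all feasible sequences of length n with their probabilities."""
    ref = {}
    for seq in itertools.product((0, 1), repeat=n):
        prob, d = 1.0, 0
        for delta in seq:
            prob_e = rule(d)
            prob *= prob_e if delta == 1 else 1 - prob_e
            d += 1 if delta == 1 else -1
        if prob > 0:          # keep only sequences the design can produce
            ref[seq] = prob
    return ref


def draw_sequence(ref, rng):
    """Step 2: select one sequence at random according to its probability."""
    seqs = list(ref)
    return rng.choices(seqs, weights=[ref[s] for s in seqs], k=1)[0]


ref = reference_set(6, bcd_rule)
print(len(ref), "feasible sequences, total probability", round(sum(ref.values()), 6))
schedule = draw_sequence(ref, random.Random(2024))
print("Step 3, schedule to implement:", schedule)
```

The enumeration visits all \({2}^{n}\) binary sequences, which is why this approach is only practical for small \(n\).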

A limitation of advance randomization is that a sequence of treatment assignments must be generated upfront, and proper security measures (e.g. blinding/masking) must be in place to protect confidentiality of the sequence. With the adaptive or “in-real-time” randomization, a sequence of treatment assignments is generated dynamically as the trial progresses. For many restricted randomization procedures, the randomization rule can be expressed as \(\Pr(\delta_{i+1}=1)=\left|F\left\{D\left(i\right)\right\}\right|\) , where \(F\left\{\cdot \right\}\) is some non-increasing function of \(D\left(i\right)\) for any \(i\ge 1\) . This is referred to as the Markov property [ 77 ], which makes a procedure easy to implement sequentially. Some restricted randomization procedures, e.g. the maximal procedure [ 35 ], do not have the Markov property.
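
A procedure with the Markov property only needs the current imbalance \(D(i)\) to make the next assignment. The sketch below illustrates this with the big stick design, assuming its usual form: a fair coin is tossed unless the imbalance has reached the MTI boundary \(b\), in which case the under-represented arm is assigned. The class name `SequentialRandomizer` and the boundary value 3 are hypothetical choices for illustration.

```python
import random


def big_stick(b):
    """Return F for the big stick design with MTI boundary b."""
    def f(d):
        if d >= b:
            return 0.0    # too many E: force C
        if d <= -b:
            return 1.0    # too many C: force E
        return 0.5        # otherwise toss a fair coin
    return f


class SequentialRandomizer:
    """Assigns treatments one at a time; the only state kept is the imbalance D(i)."""

    def __init__(self, f, seed=None):
        self.f = f
        self.d = 0
        self.rng = random.Random(seed)

    def assign(self):
        # rng.random() lies in [0, 1), so F = 0 never yields E and F = 1 always does.
        delta = 1 if self.rng.random() < self.f(self.d) else 0
        self.d += 1 if delta == 1 else -1
        return delta


randomizer = SequentialRandomizer(big_stick(3), seed=42)
assignments = [randomizer.assign() for _ in range(12)]
print(assignments, "final imbalance:", randomizer.d)
```

Swapping in a different function \(F\) changes the design without touching the sequential logic, which is what makes such procedures easy to run in real time.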

The second point has to do with how the final data analysis is performed. With an invoked population model, the analysis is conditional on the design and the randomization is ignored in the analysis. With a randomization model, the randomization itself forms the basis for statistical inference. Reference [ 14 ] provides a contemporaneous overview of randomization-based inference in clinical trials. Several other papers provide important technical details on randomization-based tests, including justification for control of type I error rate with these tests [ 22 , 78 , 79 ]. In practice, Monte Carlo simulation can be used to estimate randomization-based p-values [ 10 ].
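
A Monte Carlo estimate of a randomization-based p-value can be sketched as follows. The sketch holds the observed outcomes fixed, redraws the allocation sequence from the same procedure that was used in the trial, and compares the recomputed test statistic with the observed one. It assumes a PBD with block size 4, the absolute difference in means as the test statistic, and the common "add one" convention for the estimate. The data and function names are made up for illustration.

```python
import random
from statistics import mean


def pbd_sequence(n, block_size, rng):
    """Permuted block design: concatenate shuffled, balanced blocks."""
    seq = []
    while len(seq) < n:
        block = [1] * (block_size // 2) + [0] * (block_size // 2)
        rng.shuffle(block)
        seq.extend(block)
    return seq[:n]


def diff_in_means(outcomes, seq):
    """Mean outcome on E minus mean outcome on C."""
    e = [y for y, s in zip(outcomes, seq) if s == 1]
    c = [y for y, s in zip(outcomes, seq) if s == 0]
    return mean(e) - mean(c)


def randomization_p_value(outcomes, observed_seq, draw_seq, n_draws=5000, seed=0):
    """Estimate the two-sided p-value by re-randomizing with the trial's own procedure."""
    rng = random.Random(seed)
    t_obs = abs(diff_in_means(outcomes, observed_seq))
    extreme = 0
    for _ in range(n_draws):
        t_sim = abs(diff_in_means(outcomes, draw_seq(rng)))
        extreme += t_sim >= t_obs - 1e-12   # small tolerance for float ties
    return (extreme + 1) / (n_draws + 1)


# Hypothetical data: 8 participants, allocated by PBD with block size 4.
observed_seq = [1, 0, 0, 1, 0, 1, 1, 0]
outcomes = [5.1, 0.2, 0.4, 5.3, 0.1, 4.9, 5.2, 0.3]
p = randomization_p_value(outcomes, observed_seq, lambda rng: pbd_sequence(8, 4, rng))
print("estimated randomization p-value:", round(p, 3))
```

Here the reference set contains only 36 sequences, so the p-value cannot fall below 2/36 no matter how large the effect is. This shows how the choice of randomization procedure limits the attainable significance level in very small trials.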

A roadmap for comparison of restricted randomization procedures

The design of any RCT starts with formulation of the trial objectives and research questions of interest [ 3 , 31 ]. The choice of a randomization procedure is an integral part of the study design. A structured approach for selecting an appropriate randomization procedure for an RCT was proposed by Hilgers et al. [ 60 ]. Here we outline the thinking process one may follow when evaluating different candidate randomization procedures. Our presented roadmap is by no means exhaustive; its main purpose is to illustrate the logic behind some important considerations for finding an “optimal” randomization design for the given trial parameters.

Throughout, we shall assume that the study is designed as a randomized, two-arm comparative trial with 1:1 allocation, with a fixed sample size \(n\) that is pre-determined based on budgetary and statistical considerations to obtain a definitive assessment of the treatment effect via the pre-defined hypothesis testing. We start with some general considerations which determine the study design:

Sample size ( \(n\) ). For small or moderate studies, exact attainment of the target numbers per group may be essential, because even slight imbalance may decrease study power. Therefore, a randomization design in such studies should equalize well the final treatment numbers. For large trials, the risk of major imbalances is less of a concern, and more random procedures may be acceptable.

The length of the recruitment period and the trial duration. Many studies are short-term and enroll participants fast, whereas some other studies are long-term and may have slow patient accrual. In the latter case, there may be time drifts in patient characteristics, and it is important that the randomization design balances treatment assignments over time.

Level of blinding (masking): double-blind, single-blind, or open-label. In double-blind studies with properly implemented allocation concealment the risk of selection bias is low. By contrast, in open-label studies the risk of selection bias may be high, and the randomization design should provide strong encryption of the randomization sequence to minimize prediction of future allocations.

Number of study centers. Many modern RCTs are implemented globally at multiple research institutions, whereas some studies are conducted at a single institution. In the former case, the randomization is often stratified by center and/or clinically important covariates. In the latter case, especially in single-institution open-label studies, the randomization design should be chosen very carefully, to mitigate the risk of selection bias.

An important point to consider is calibration of the design parameters. Many restricted randomization procedures involve parameters, such as the block size in the PBD, the coin bias probability in Efron’s BCD, the MTI threshold, etc. By fine-tuning these parameters, one can obtain designs with desirable statistical properties. For instance, references [ 80 , 81 ] provide guidance on how to justify the block size in the PBD to mitigate the risk of selection bias or chronological bias. Reference [ 82 ] provides a formal approach to determine the “optimal” value of the parameter \(p\) in Efron’s BCD in both finite and large samples. The calibration of design parameters can be done using Monte Carlo simulations for the given trial setting.
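
As a rough illustration of such a calibration, the sketch below simulates Efron's BCD over a small grid of values of \(p\) and reports the mean final absolute imbalance and the average proportion of correct guesses. The grid, the trial size, the number of simulations, and the guessing strategy (guess the under-represented arm, guess at random on ties) are assumptions made for the example, not recommendations.

```python
import random


def simulate_bcd(n, p, rng):
    """Simulate one BCD(p) trial; return (|D(n)|, number of correct guesses)."""
    d, hits = 0, 0
    for _ in range(n):
        prob_e = 0.5 if d == 0 else (p if d < 0 else 1 - p)
        delta = 1 if rng.random() < prob_e else 0
        # Assumed guessing strategy: under-represented arm, random on ties.
        guess = rng.randint(0, 1) if d == 0 else (1 if d < 0 else 0)
        hits += int(guess == delta)
        d += 1 if delta == 1 else -1
    return abs(d), hits


def calibrate(n, p_grid, n_sims=2000, seed=2024):
    """For each p, estimate mean |D(n)| and the average correct guess probability."""
    rng = random.Random(seed)
    rows = []
    for p in p_grid:
        sims = [simulate_bcd(n, p, rng) for _ in range(n_sims)]
        mean_imbalance = sum(s[0] for s in sims) / n_sims
        mean_guess = sum(s[1] for s in sims) / (n_sims * n)
        rows.append((p, mean_imbalance, mean_guess))
    return rows


for p, imbalance, guess in calibrate(50, [0.5, 0.6, 2 / 3, 0.8, 1.0]):
    print(f"p={p:.2f}  mean |D(n)|={imbalance:.2f}  avg correct guess={guess:.3f}")
```

Larger values of \(p\) should reduce the imbalance at the cost of higher predictability. The investigator then chooses the value that gives an acceptable compromise for the trial at hand.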

Another important consideration is the scope of randomization procedures to be evaluated. As we mentioned already, even one method may represent a broad class of randomization procedures that can provide different levels of balance/randomness tradeoff; e.g. Efron’s BCD covers a wide spectrum of designs, from PBD(2) (if \(p=1\) ) to CRD (if \(p=0.5\) ). One may either prefer to focus on finding the “optimal” parameter value for the chosen design, or be more general and include various designs (e.g. MTI procedures, BCDs, urn designs, etc.) in the comparison. This should be done judiciously, on a case-by-case basis, focusing only on the most reasonable procedures. References [ 50 , 58 , 60 ] provide good examples of simulation studies to facilitate comparisons among various restricted randomization procedures for a 1:1 RCT.

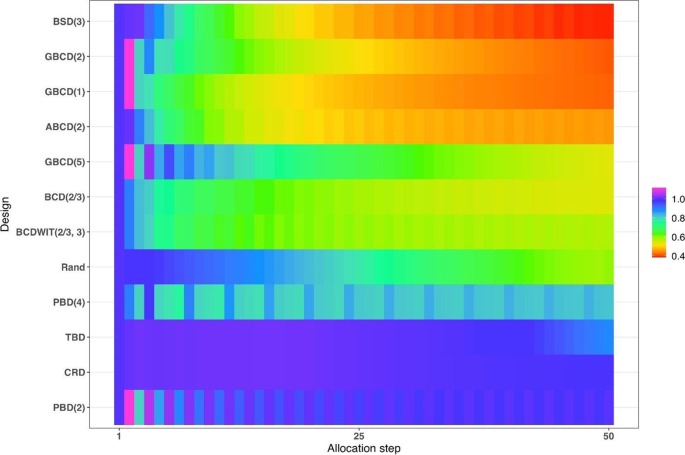

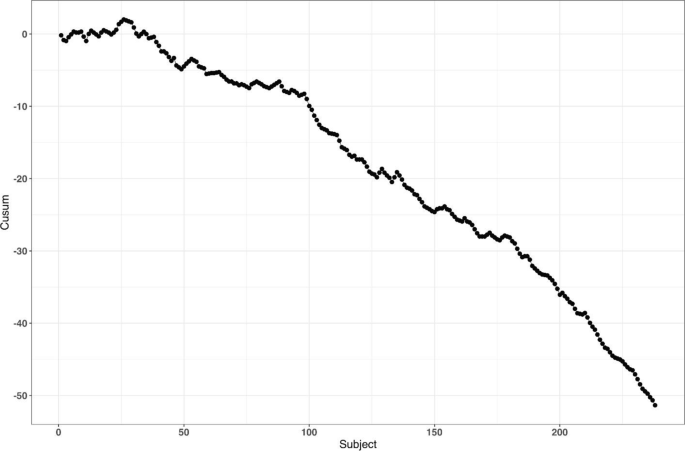

In parallel with the decision on the scope of randomization procedures to be assessed, one should decide upon the performance criteria against which these designs will be compared. Among others, one might think about the two competing considerations: treatment balance and allocation randomness. For a trial of size \(n\) , at each allocation step \(i=1,\dots ,n\) one can calculate expected absolute imbalance \(E\left|D(i)\right|\) and the probability of correct guess \(\Pr(G_i=1)\) as measures of lack of balance and lack of randomness, respectively. These measures can be either calculated analytically (when formulae are available) or through Monte Carlo simulations. Sometimes it may be useful to look at cumulative measures up to the \(i\mathrm{th}\) allocation step ( \(i=1,\dots ,n\) ); e.g. \(\frac{1}{i}{\sum }_{j=1}^{i}E\left|D(j)\right|\) and \(\frac1i\sum\nolimits_{j=1}^i\Pr(G_j=1)\) . For instance, \(\frac{1}{n}{\sum }_{j=1}^{n}{\mathrm{Pr}}({G}_{j}=1)\) is the average correct guess probability for a design with sample size \(n\) . It is also helpful to visualize the selected criteria. Visualizations can be done in a number of ways; e.g. plots of a criterion vs. allocation step, admissibility plots of two chosen criteria [ 50 , 59 ], etc. Such visualizations can help evaluate design characteristics, both overall and at intermediate allocation steps. They may also provide insights into the behavior of a particular design for different values of the tuning parameter, and/or facilitate a comparison among different types of designs.
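
The step-by-step criteria can be estimated by simulation for any procedure whose allocation probability depends only on the current imbalance. The sketch below is one possible implementation under assumptions: the correct guess probability is computed for an investigator who guesses the under-represented arm (at random on ties), and the big stick design with boundary 2 is used purely as an example. The function names are hypothetical.

```python
import random


def big_stick_rule(b):
    """Probability of assigning E under the big stick design with MTI boundary b."""
    return lambda d: 0.0 if d >= b else (1.0 if d <= -b else 0.5)


def step_profiles(prob_e, n, n_sims=5000, seed=11):
    """Estimate E|D(i)| and Pr(G_i = 1) at each step i = 1, ..., n."""
    rng = random.Random(seed)
    abs_imbalance = [0.0] * n
    correct = [0.0] * n
    for _ in range(n_sims):
        d = 0
        for i in range(n):
            delta = 1 if rng.random() < prob_e(d) else 0
            guess = rng.randint(0, 1) if d == 0 else (1 if d < 0 else 0)
            correct[i] += int(guess == delta)   # guess is made before the assignment
            d += 1 if delta == 1 else -1
            abs_imbalance[i] += abs(d)          # imbalance after the assignment
    return [x / n_sims for x in abs_imbalance], [x / n_sims for x in correct]


def cumulative_average(values):
    """Running mean: (1/i) * sum of the first i values, for each i."""
    out, running = [], 0.0
    for i, v in enumerate(values, start=1):
        running += v
        out.append(running / i)
    return out


e_abs, pr_guess = step_profiles(big_stick_rule(2), 20)
print("E|D(i)|        :", [round(x, 2) for x in e_abs[:6]])
print("Pr(G_i = 1)    :", [round(x, 2) for x in pr_guess[:6]])
print("avg guess prob :", round(cumulative_average(pr_guess)[-1], 3))
```

The resulting lists can be passed to any plotting tool to produce plots of a criterion against the allocation step.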

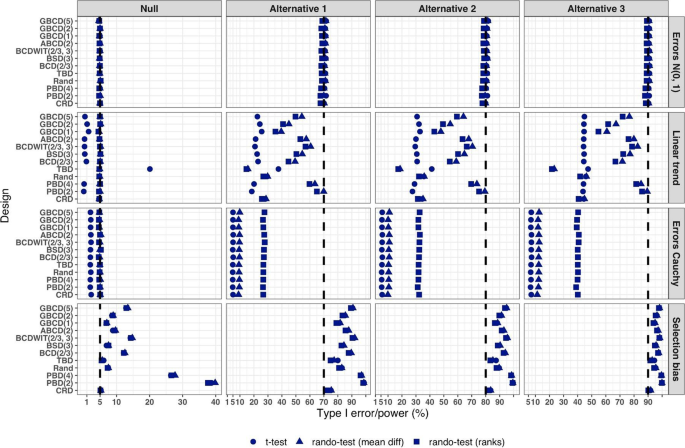

Another way to compare the merits of different randomization procedures is to study their inferential characteristics such as type I error rate and power under different experimental conditions. Sometimes this can be done analytically, but a more practical approach is to use Monte Carlo simulation. The choice of the modeling and analysis strategy will be context-specific. Here we outline some considerations that may be useful for this purpose:

Data generating mechanism. To simulate individual outcome data, some plausible statistical model must be posited. The form of the model will depend on the type of outcomes (e.g. continuous, binary, time-to-event, etc.), covariates (if applicable), the distribution of the measurement error terms, and possibly some additional terms representing selection and/or chronological biases [ 60 ].

True treatment effects. At least two scenarios should be considered: under the null hypothesis (\({H}_{0}\): treatment effects are the same) to evaluate the type I error rate, and under an alternative hypothesis (\({H}_{1}\): there is some true clinically meaningful difference between the treatments) to evaluate statistical power.

Randomization designs to be compared. The choice of candidate randomization designs and their parameters must be made judiciously.

Data analytic strategy. For any study design, one should pre-specify the data analysis strategy to address the primary research question. Statistical tests of significance to compare treatment effects may be parametric or nonparametric, with or without adjustment for covariates.

The approach to statistical inference: population model-based or randomization-based. These two approaches are expected to yield similar results when the population model assumptions are met, but they may be different if some assumptions are violated. Randomization-based tests following restricted randomization procedures will control the type I error at the chosen level if the distribution of the test statistic under the null hypothesis is fully specified by the randomization procedure that was used for patient allocation. This is always the case unless there is a major flaw in the design (such as selection bias whereby the outcome of any individual participant is dependent on treatment assignments of the previous participants).

Overall, there should be a well-thought-out plan capturing the key questions to be answered, the strategy to address them, the choice of statistical software for simulation and visualization of the results, and other relevant details.
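
To make these considerations concrete, the following sketch estimates the type I error rate and power of a simple test under one randomization design. Every modeling choice here is an assumption made for illustration: normally distributed outcomes with known standard deviation, an optional linear time trend, the big stick design with boundary 3, and a two-sided population-based z-test. A real simulation plan would replace each of these with the choices appropriate to the trial.

```python
import math
import random
from statistics import NormalDist, mean


def big_stick_sequence(n, b, rng):
    """Generate one allocation sequence under the big stick design with boundary b."""
    seq, d = [], 0
    for _ in range(n):
        if d >= b:
            delta = 0
        elif d <= -b:
            delta = 1
        else:
            delta = rng.randint(0, 1)
        seq.append(delta)
        d += 1 if delta == 1 else -1
    return seq


def one_trial_rejects(n, effect, sigma, trend, alpha, rng):
    """Simulate one trial and report whether the z-test rejects H0."""
    seq = big_stick_sequence(n, 3, rng)
    # Outcome = treatment effect + linear time trend + normal error.
    y = [effect * s + trend * i / n + rng.gauss(0, sigma) for i, s in enumerate(seq)]
    e = [v for v, s in zip(y, seq) if s == 1]
    c = [v for v, s in zip(y, seq) if s == 0]
    se = sigma * math.sqrt(1 / len(e) + 1 / len(c))
    z = (mean(e) - mean(c)) / se
    return abs(z) > NormalDist().inv_cdf(1 - alpha / 2)


def rejection_rate(n, effect, sigma=1.0, trend=0.0, alpha=0.05, n_sims=2000, seed=3):
    """Proportion of simulated trials in which H0 is rejected."""
    rng = random.Random(seed)
    rejections = sum(one_trial_rejects(n, effect, sigma, trend, alpha, rng)
                     for _ in range(n_sims))
    return rejections / n_sims


print("type I error (H0):", rejection_rate(50, effect=0.0))
print("power (H1)       :", rejection_rate(50, effect=1.0))
```

Running the same functions with a nonzero `trend`, a different design, or a randomization-based test in place of the z-test would address the remaining items in the list above.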